The Story: Nvidia's Uncomfortable Truth About AI Economics

Nvidia's Chief Financial Officer recently made a statement that challenges the prevailing narrative around artificial intelligence and labor replacement. According to the executive, the cost of compute for running AI workloads now exceeds the cost of paying human workers to do the same job. This is a significant admission from one of the world's most powerful chip companies, one that stands to profit enormously from increased AI adoption. Yet the company is acknowledging that the math on AI as a replacement for labor simply doesn't work at current price points.

The statement cuts through years of hype about AI eliminating jobs and automating entire industries. Instead, it reveals a more complex reality: artificial intelligence may be technologically capable of replacing human work, but economically, it isn't there yet. For many real-world applications, it remains cheaper to hire a person than to run the necessary computational models.

Why This Matters: The Economics Inflection Point

This admission represents a critical inflection point in how we should think about AI's actual impact on the economy and labor markets. The narrative has been dominated by two opposing camps: AI optimists predicting massive productivity gains and job displacement, and skeptics arguing AI is overhyped. This statement from Nvidia suggests a more nuanced reality.

The GPU cost problem is real and systemic. Training and running large language models requires enormous computational power. These models demand specialized hardware (GPUs and TPUs) that is expensive to purchase, maintain, and operate. The energy costs alone are staggering. A single training run for a large model can draw megawatts of power for weeks, and compute bills for the largest runs are commonly estimated in the millions of dollars. For inference, which means using a trained model to make predictions, the costs scale with usage. Every query to an AI system has a real computational cost attached to it.
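To make "costs scale with usage" concrete, here is a minimal sketch of the arithmetic. It assumes inference is billed per token, and the function name, the token count per query, and the price are all hypothetical placeholders rather than figures from Nvidia or any provider.

```python
def monthly_inference_cost(queries_per_month: int,
                           tokens_per_query: int,
                           usd_per_million_tokens: float) -> float:
    """Variable inference cost: grows linearly with query volume."""
    total_tokens = queries_per_month * tokens_per_query
    return total_tokens / 1_000_000 * usd_per_million_tokens


# Placeholder inputs for illustration only, not measured prices.
TOKENS_PER_QUERY = 2_000
USD_PER_MILLION_TOKENS = 10.0

for queries in (10_000, 100_000, 1_000_000):
    cost = monthly_inference_cost(queries, TOKENS_PER_QUERY, USD_PER_MILLION_TOKENS)
    print(f"{queries:>9,} queries/month -> ${cost:,.2f}")
```

Under these assumptions, ten times the traffic means ten times the bill. Unlike a salaried worker, the cost has no flat ceiling as volume grows.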

When you factor in infrastructure, cooling, data center real estate, and specialized engineering talent to maintain these systems, the total cost of ownership for AI workloads climbs well beyond the raw price of the chips. In many cases, paying a skilled human worker, who needs salary, benefits, and office space, is genuinely cheaper than running the AI alternative.
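One way to frame that comparison is cost per completed task. The sketch below is illustrative only: the class names, the split into variable and fixed costs, and every dollar figure are assumptions made up for the example, not data from the statement.

```python
from dataclasses import dataclass


@dataclass
class AIWorkload:
    compute_usd_per_task: float  # GPU time or API charges per task (assumed)
    fixed_usd_per_year: float    # infrastructure, cooling, engineering staff (assumed)
    tasks_per_year: int

    def cost_per_task(self) -> float:
        # Variable compute plus fixed overhead amortized across all tasks.
        return self.compute_usd_per_task + self.fixed_usd_per_year / self.tasks_per_year


@dataclass
class HumanWorker:
    loaded_usd_per_year: float   # salary + benefits + office space (assumed)
    tasks_per_year: int

    def cost_per_task(self) -> float:
        return self.loaded_usd_per_year / self.tasks_per_year


# Hypothetical numbers chosen only to show the mechanics.
ai = AIWorkload(compute_usd_per_task=1.50, fixed_usd_per_year=400_000, tasks_per_year=50_000)
human = HumanWorker(loaded_usd_per_year=90_000, tasks_per_year=12_000)

print(f"AI:    ${ai.cost_per_task():.2f} per task")
print(f"Human: ${human.cost_per_task():.2f} per task")
```

With these made-up inputs the fixed overhead dominates the AI side. Raising `tasks_per_year` or lowering `fixed_usd_per_year` flips the result, which is why the outcome depends on real numbers rather than on the assumption that automation is cheaper.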

This matters because it fundamentally changes business incentives. Companies cannot simply adopt AI to cut labor costs if the math doesn't work. Startups that have built entire business models around AI-as-replacement need to reconsider their unit economics. Investors backing AI-first companies need to ask harder questions about whether the ROI actually materializes.

The statement also matters geopolitically and strategically. If compute costs remain prohibitively expensive, it concentrates AI capabilities among well-capitalized enterprises and wealthy nations that can afford the infrastructure. This shapes who controls AI development and deployment, with serious implications for economic competition and technological sovereignty.

The Root Causes: Why Compute Costs Exploded

Several factors have driven compute costs to unsustainable levels. First, model sizes have grown by orders of magnitude in a few years. Larger models generally perform better, but they require at least proportionally more computational resources to train and to serve. This creates a treadmill where capability gains come at the cost of higher resource consumption.

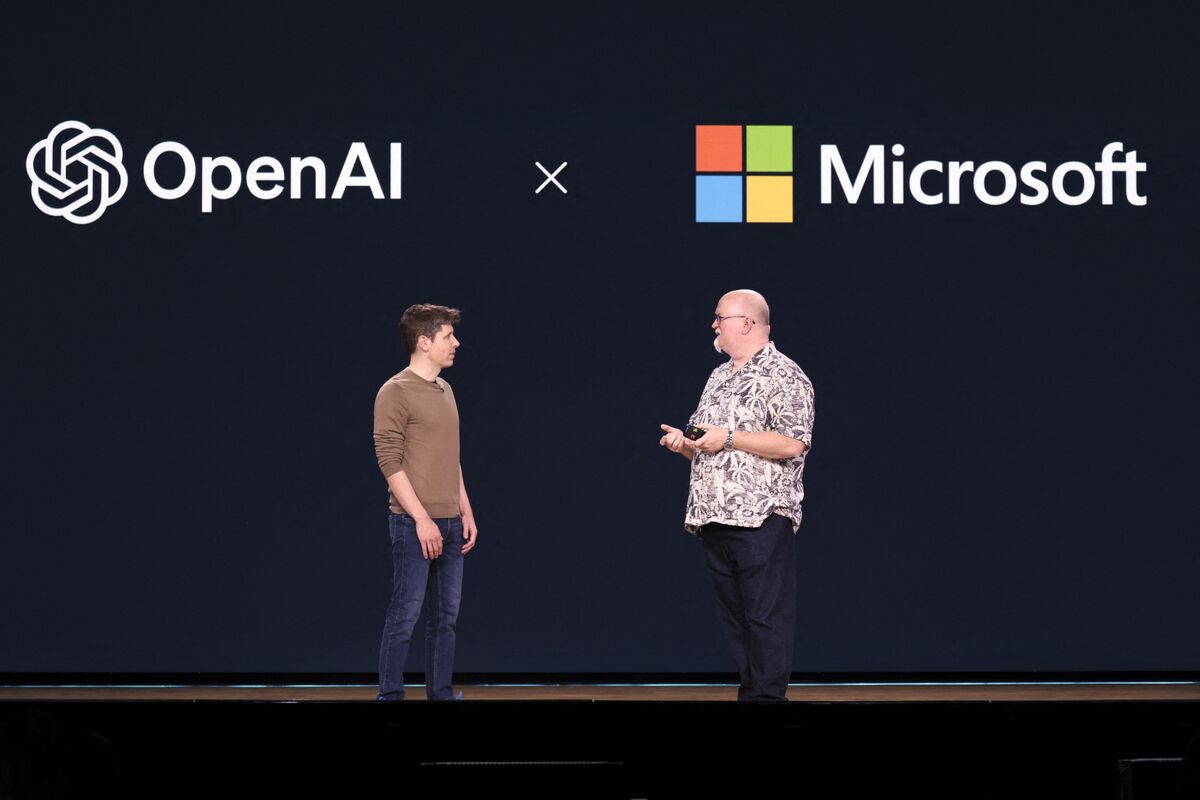

Second, GPU prices themselves have remained elevated. Nvidia dominates the market for AI-capable chips, and demand has far outpaced supply. Chip production has ramped up, but prices have stayed high because data centers, cloud providers, and enterprises all compete for limited GPU inventory.

Third, energy costs have become a primary concern. Running data centers that power AI systems consumes massive amounts of electricity. In regions with expensive power, this becomes a major line item in operational budgets. As AI adoption scales, energy consumption scales with it, pushing electricity bills higher.

Fourth, there's the hidden cost of infrastructure. Deploying AI at scale requires sophisticated data center operations, networking, storage systems, and specialized engineering teams to manage it all. These overhead costs are often invisible in headlines about AI but very real in practice.

What This Means: The Shift Toward Efficiency

If Nvidia's CFO is right, the future of AI likely belongs to efficiency, not scale. This suggests a fundamental strategic shift in how companies approach artificial intelligence.

Smaller, specialized models trained for specific tasks will outcompete massive general-purpose models when cost is factored in. A company might develop a focused model that solves one specific problem very well, using a fraction of the computational resources required by a large language model attempting to do everything.

Edge computing—running AI models on devices rather than in cloud data centers—becomes more attractive. Processing data locally reduces transmission costs and latency while dramatically cutting the scale of centralized compute infrastructure needed.

Optimization becomes a core competitive advantage. Companies that can squeeze more performance from less compute will have lower costs and better margins. This incentivizes research into more efficient algorithms and architectures rather than simply scaling existing approaches.

Human-AI collaboration may prove more cost-effective than pure automation. Rather than replacing workers, companies might use AI to augment human capabilities, letting people focus on high-value tasks while AI handles routine components. This hybrid approach could deliver better economics than either pure human labor or pure AI automation.
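A rough sketch of why a hybrid split could come out ahead: assume tasks fall into "routine" and "complex" buckets, and that AI is cheap on the first but expensive on the second. The bucket names, the share of routine work, and all per-task costs below are hypothetical values picked to illustrate the argument, not measurements.

```python
def blended_cost(routine_share: float, routine_cost: float, complex_cost: float) -> float:
    """Average cost per task for a mix of routine and complex work."""
    if not 0.0 <= routine_share <= 1.0:
        raise ValueError("routine_share must be between 0 and 1")
    return routine_share * routine_cost + (1.0 - routine_share) * complex_cost


# Hypothetical per-task costs in USD.
ROUTINE_SHARE = 0.6
HUMAN_ROUTINE, HUMAN_COMPLEX = 4.0, 12.0
AI_ROUTINE, AI_COMPLEX = 1.0, 20.0  # complex tasks assumed to need big models plus rework

pure_human = blended_cost(ROUTINE_SHARE, HUMAN_ROUTINE, HUMAN_COMPLEX)
pure_ai = blended_cost(ROUTINE_SHARE, AI_ROUTINE, AI_COMPLEX)
hybrid = blended_cost(ROUTINE_SHARE, AI_ROUTINE, HUMAN_COMPLEX)  # AI routine, human complex

print(f"pure human: ${pure_human:.2f}")
print(f"pure AI:    ${pure_ai:.2f}")
print(f"hybrid:     ${hybrid:.2f}")
```

Under these assumptions the hybrid wins because each kind of work goes to whichever side does it more cheaply. If AI were cheaper on both buckets, or on neither, the hybrid would offer no advantage over the better pure option.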

What To Do: Strategic Implications

For business leaders, the takeaway is clear: scrutinize AI ROI claims carefully. Don't assume AI is cheaper simply because it's automated. Run the actual numbers on compute costs, energy usage, and total cost of ownership.

For investors, apply skepticism to business plans that depend on labor replacement as the primary value driver. Instead, look for companies solving efficiency problems or creating genuinely new capabilities that weren't possible before.

For technologists, the signal is to focus on efficiency. The winners will be those building smaller, faster, cheaper models—not necessarily bigger ones.

The era of assuming AI will automatically replace labor is ending. The real story is just beginning.

Now you know more than 99% of people. — Sara Plaintext