Claude Opus 4.7 setup guide for builders (Claude Code, Cursor, Zed, API, Bedrock, Vertex)

Claude Opus 4.7 is live, and the practical question is not “is it good?” It’s “what exact line do I change in each tool so my team can use it today without breaking workflows?”

This guide is for people already shipping with previous Claude models. You’ll get the concrete model switch for each major surface: Claude Code, Cursor, Zed, direct API, Amazon Bedrock, and Google Cloud Vertex AI.

Use this rollout mindset: pin the new model explicitly, keep a rollback path to your previous model, test on real tasks, then expand traffic. Opus 4.7 is a direct upgrade, but instruction-following and token behavior can differ enough to justify a staged migration.
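The "pin plus rollback" idea can be sketched in a few lines of Python. This is a minimal illustration, not a client library: `send` is a hypothetical callable you would supply (it takes a model ID and a prompt and raises on failure), and the retry count is an assumption you should tune.

```python
from typing import Callable, Tuple

PRIMARY_MODEL = "claude-opus-4-7"
FALLBACK_MODEL = "claude-opus-4-6"


def call_with_fallback(
    send: Callable[[str, str], str],
    prompt: str,
    max_attempts: int = 2,
) -> Tuple[str, str]:
    """Try the pinned primary model, then fall back to the previous model once.

    `send(model, prompt)` is a hypothetical function wrapping your real client.
    Returns (model_used, response_text).
    """
    for _ in range(max_attempts):
        try:
            return PRIMARY_MODEL, send(PRIMARY_MODEL, prompt)
        except Exception:
            # In real code, catch only your client's retryable error types.
            continue
    try:
        return FALLBACK_MODEL, send(FALLBACK_MODEL, prompt)
    except Exception as exc:
        raise RuntimeError("primary and fallback models both failed") from exc
```

Returning the model that actually answered makes it easy to log how often the fallback fires during the rollout.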

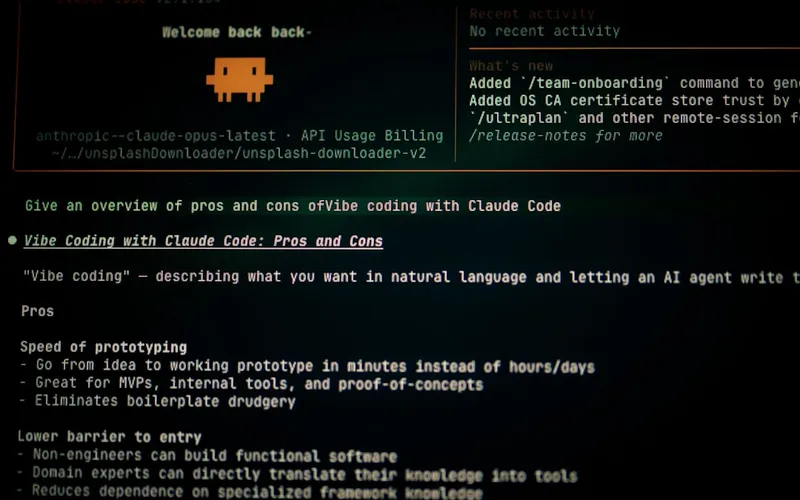

Claude Code

Claude Code is usually the fastest place to feel Opus 4.7 gains on long-running coding tasks. The key changes are selecting Opus 4.7 and choosing an effort level that matches task difficulty.

Anthropic introduced an xhigh effort level between high and max. Start with high for normal coding and xhigh for nasty debugging, refactors, or multi-file architecture work.

    {
      "model": "claude-opus-4-7",
      "effort": "xhigh",
      "fallback_model": "claude-opus-4-6",
      "max_output_tokens": 2200
    }

If your Claude Code environment supports slash commands, use /model claude-opus-4-7 at session start and keep a rollback command ready. For deep code review, try the new /ultrareview flow on high-risk PRs.

Cursor

In Cursor, the important part is setting Opus 4.7 as the model for agent/composer workflows while keeping a cheaper default for lightweight completions if needed. Teams that route everything to one frontier model usually overspend.

Set Opus 4.7 for complex edits, codebase reasoning, and multi-step agent tasks. Keep a secondary model for low-stakes generation.

    {
      "cursor": {
        "models": {
          "primary_agent": "claude-opus-4-7",
          "fallback_agent": "claude-opus-4-6",
          "lightweight": "claude-sonnet-4-6"
        },
        "defaults": {
          "effort": "high",
          "temperature": 0.2
        }
      }
    }

After switching, re-test your most common prompts. Opus 4.7 tends to follow instructions more literally, so old prompts with contradictory constraints can produce “technically correct but unexpected” outputs.

Zed

Zed users should treat this as a model-ID update plus a provider validation step. Confirm that your Anthropic provider key and model pin are both set, then run a quick real-file edit test before a team-wide rollout.

Use explicit model pinning instead of “latest” aliases so your behavior doesn’t drift silently.

    {
      "language_models": {
        "anthropic": {
          "api_key": "${ANTHROPIC_API_KEY}",
          "model": "claude-opus-4-7",
          "fallback_model": "claude-opus-4-6"
        }
      },
      "assistant": {
        "default_model": "claude-opus-4-7"
      }
    }

If Zed is shared across a team, add the model switch to your onboarding docs and keep one canonical config to avoid half the team accidentally testing on older models.
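One way to keep a canonical config honest is a small check that flags any model setting that is not one of your pinned IDs. The sketch below is an assumption-heavy illustration: it treats any string value under a key containing "model" as a model setting, and the alias `claude-opus-latest` in the usage note is made up for the example.

```python
ALLOWED_MODELS = {"claude-opus-4-7", "claude-opus-4-6"}


def find_model_drift(config, path=""):
    """Return (path, value) pairs for model settings outside ALLOWED_MODELS.

    Walks nested dicts and lists. A "model setting" here is any string value
    whose key contains "model" (a heuristic, not a real editor schema).
    """
    problems = []
    if isinstance(config, dict):
        for key, value in config.items():
            child = f"{path}.{key}" if path else key
            if isinstance(value, str) and "model" in key.lower():
                if value not in ALLOWED_MODELS:
                    problems.append((child, value))
            else:
                problems.extend(find_model_drift(value, child))
    elif isinstance(config, list):
        for index, item in enumerate(config):
            problems.extend(find_model_drift(item, f"{path}[{index}]"))
    return problems
```

Run against the config above, it returns an empty list; a config containing `{"assistant": {"default_model": "claude-opus-latest"}}` would be reported with its path, which is handy as a pre-commit or CI step.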

Anthropic API (direct)

For direct API usage, the core change is simple: set model to claude-opus-4-7. Then tune effort, token budgets, and output caps for your workload.

Pricing remains the same as Opus 4.6, but effective spend can still move due to tokenization differences and longer reasoning on higher effort settings. Track cost per successful task, not per request.

    curl https://api.anthropic.com/v1/messages \
      -H "x-api-key: $ANTHROPIC_API_KEY" \
      -H "anthropic-version: 2023-06-01" \
      -H "content-type: application/json" \
      -d '{
        "model": "claude-opus-4-7",
        "max_tokens": 2200,
        "messages": [
          {"role": "user", "content": "Refactor this module and add tests."}
        ]
      }'

Recommended production pattern, written as settings for your own client wrapper (keys such as fallback_model, timeout_ms, and retry are not Messages API request fields):

    {
      "model": "claude-opus-4-7",
      "fallback_model": "claude-opus-4-6",
      "effort": "high",
      "max_output_tokens": 2200,
      "task_budget_tokens": 12000,
      "timeout_ms": 90000,
      "retry": { "max_attempts": 2 }
    }

Amazon Bedrock

Opus 4.7 is available through Bedrock. In practice, you’ll update the model identifier in your Bedrock invocation config and keep region/access policies aligned with your existing Anthropic setup.

Because Bedrock deployments differ by account and region, verify the exact model ID string shown in your Bedrock console for Anthropic Claude Opus 4.7, then pin that exact value in code.

    {
      "bedrock": {
        "provider": "anthropic",
        "model_id": "anthropic.claude-opus-4-7",
        "fallback_model_id": "anthropic.claude-opus-4-6",
        "inference": {
          "maxTokens": 2200,
          "temperature": 0.2
        }
      }
    }

After changing IDs, run a smoke test on your top 5 production prompts and compare latency, tool reliability, and token usage against your current baseline.
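A smoke-test comparison like this can be as simple as summarizing both runs and looking at the relative change. The sketch below assumes you log one record per prompt run with hypothetical field names (`latency_s`, `input_tokens`, `output_tokens`, `tool_calls`, `tool_errors`); adapt them to whatever your logging actually captures.

```python
from statistics import mean


def summarize(runs):
    """Collapse per-prompt run records into three smoke-test metrics."""
    total_calls = sum(r["tool_calls"] for r in runs)
    total_errors = sum(r["tool_errors"] for r in runs)
    return {
        "mean_latency_s": mean(r["latency_s"] for r in runs),
        "mean_tokens": mean(r["input_tokens"] + r["output_tokens"] for r in runs),
        "tool_error_rate": total_errors / total_calls if total_calls else 0.0,
    }


def relative_change(baseline, candidate):
    """Fractional change per metric; positive means the candidate is higher.

    Returns None for a metric whose baseline is zero, since the ratio is undefined.
    """
    return {
        name: (candidate[name] - baseline[name]) / baseline[name] if baseline[name] else None
        for name in baseline
    }
```

Feed it the same prompts on both models, so any difference in the numbers comes from the model change rather than the workload.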

Google Cloud Vertex AI

Opus 4.7 is also available in Vertex AI. The migration is the same pattern: pin the model version explicitly, preserve rollback, and measure task-level economics before full cutover.

In Vertex, model naming can be versioned by publisher and endpoint format. Confirm the exact Opus 4.7 model path in your project’s Model Garden or partner model listing, then use that full identifier in deployment config.

    {
      "vertex_ai": {
        "publisher": "anthropic",
        "model": "claude-opus-4-7",
        "fallback_model": "claude-opus-4-6",
        "generation_config": {
          "temperature": 0.2,
          "max_output_tokens": 2200
        },
        "safety": {
          "enforce_platform_policies": true
        }
      }
    }

If you run agent workflows on Vertex, add per-task routing so only high-complexity jobs hit Opus 4.7. That preserves quality gains without forcing premium model costs onto trivial requests.
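Per-task routing does not need to be clever to pay off. Here is a rough sketch, assuming you can describe each job with a few cheap signals; the `Task` fields and both thresholds are hypothetical placeholders, not recommended values.

```python
from dataclasses import dataclass

PREMIUM_MODEL = "claude-opus-4-7"
LIGHTWEIGHT_MODEL = "claude-sonnet-4-6"  # the secondary model named in the Cursor example


@dataclass
class Task:
    prompt: str
    files_touched: int = 0     # how many files the job is expected to edit
    needs_tools: bool = False  # whether the job runs a multi-step tool loop


def pick_model(task, max_light_files=1, max_light_chars=2000):
    """Send only high-complexity jobs to the premium model.

    A task counts as complex if it needs tools, touches more than
    max_light_files files, or has a prompt longer than max_light_chars.
    """
    is_complex = (
        task.needs_tools
        or task.files_touched > max_light_files
        or len(task.prompt) > max_light_chars
    )
    return PREMIUM_MODEL if is_complex else LIGHTWEIGHT_MODEL
```

Start with conservative thresholds and adjust them from your own logs of which tasks the lightweight model fails.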

Common gotchas across all tools

Most migration issues are operational, not model quality problems.

{

"gotchas": [

"Forgetting fallback model and losing instant rollback",

"Assuming old prompts behave the same under stricter instruction following",

"No token budget caps for long-running agent loops",

"Treating posted price as total-cost guarantee",

"Using one model for every task instead of routing by difficulty"

]

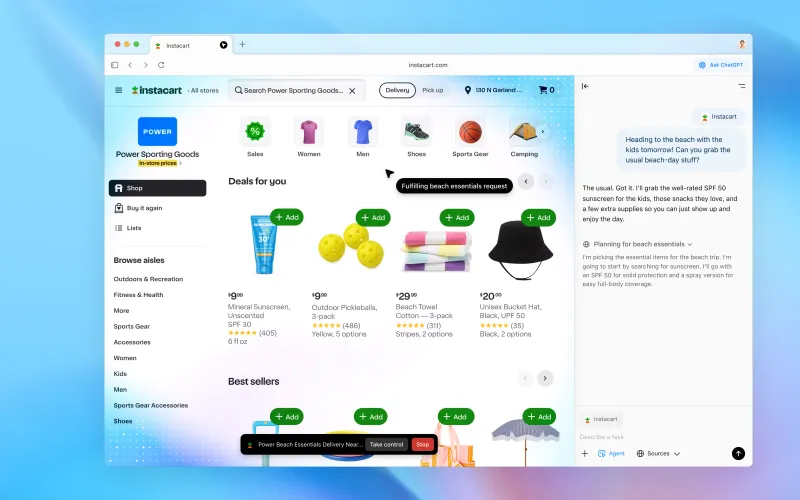

}Also remember the vision upgrade: Opus 4.7 can process higher-resolution images, which is great for dense diagrams and screenshots, but can increase token usage. Downsample when pixel-level detail is unnecessary.
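If you do downsample, the only arithmetic involved is picking target dimensions that preserve aspect ratio. A minimal sketch follows; `max_long_edge` is a value you choose for your workload, not a documented model limit, and the actual resize would be done by whatever image library you already use.

```python
def fit_within(width, height, max_long_edge):
    """Return (width, height) scaled so the longer edge is at most max_long_edge.

    Images that already fit are returned unchanged; aspect ratio is preserved
    up to rounding, and neither side drops below 1 pixel.
    """
    if width <= 0 or height <= 0 or max_long_edge <= 0:
        raise ValueError("width, height, and max_long_edge must be positive")
    long_edge = max(width, height)
    if long_edge <= max_long_edge:
        return width, height
    new_width = max(1, round(width * max_long_edge / long_edge))
    new_height = max(1, round(height * max_long_edge / long_edge))
    return new_width, new_height
```

For example, `fit_within(4000, 3000, 2000)` gives `(2000, 1500)`, while an 800x600 screenshot passes through untouched.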

Recommended rollout plan (quick and safe)

Use a two-phase rollout for every tool above.

    {
      "phase_1": "10% traffic on complex tasks only, 48 hours, full logging",
      "phase_2": "expand to 30-50% where pass-rate and correction-time improve",
      "rollback_trigger": "cost spike >20% without quality lift, or parser/tool regression"
    }

Success metrics to watch: task completion rate, human correction time, tool-call error rate, latency p95, and tokens per successful outcome.
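The rollback trigger can be turned into a check you run at the end of each phase. This is a sketch under stated assumptions: token count stands in for cost, "quality lift" means a higher completion rate, and "tool regression" means a higher tool-call error rate than baseline. The `PhaseStats` fields are hypothetical names for numbers you would pull from your own logs.

```python
from dataclasses import dataclass


@dataclass
class PhaseStats:
    tasks: int              # tasks attempted in the phase
    successes: int          # tasks that passed your acceptance check
    total_tokens: int       # input + output tokens across all tasks
    tool_error_rate: float  # failed tool calls / total tool calls

    @property
    def completion_rate(self):
        return self.successes / self.tasks if self.tasks else 0.0

    @property
    def tokens_per_success(self):
        # No successes means the cost per success is unbounded.
        return self.total_tokens / self.successes if self.successes else float("inf")


def should_roll_back(baseline, candidate, max_cost_increase=0.20):
    """Cost spike above the threshold without a quality lift, or a tool regression.

    Assumes the baseline has at least one success and a non-zero token count.
    """
    cost_change = candidate.tokens_per_success / baseline.tokens_per_success - 1
    quality_lift = candidate.completion_rate > baseline.completion_rate
    tool_regression = candidate.tool_error_rate > baseline.tool_error_rate
    return tool_regression or (cost_change > max_cost_increase and not quality_lift)
```

Measuring tokens per successful outcome rather than tokens per request is what lets a more expensive but more reliable run pass this check.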

Bottom line

Claude Opus 4.7 is straightforward to enable across Claude Code, Cursor, Zed, the Anthropic API, Bedrock, and Vertex. The one-line model change is easy; the real win comes from routing and controls.

Pin claude-opus-4-7, keep a fallback, set effort and budget caps, and validate with real production tasks. Teams that do this get the capability lift without the migration chaos.

Now you know more than 99% of people. — Sara Plaintext