Claude Opus 4.7 upgrade guide (for teams already on Opus 4.6)

If you’re already shipping with Claude Opus 4.6, this is a fast upgrade. The short version: Opus 4.7 is a direct replacement with the same posted price, but behavior and token usage can shift enough to break sloppy rollouts.

The biggest wins are in hard coding tasks, long-running agent workflows, instruction-following, and high-resolution vision. The biggest risks are prompt brittleness, token-count surprises, and overusing high-effort modes without budget controls.

Here’s the clean 5-minute path.

Step 1: Change the model ID first (and keep rollback)

- Update the model ID from your Opus 4.6 value to claude-opus-4-7.

- Do not remove your old model ID yet; keep Opus 4.6 as fallback.

- Roll out behind a feature flag or percentage gate so you can revert instantly.

    {
      "llm": {
        "primary_model": "claude-opus-4-7",
        "fallback_model": "claude-opus-4-6",
        "rollout_percent": 10
      }
    }

Do this before prompt tuning. If the ID is wrong, every downstream test is noise.
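If you don't already have a percentage gate, here is a minimal sketch of one in Python. It assumes a config shaped like the JSON above; the helper names (bucket_for, pick_model) and the idea of hashing a user ID are illustrative choices, not part of any Anthropic SDK.

```python
import hashlib

# Hypothetical in-memory copy of the "llm" config shown above.
LLM_CONFIG = {
    "primary_model": "claude-opus-4-7",
    "fallback_model": "claude-opus-4-6",
    "rollout_percent": 10,
}


def bucket_for(user_id: str) -> int:
    """Map a user ID to a stable bucket in the range [0, 100)."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def pick_model(user_id: str, config: dict = LLM_CONFIG) -> str:
    """Return the primary model for users inside the gate, else the fallback."""
    if bucket_for(user_id) < config["rollout_percent"]:
        return config["primary_model"]
    return config["fallback_model"]
```

Hashing keeps each user on the same model between requests, and setting rollout_percent to 0 is your instant revert.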

Step 2: Update settings.json/config for effort and budgets

Opus 4.7 adds a new xhigh effort level (between high and max). That’s useful for hard tasks, but it can increase output tokens in long agent loops. Set controls now, not after your bill spikes.

- Add explicit effort defaults per workflow.

- Set task budgets (if using Claude Platform task budgets beta).

- Cap max output tokens for agent turns.

- Set timeout and retry values for long-running jobs.

    {
      "model": "claude-opus-4-7",
      "effort": "high",
      "max_output_tokens": 2200,
      "task_budget_tokens": 12000,
      "timeout_ms": 90000,
      "retry": {
        "max_attempts": 2,
        "backoff_ms": 750
      },
      "routing": {
        "hard_debugging": {
          "model": "claude-opus-4-7",
          "effort": "xhigh"
        },
        "simple_qa": {
          "model": "claude-sonnet-4-6",
          "effort": "medium"
        }
      }
    }

If you only do one thing here, add routing. Don’t pay Opus 4.7 prices/latency for low-complexity work.
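A rough sketch of what that routing lookup could look like in application code. The models and effort values are copied from the config above; the Route class, the route_for helper, and the task-type labels coming from some upstream classifier are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    model: str
    effort: str


# Default mirrors the top-level "model" and "effort" in the config above.
DEFAULT_ROUTE = Route(model="claude-opus-4-7", effort="high")

# Per-workflow overrides, mirroring the "routing" section above.
ROUTES = {
    "hard_debugging": Route(model="claude-opus-4-7", effort="xhigh"),
    "simple_qa": Route(model="claude-sonnet-4-6", effort="medium"),
}


def route_for(task_type: str) -> Route:
    """Return the route for a task type, or the default for unknown types."""
    return ROUTES.get(task_type, DEFAULT_ROUTE)
```

Unknown task types fall through to the default, so a new workflow never silently lands on xhigh.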

Step 3: Expect these behavior changes (they can look like regressions)

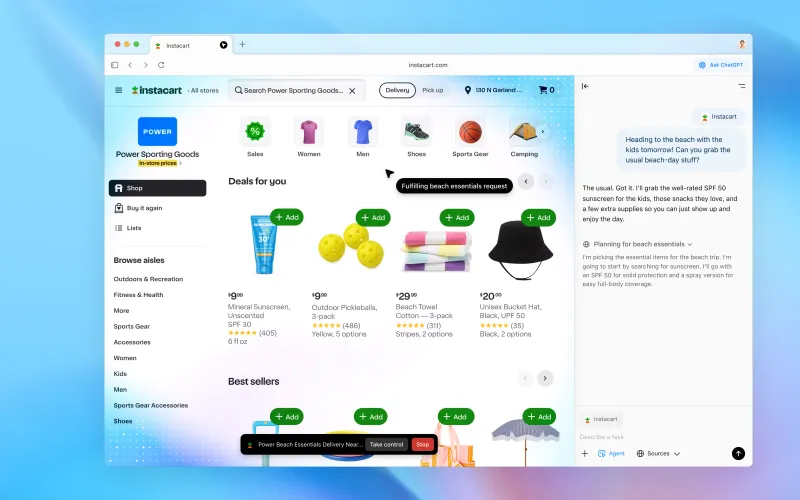

Anthropic explicitly says instruction-following is more literal in Opus 4.7. That sounds great, but it can break prompts written for models that used to “interpret loosely.”

- Literal instruction execution: old prompts with conflicting constraints may now produce unexpected but technically correct outputs.

- Different tokenization: same input can map to roughly 1.0x–1.35x tokens depending on content.

- More autonomous reasoning at higher effort: higher reliability on hard tasks, but potentially more output token usage.

- Higher-fidelity image processing: better visual performance, but larger image token costs if you don’t downsample.

These aren’t bugs. They’re side effects of the upgrade that you need to absorb in config and prompt design.
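To make the tokenization shift concrete, here is a small worked estimate in Python. It takes a token count you measured on Opus 4.6 and applies the rough 1.0x–1.35x range quoted above. The function names and the idea of checking against a fixed input budget are assumptions for illustration; your real multiplier depends on your content.

```python
def input_token_range(tokens_on_4_6: int, low: float = 1.0, high: float = 1.35) -> tuple[int, int]:
    """Estimate the (best, worst) input token count after the upgrade."""
    return round(tokens_on_4_6 * low), round(tokens_on_4_6 * high)


def worst_case_fits(tokens_on_4_6: int, input_budget: int) -> bool:
    """Check whether the worst-case estimate still fits a given input budget."""
    _, worst = input_token_range(tokens_on_4_6)
    return worst <= input_budget
```

For example, a prompt that measured 10,000 tokens on Opus 4.6 could land anywhere from 10,000 to 13,500 tokens, which would overflow a 12,000-token input budget in the worst case.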

Step 4: Re-test your prompts and parsers before full rollout

- Run top 20–30 production prompts on Opus 4.6 vs Opus 4.7.

- Track pass/fail, human correction time, tool-call error rate, and tokens per successful task.

- Specifically test multi-turn workflows where earlier models tended to drift, loop, or stop halfway.

- Validate structured output parsing (JSON/schema checks) against stricter instruction behavior.
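For the structured-output check, a minimal sketch using only the Python standard library. The required keys are a made-up schema, and tolerating a surrounding code fence is one possible policy, not something the model is documented to need.

```python
import json

FENCE = "`" * 3  # a markdown code fence, built this way to keep the example readable
REQUIRED_KEYS = {"status", "summary"}  # hypothetical schema for illustration


def parse_structured(raw: str, required_keys=REQUIRED_KEYS):
    """Return (True, payload) on success or (False, reason) on failure."""
    text = raw.strip()
    if text.startswith(FENCE):
        # Drop the opening fence line and a trailing fence line if present.
        lines = text.splitlines()[1:]
        if lines and lines[-1].strip() == FENCE:
            lines = lines[:-1]
        text = "\n".join(lines)
    try:
        payload = json.loads(text)
    except json.JSONDecodeError as exc:
        return False, f"invalid JSON: {exc.msg}"
    if not isinstance(payload, dict):
        return False, "top-level value is not an object"
    missing = sorted(required_keys - set(payload))
    if missing:
        return False, f"missing keys: {missing}"
    return True, payload
```

Run the same function over outputs from both models and compare failure reasons, not just failure counts.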

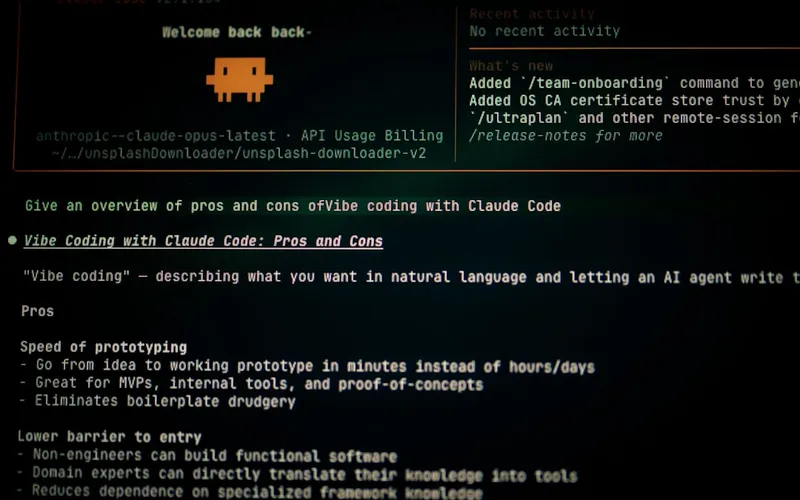

Anthropic and partners reported strong deltas (for example, CursorBench 70% vs 58% and notable tool-error reductions in agent workflows), but your stack is what matters. Trust your eval harness over launch-day excitement.

Breaking changes and gotchas teams hit in week one

- Hidden model mismatch: API service upgraded, background workers still pinned to old model ID.

- Prompt over-constraint: legacy prompts with too many nested rules cause rigid or verbose outputs.

- Budget blindness: no task budget caps in agentic flows leads to runaway spend.

- Parser fragility: regex-only extraction breaks when output wording improves but format shifts.

- Vision overspend: sending full-resolution screenshots when cropped/downsampled images are enough.

- Cyber policy surprises: security-oriented workflows may hit new safeguards; plan for legitimate-use verification paths if relevant.
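The budget-blindness item is the easiest to guard against in your own code. Below is a sketch of a client-side token cap for an agent loop. It is independent of the Claude Platform task budgets beta, and the step callable (returning tokens used and a done flag) is a hypothetical stand-in for one agent turn.

```python
class TaskBudget:
    """Tracks cumulative token usage for one task against a fixed limit."""

    def __init__(self, limit_tokens: int):
        self.limit_tokens = limit_tokens
        self.used_tokens = 0

    def record(self, tokens: int) -> None:
        self.used_tokens += tokens

    @property
    def exhausted(self) -> bool:
        return self.used_tokens >= self.limit_tokens


def run_agent(step, budget: TaskBudget, max_turns: int = 20):
    """Run turns until done, the budget is spent, or max_turns is reached.

    `step` is a hypothetical callable returning (tokens_used, done).
    Returns (outcome, turns_taken).
    """
    turns = 0
    while turns < max_turns and not budget.exhausted:
        tokens_used, done = step()
        budget.record(tokens_used)
        turns += 1
        if done:
            return "done", turns
    outcome = "budget_exhausted" if budget.exhausted else "max_turns"
    return outcome, turns
```

The cap is checked before each turn, so the last turn can overshoot the limit; set the limit with that headroom in mind.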

Cost impact: same rate card, different real bill

Posted pricing stays the same as Opus 4.6: $5/M input, $25/M output. That does not guarantee identical spend.

Your effective cost can rise if tokenization expands on your content type, if effort defaults are too high, or if longer autonomous chains produce more output tokens. Your effective cost can also drop on some tasks if higher accuracy reduces retries and human fixes.

So measure cost per successful task, not cost per request. That’s the only metric that captures reliability gains.
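Here is one way to compute that metric, sketched in Python. The rates are the posted prices quoted above; the attempt tuple shape (input tokens, output tokens, succeeded) is an assumed logging format, so adapt it to whatever your telemetry records.

```python
INPUT_USD_PER_MTOK = 5.0    # posted input price per million tokens
OUTPUT_USD_PER_MTOK = 25.0  # posted output price per million tokens


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of a single request at the posted rates."""
    return (
        input_tokens / 1_000_000 * INPUT_USD_PER_MTOK
        + output_tokens / 1_000_000 * OUTPUT_USD_PER_MTOK
    )


def cost_per_successful_task(attempts):
    """Total spend divided by successful tasks.

    `attempts` is a list of (input_tokens, output_tokens, succeeded) tuples.
    Failed attempts still count toward spend. Returns None if nothing succeeded.
    """
    total = sum(request_cost(i, o) for i, o, _ in attempts)
    successes = sum(1 for _, _, ok in attempts if ok)
    if successes == 0:
        return None
    return total / successes
```

Because failed attempts stay in the numerator, a model that costs more per request can still come out cheaper here if it needs fewer retries.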

When NOT to upgrade yet

- You don’t have rollback controls and cannot risk workflow variance this sprint.

- You lack token/cost observability at task level.

- Your prompts are brittle and parser-dependent, and you can’t allocate time for retuning.

- Your workload is mostly simple Q&A where Opus 4.7’s gains won’t justify spend.

- You’re in a compliance freeze and cannot re-validate behavior changes immediately.

If two or more apply, run a contained pilot first.

Recommended rollout plan (fast and sane)

- Day 1: Enable claude-opus-4-7 for internal users only, with 100% logging.

- Day 2: Route 10% of complex coding/agent traffic to Opus 4.7 at high effort.

- Day 3: Test xhigh only on hardest tasks and compare success-per-token.

- Day 4: Tune prompts for literal compliance; fix parser edge cases.

- Day 5: Expand to 30–50% where quality lift is clear and budget impact is acceptable.

Bottom line

Claude Opus 4.7 is a real upgrade for teams doing difficult software engineering and long-horizon agent work. The model ID switch is easy; the operational migration is where teams win or lose.

Do the basics: pin claude-opus-4-7, keep fallback, add effort and budget controls, retune brittle prompts, and watch task-level economics. If your workload is complex and high-value, upgrading now is usually worth it. If your workload is simple and cost-sensitive, wait and route selectively.

Don’t treat this as a blind model bump. Treat it like an infra change with product impact, and you’ll get the upside without the chaos.

Now you know more than 99% of people. — Sara Plaintext