Microsoft and OpenAI end their exclusive and revenue-sharing deal

Well, well, well. The tech industry's most dramatic power couple just filed for divorce, and honestly, we didn't see this coming from a mile away. Just kidding, we absolutely did. Microsoft and OpenAI ending their exclusive revenue-sharing deal is like watching two people realize they can make more money yelling at each other than holding hands. The 899 points and 767 comments suggest everyone's got popcorn ready for this soap opera.

Let's be real: this breakup was inevitable. Microsoft threw billions at OpenAI, got exclusive access to GPT models, and now that the AI gold rush is in full swing, both parties probably realized they'd be richer fighting over the same market than pretending to be exclusive partners. OpenAI gets to shop itself around to Google, Meta, and literally everyone else. Microsoft gets to chase its own AI ambitions without being tied to a company that increasingly feels like a liability.

The real tea? This deal ending means OpenAI is officially out of Microsoft's pocket and into the wild west of AI competition. Expect faster innovation, pricier APIs, and a lot more chaos. Microsoft will probably regret this the moment OpenAI signs a juicier deal with someone else—but hey, that's what happens when you think you can own the future. Spoiler alert: you can't.

Rating: 8.5/10 for entertainment value. Pure corporate drama masquerading as business strategy.

GitHub Copilot is moving to usage-based billing

Well, well, well. GitHub just pulled the classic Silicon Valley move: "We're making it more fair and transparent!" Translation: your all-you-can-eat plan is getting a meter. Copilot's switching from a flat $10/month subscription to usage-based billing, which sounds reasonable until you realize you're about to discover how expensive your coding habits really are. It's like finding out your electricity bill scales with how many times you refresh your browser.

The 704 upvotes and 513 comments suggest the developer community is having *thoughts* about this. And honestly? Fair. Developers got addicted to unlimited AI-powered code completion, and now GitHub's essentially saying "that'll be, say, $0.10 per 1K tokens, thanks." Nobody likes discovering their favorite tool has a meter on it. The real question is whether this drives people to Claude, OpenAI's API, or one of the increasingly scrappy open-source alternatives.

Here's the thing though: if the pricing is actually reasonable and transparent, this might not be the villain origin story we're making it out to be. Usage-based billing can actually be cheaper for casual users than a flat $10/month. But the devil's in the details, and until everyone's tried it and reported back with their horror stories, the internet will assume the worst. Classic tech drama.
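For the curious, here's a quick back-of-envelope sketch of where "cheaper for casual users" stops being true. Big caveat: the $0.10 per 1K tokens rate is the made-up figure from the joke above, not a published GitHub price, and the function names are ours. Swap in real numbers once they exist.

```python
# Back-of-envelope only. Both prices are the illustrative figures quoted in
# this write-up, NOT a published GitHub price list.
FLAT_CENTS_PER_MONTH = 1000      # the old $10/month flat plan
RATE_CENTS_PER_1K_TOKENS = 10    # hypothetical $0.10 per 1K tokens


def metered_cost_usd(tokens: int) -> float:
    """Monthly bill in dollars under the hypothetical per-token rate."""
    return tokens * RATE_CENTS_PER_1K_TOKENS / 1000 / 100


def break_even_tokens() -> int:
    """Monthly token count at which metered billing equals the flat plan."""
    return FLAT_CENTS_PER_MONTH * 1000 // RATE_CENTS_PER_1K_TOKENS


def cheaper_plan(tokens: int) -> str:
    """Name the cheaper option for a given monthly token count."""
    if tokens < break_even_tokens():
        return "metered"
    if tokens > break_even_tokens():
        return "flat"
    return "tie"


if __name__ == "__main__":
    for monthly_tokens in (20_000, 100_000, 500_000):
        print(monthly_tokens, metered_cost_usd(monthly_tokens), cheaper_plan(monthly_tokens))
```

Under those assumed numbers the break-even sits at 100,000 tokens a month. Whether that's a light month or a single afternoon depends entirely on how you use the thing, which is exactly why the details matter.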

Rating: 7/10 – Solid business move that'll absolutely rattle some cages. The engagement numbers prove people care enough to argue about it, which means GitHub's at least relevant enough to upset.

4TB of voice samples just stolen from 40k AI contractors at Mercor

Well, well, well. Somebody's having a very expensive Tuesday. A 4TB heist of voice samples from 40,000 AI contractors at Mercor is the kind of breach that makes cybersecurity teams reach for the hard liquor at 10 AM. That's not just data—that's the raw material of human voice, which in 2026 is basically digital gold. Someone just scored what amounts to a voice actor unemployment line in one fell swoop.

The real kicker? This isn't some faceless corporation getting pinched. These are contractors, actual humans who sold their vocal cords to the AI industrial complex, probably thinking their voices were in a secure vault somewhere. Spoiler alert: they weren't. 4TB suggests we're talking thousands of hours of audio samples, which means whoever pulled this off just inherited a library that could train voice models, commit audio fraud, or make extremely convincing deepfakes. Neat.
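How many hours is 4TB, roughly? Here's a rough sketch of the math. The 4TB and 40k figures come from the headline; the audio format (16 kHz, 16-bit, mono, uncompressed) is purely our assumption, since nothing here says how the samples were stored.

```python
# Rough sizing only. The audio format below is an ASSUMPTION for illustration;
# the actual encoding of the stolen samples is not stated anywhere in the story.
TOTAL_BYTES = 4 * 10**12     # 4 TB (decimal), from the headline
CONTRACTORS = 40_000         # from the headline

SAMPLE_RATE_HZ = 16_000      # assumed: common speech sample rate
BYTES_PER_SAMPLE = 2         # assumed: 16-bit PCM
CHANNELS = 1                 # assumed: mono


def bytes_per_second() -> int:
    """Storage consumed by one second of audio in the assumed format."""
    return SAMPLE_RATE_HZ * BYTES_PER_SAMPLE * CHANNELS


def total_hours() -> float:
    """Total audio duration if all 4 TB were in the assumed format."""
    return TOTAL_BYTES / bytes_per_second() / 3600


def minutes_per_contractor() -> float:
    """Average audio per contractor, assuming an even split."""
    return TOTAL_BYTES / CONTRACTORS / bytes_per_second() / 60


if __name__ == "__main__":
    print(f"{total_hours():,.0f} hours total")
    print(f"{minutes_per_contractor():.1f} minutes per contractor")
```

If the files were uncompressed like that, "thousands of hours" is the polite estimate, and any compression would only stretch the same 4TB across more audio. Either way, it's a lot of voice.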

The 551 upvotes and 209 comments tell you everything about the current zeitgeist: people are simultaneously horrified and unsurprised. This is peak 2026 energy. We're past the shock phase of data breaches and deep into the "oh no, anyway" phase of collective digital paranoia. Mercor's probably drafting the most spectacular apology email known to humanity while their legal team contemplates the heat death of the universe.

Rating: 7/10 breach—genuinely consequential for the victims, perfectly timed social media fodder, but not quite "global financial system hiccup" tier. Still, rough day to be in voice AI.

OpenAI available at FedRAMP Moderate

OpenAI just cleared FedRAMP Moderate, which is basically the government saying "yeah, we'll let this AI tool into our sandbox." For those who don't speak bureaucracy, FedRAMP Moderate is the federal authorization baseline for cloud services that handle data where a breach would do serious, but not catastrophic, damage. No more sketchy basement server situation. It's the kind of authorization that makes government agencies less nervous about feeding sensitive (though not classified) data into ChatGPT, which is honestly a pretty big deal when you're talking about federal operations.

The timing is chef's kiss. While everyone's arguing about AI regulation, OpenAI quietly did the boring, unsexy work of compliance and security audits. No drama, no congressional hearings, just solid infrastructure that the feds can actually trust. This opens the door for government agencies to use OpenAI's tools without treating them like they're accessing the dark web from a library computer.

Here's the real play though: this is how you win enterprise and government deals. Not with flashy demos or viral moments, but with certifications nobody gets excited about until they actually need them. FedRAMP Moderate won't break the internet, but it might just be the unsexy foundation that lets AI actually matter in places that actually matter.

Rating: 7/10 — Solid infrastructure move that's way more important than it sounds, but it's not exactly the kind of story that gets people out of their chairs.

The next phase of the Microsoft OpenAI partnership

Microsoft and OpenAI just announced they're taking their partnership to the next level, and honestly? It's giving "tech power couple that actually works." Instead of the usual drama-filled splits we see in Silicon Valley, these two are doubling down with a fresh multi-year, multibillion-dollar commitment. Microsoft gets deeper integration with Azure, OpenAI gets the compute infrastructure to actually keep scaling its models, and we all get to watch the most consequential AI infrastructure play in tech right now unfold in real time.

What's actually clever here is how this structure sidesteps the awkwardness that usually kills tech partnerships. OpenAI stays independent (keeps its board, keeps its mission), Microsoft gets preferred access and commercial rights, and both avoid the "who owns what" death spiral that kills most deals. It's basically the friendship bracelet version of a business arrangement—everyone gets to keep their identity while benefiting from the other's strengths.

The timing is *chef's kiss* because it lands when the AI industry is collectively sweating about compute costs and the actual capital required to stay competitive. OpenAI basically said "we need a best friend with a credit card and world-class infrastructure," and Microsoft basically said "say no more." Whether this accelerates AGI, democratizes AI, or just makes both companies richer remains to be seen, but from a pure partnership engineering standpoint? This is how you actually do it.

Rating: 8/10 — Solid strategic move, excellent execution, but the real story won't be told for years.

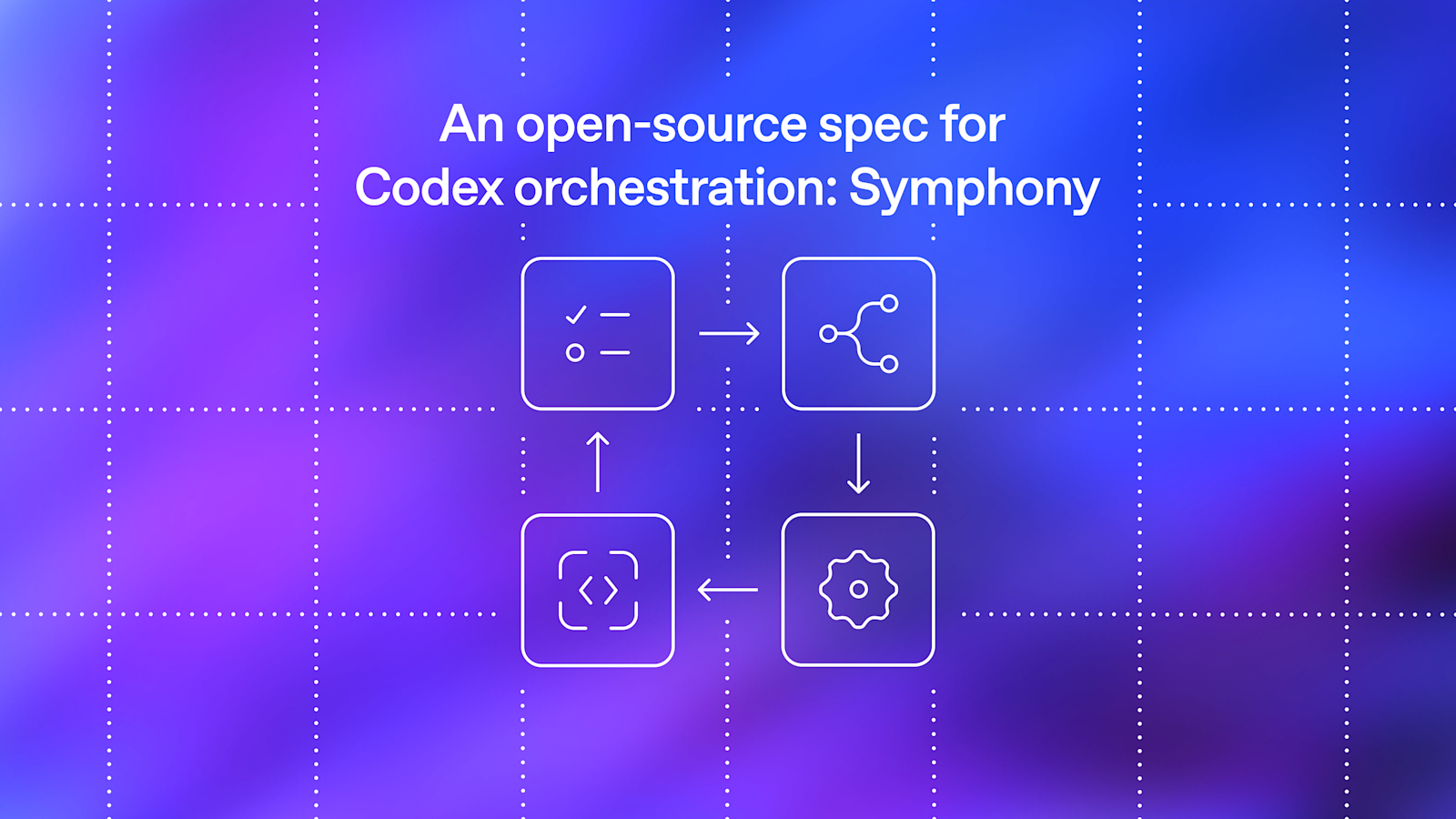

An open-source spec for orchestration: Symphony

Listen, if there's one thing the AI world loves more than another AI announcement, it's a *framework* for managing all those AIs. Enter Symphony, OpenAI's latest gift to the open-source community—essentially the conductor's baton for your AI orchestra. Because apparently we've reached the point where we need orchestration specs for orchestration. Meta? Absolutely.

The beauty here is that Symphony isn't trying to be another proprietary lockdown. It's genuinely open-source, which means developers can actually tinker with how their AI models play together without waiting for someone's quarterly product roadmap. It's like finally giving musicians sheet music instead of just hoping they'll figure out harmony on their own.

The real test? Whether it actually catches on or becomes another abandoned GitHub repo. The spec looks solid and solves a real problem: coordinating multiple AI systems is genuinely messy right now. If Symphony becomes the de facto standard, this could be a legitimately useful move. If it's just OpenAI's way of staying relevant in the open-source conversation? Still pretty savvy.

Rating: 7.5/10. Smart release, practical value, but we'll see if the community actually adopts it.

Choco automates food distribution with AI agents

Choco just dropped an AI plot twist that actually makes sense: instead of AI going full Skynet, it's out here solving the unglamorous problem of getting fresh food to restaurants faster. Using OpenAI's agents, they've built a system that hunts down deals, negotiates with suppliers, and handles logistics without a human having to manually text 47 different vendors at 6 AM. It's like giving restaurant owners a tireless digital sommelier, except for produce.

The real flex here is that this solves a genuine problem in an industry notorious for waste and inefficiency. Restaurants waste less money, customers get better availability, suppliers move inventory faster. Everyone wins. No need for sci-fi drama or philosophical hand-wringing about artificial consciousness. Just good old-fashioned automation doing exactly what it should: making a broken system slightly less broken.

This is the kind of AI story that actually deserves attention because it's boring in the best way possible. It's not generating art or writing poetry. It's automating the tedious middle-man stuff that wastes time and money.

Rating: 7.5/10. Solid execution, meaningful impact, but let's be real: optimizing food supply chains isn't exactly going to go viral. Still, it's proof that AI's best use case might just be handling all the stuff we never wanted to do anyway.

Our principles

OpenAI's "Our Principles" page reads like a fortune cookie designed by a philosophy major who just discovered LinkedIn. They're committed to "safe AI," "beneficial AI," and ensuring their technology serves "humanity"—which is great, truly! It's the kind of statement that makes you nod along while wondering what happens when these lofty ideals meet the messy reality of billion-dollar business incentives and quarterly earnings calls.

Here's the thing: the principles themselves aren't wrong. OpenAI clearly cares about responsible AI development, transparency, and accountability. But there's a delicious irony in publishing soaring ethical commitments while simultaneously racing to build AGI faster than your competitors, training models on scraped internet data, and operating in ways that remain opaque to the public. It's not necessarily hypocritical—it's just very human.

The real test of these principles isn't reading them on a sleek webpage; it's watching how OpenAI makes decisions when safety conflicts with speed, when ethics clash with market share, and when transparency requires admitting they don't have all the answers. Until then, this reads like a beautiful mission statement that deserves a solid 7/10 for ambition and a 5/10 for verifiability.

Join the new AI Agents Vibe Coding Course from Google and Kaggle

Google and Kaggle just dropped "Vibe Coding" into the digital universe, and honestly, the name alone had us doing a double-take. Are we learning to code or vibing at a music festival? Turns out it's an AI Agents intensive course launching in June 2026, which means Big G is betting serious money that developers want to ride the AI wave before the robots take over the beach. The collab between Google and Kaggle is basically the ultimate power couple move in the developer education space.

Here's the thing: if you've been sleeping on AI agents, this course is basically Google's way of saying "wake up, buddy." The timing is chef's kiss—right when everyone's scrambling to understand what AI agents actually do beyond sounding like sci-fi nonsense. Whether you're a seasoned dev or someone who Googled "what is an agent" five minutes ago, this intensive promises to get you up to speed on building, deploying, and probably breaking AI agents in the most responsible way possible.

The whole vibe (pun intended) screams accessibility meets cutting-edge. Google's throwing its weight behind making AI agent development less "MIT PhD required" and more "yeah, I can do this." For anyone looking to future-proof their skills or just ride the hype train with actual substance, this is worth bookmarking. Just don't expect to finish it while vibing: this one requires actual work.

Rating: 8/10 for ambition and execution, minus points for the name making us question reality.

8 Gemini tips for organizing your space (and life)

Google just dropped a "spring cleaning with Gemini" blog post, and honestly? It's giving "ChatGPT told us to write something relatable." Eight tips for organizing your space using an AI chatbot—because apparently we've reached peak convenience when we need an algorithm to tell us to throw out our junk. The irony of using technology to help us deal with the clutter technology created is *chef's kiss*.

Look, the tips are probably solid—AI is basically a well-trained internet that won't judge you for asking it the same question three times. And sure, Gemini can help you brainstorm storage solutions or create decluttering lists. But let's be real: organizing your life still requires you to actually *do the thing*. No amount of AI prompting will fold your laundry or convince you that you don't need seventeen half-empty notebooks. That's all you, my friend.

The post is serviceable marketing dressed up as life advice. It's not bad, just... expected. Google's essentially saying "hey, our AI is helpful!" which, fair. But if you genuinely need an AI to tell you to organize your closet, maybe what you really need is a therapist, not Gemini. That said, if it gets people actually tidying up their spaces, we'll call it a win.

Rating: 6/10. Solid execution, predictable premise, mildly useful.

Stay sharp. — Max Signal