Microsoft's Copilot Just Became the AI Company We All Feared

Rating: 2.5/5 stars for developer respect, 5/5 for cautionary tale. Let's be direct: Microsoft auto-injecting "Co-Authored-by Copilot" into commits without explicit user consent is exactly the kind of corporate AI overreach that erodes developer trust at scale. This isn't a feature; it's a violation. Developers never opted into having their Git history tagged with AI attribution. The 575 Hacker News points and 249 comments screaming about this aren't background noise; they're a warning that enterprises treating developers like products instead of partners will face backlash.

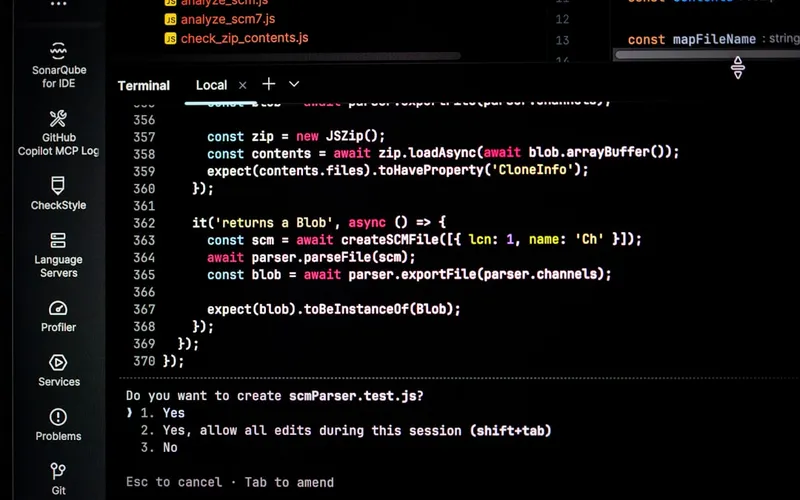

Here's what makes this particularly toxic: VS Code is supposed to be the neutral development platform, not a Trojan horse for AI vendor lock-in. When you silently add a line to someone's commit message, you're not just adding metadata; you're altering their professional record without consent. That's the kind of move that makes security-conscious teams rip Copilot out of their workflows entirely. Microsoft had one job: build trust with the developer community that actually makes its products valuable. Instead, it proved that AI-first companies will prioritize attribution metrics and adoption theater over respecting developer autonomy.
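If you want to check whether your own history picked up the tag, here is a minimal sketch. It assumes the injected line follows Git's usual trailer shape (`Co-authored-by: Name <email>`) and that the name mentions Copilot; the exact text VS Code writes may differ, and the function name and sample addresses are made up for illustration.

```python
import re

# Assumption: the injected line looks like a standard Git trailer,
# "Co-authored-by: Name <email>". Matching is case-insensitive so that
# variants such as "Co-Authored-by:" are caught too.
TRAILER_RE = re.compile(r"^\s*co-authored-by:\s*(.+?)\s*$", re.IGNORECASE)


def find_copilot_trailers(message: str) -> list[str]:
    """Return the Co-authored-by lines in a commit message that name Copilot."""
    hits = []
    for line in message.splitlines():
        match = TRAILER_RE.match(line)
        # Only flag trailers whose author field mentions Copilot,
        # so human co-authors are left alone.
        if match and "copilot" in match.group(1).lower():
            hits.append(line.strip())
    return hits


if __name__ == "__main__":
    # Hypothetical commit message; the email addresses are placeholders.
    sample = (
        "Fix parser bug\n"
        "\n"
        "Co-authored-by: Copilot <copilot@example.com>\n"
        "Co-authored-by: Jane Doe <jane@example.com>\n"
    )
    for trailer in find_copilot_trailers(sample):
        print(trailer)
```

To scan a real repository, one option is to pass each commit message from `git log --format=%B` through the function. Treat the result as a starting point for an audit, not proof of how the line got there.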

The business angle here is brutal for Microsoft but golden for competitors and transparency-first tooling vendors. Developers are waking up to the reality that AI assistance comes with hidden costs: surveillance, attribution creep, and corporate control over their work. This is the exact moment where an AI consulting firm or transparent AI solution provider can position developer trust as a moat. "Your code stays yours. Your commits stay honest. Your workflow stays under your control." That's a pitch that lands harder now than it ever has.

The real scandal isn't that Copilot exists. It's that Microsoft thought it could engineer consent out of existence. Every developer who discovered unauthorized "Co-Authored-by" tags in their commit history just learned a lesson about where their interests sit relative to AI vendors' growth metrics. This isn't the future of development; it's a masterclass in how to poison the well with a single poorly considered feature. Rate this behavior for what it is: a trust destroyer masquerading as convenience.

Stay sharp. — Max Signal