Rating: 8.5/10 - This is the most honest thing OpenAI has published in months.

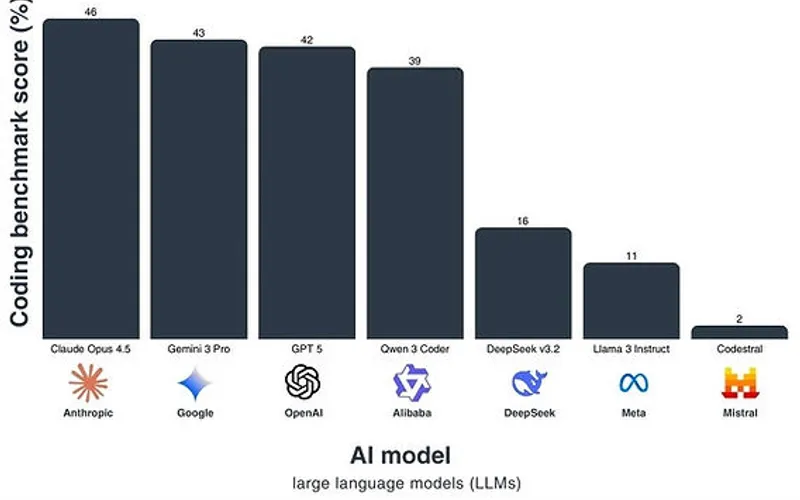

Let's cut through the PR speak. OpenAI just admitted that SWE-bench Verified, the coding benchmark everyone's been obsessing over, is dead weight. And they're right. Its saturation isn't a problem; it's proof the problem was never that hard to begin with.

The real hot take? We've been measuring the wrong thing. For two years, the AI industry circlejerked around "can it solve GitHub issues?" Like that's the ceiling of what software engineering requires. It's not. It's table stakes now. A freshman intern with GitHub Copilot can do it.

What actually matters, the stuff that separates frontier from commodity, is reasoning under uncertainty, architectural decision-making, understanding legacy codebases without documentation, and knowing when to refactor versus patch. These aren't coding tasks. They're judgment tasks. And we have almost zero benchmarks for them because they're hard to quantify.

The business signal here is brutal for the AI-dev-tools space: if you're still selling "writes code faster," you're selling yesterday's feature. The founders who are going to win are the ones building for the next layer up: tools that help engineers think through problems, not just autocomplete them.

What concerns me: OpenAI's pivot toward "complex reasoning" is vague. They haven't actually defined what they're measuring next. That's either because they're being cautious, or because they don't have a good answer yet. Probably both.

The 229 points on Hacker News and the measured commentary there suggest the community sees this as a watershed moment. It is. Just maybe not the way OpenAI wants you to frame it.

Stay sharp. — Max Signal