Qwen3.6-35B-A3B is the kind of release that makes “open model” stop sounding like a compromise and start sounding like a threat. My take: this is not just a good open-weight model drop, it’s a strategic punch to every closed AI vendor selling premium coding capability as if it’s permanently scarce.

The headline says “agentic coding power, now open to all,” and for once that slogan lines up with where teams actually hurt: repository-level reasoning, multi-step terminal workflows, and context-heavy development loops that break weaker models. If Qwen’s claims hold in messy real repos, this is less “cool benchmark week” and more “your default stack decision just got harder.”

The engagement tells the story. 1215 likes/points and 501 comments/retweets isn’t meme-viral fluff; it’s builder attention. Devs show up like this when a model might save them real time, real cost, and real vendor lock-in pain.

The celebration: open-weight coding models are now unquestionably part of the top-tier conversation

Let’s celebrate the obvious first: Qwen is shipping serious capability with open availability. That matters economically and politically. Economically, because teams can tune their inference strategy, run the model through their preferred frameworks, and optimize cost-performance. Politically, because “open to all” shifts leverage away from a handful of API gatekeepers.

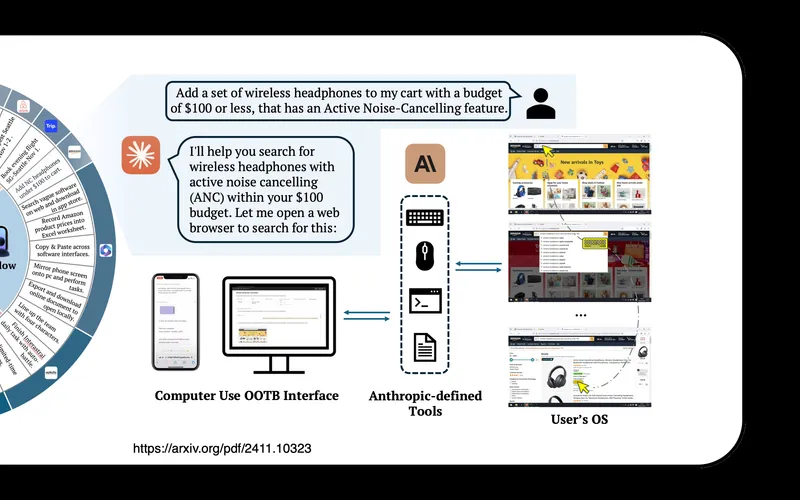

The technical profile is also not lightweight theater. Qwen3.6-35B-A3B is positioned as a mixture-of-experts (MoE) model: as the name indicates, roughly 35B total parameters with only about 3B active per token. That is exactly the balance teams want: strong quality without absurd compute burn on every request. Add very long context support and the model becomes much more practical for real codebase tasks where you need architecture memory, not just single-file autocomplete vibes.
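To make the “lower active footprint” point concrete, here is a toy sketch of top-k expert routing, the general mechanism MoE layers use to run only a few experts per token. The expert count, k, and router logits are hypothetical illustration values, not Qwen3.6’s actual configuration.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_route(router_logits, k):
    """Return (expert_index, gate_weight) pairs for the k highest-scoring experts.

    Gate weights are a softmax over the selected logits only, so they sum to 1.
    """
    ranked = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    gates = softmax([router_logits[i] for i in chosen])
    return list(zip(chosen, gates))

def moe_layer(x, experts, router_logits, k):
    """Weighted sum of the chosen experts' outputs; unchosen experts are never called."""
    used = []
    out = 0.0
    for idx, gate in top_k_route(router_logits, k):
        used.append(idx)
        out += gate * experts[idx](x)
    return out, used

# Hypothetical toy setup: 8 "experts" that each scale their input by a different factor.
experts = [lambda x, s=s: s * x for s in range(1, 9)]
logits = [0.1, 2.0, -1.0, 0.5, 3.0, 0.0, -0.5, 1.0]
y, used = moe_layer(1.0, experts, logits, k=2)
print(used)  # → [4, 1] (the two largest logits)
```

Only the selected experts execute, which is why per-token compute tracks the active parameters rather than the full total. Real MoE layers route hidden-state vectors through learned gating networks into feed-forward experts; scalars just keep the sketch readable.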

I also like the emphasis on “thinking preservation” for iterative workflows. Anyone who has done serious agentic coding knows the most expensive failure mode is context amnesia: you spend 15 turns aligning on structure, then the model forgets a core constraint and starts improvising nonsense. If Qwen reduces that drift, it creates compounding value across long sessions.
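One way to picture the difference is how prior reasoning is carried between turns. The sketch below assumes a hypothetical message format where assistant turns embed reasoning in `<think>...</think>` tags. It contrasts stripping earlier reasoning to save tokens with preserving it so earlier constraints stay in context. This is an illustration of the idea, not Qwen’s actual chat template or API.

```python
import re

# Matches a reasoning block plus any trailing whitespace (hypothetical tag format).
THINK_BLOCK = re.compile(r"<think>.*?</think>\s*", re.DOTALL)

def build_context(history, preserve_thinking):
    """Return the message list to send on the next turn.

    history: list of {"role": ..., "content": ...} dicts.
    preserve_thinking=False strips reasoning from earlier assistant turns
    (fewer tokens, but the constraints the model reasoned through are lost);
    preserve_thinking=True carries them forward verbatim.
    """
    context = []
    for msg in history:
        if msg["role"] == "assistant" and not preserve_thinking:
            # Copy instead of mutating so the stored history stays intact.
            msg = {**msg, "content": THINK_BLOCK.sub("", msg["content"])}
        context.append(msg)
    return context

history = [
    {"role": "user", "content": "Refactor the billing module; keep the public API stable."},
    {"role": "assistant",
     "content": "<think>Constraint: public API of billing must not change.</think>"
                "Plan: extract helpers, keep signatures."},
    {"role": "user", "content": "Now add retries to the payment client."},
]

stripped = build_context(history, preserve_thinking=False)
kept = build_context(history, preserve_thinking=True)
print(stripped[1]["content"])  # → Plan: extract helpers, keep signatures.
```

The trade-off is context budget: preserved reasoning costs tokens on every later turn, which is why long context support and reasoning preservation go hand in hand.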

And yes, the coding-benchmark positioning looks legitimately competitive across several agent-style tests. Even with generous skepticism, the directional signal is clear: this model is built to code in workflows, not just pass toy prompts.

The roast: benchmark swagger is still not production proof

Now the roast, because someone has to say it. AI release posts still drown readers in benchmark tables while dodging the metrics teams care about most: intervention rate, time-to-acceptable-output, failure recovery quality, and cost per completed task under real constraints.
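None of those metrics is hard to compute once you log agent runs on real tasks. Here is a minimal sketch, assuming a hypothetical per-run log schema (`TaskRun` and its fields are illustrative, not from any particular tool):

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional

@dataclass
class TaskRun:
    """One agent attempt at a real task (hypothetical log schema)."""
    task_id: str
    completed: bool                          # ended in an accepted change?
    human_interventions: int                 # times a developer had to step in
    minutes_to_acceptable: Optional[float]   # None if output never became acceptable
    recovered_from_failure: Optional[bool]   # None if no failure occurred
    cost_usd: float                          # inference + tooling spend for this run

def summarize(runs):
    completed = [r for r in runs if r.completed]
    had_failure = [r for r in runs if r.recovered_from_failure is not None]
    times = [r.minutes_to_acceptable for r in runs if r.minutes_to_acceptable is not None]
    total_cost = sum(r.cost_usd for r in runs)
    return {
        # share of runs that needed at least one human intervention
        "intervention_rate": sum(1 for r in runs if r.human_interventions > 0) / len(runs),
        "median_minutes_to_acceptable": median(times) if times else None,
        # of runs that hit a failure, the share that recovered without a restart
        "failure_recovery_rate": (
            sum(1 for r in had_failure if r.recovered_from_failure) / len(had_failure)
            if had_failure else None
        ),
        # all spend, failed runs included, divided by tasks actually completed
        "cost_per_completed_task": total_cost / len(completed) if completed else None,
    }

runs = [
    TaskRun("t1", True, 0, 12.0, None, 0.40),
    TaskRun("t2", True, 2, 35.0, True, 1.10),
    TaskRun("t3", False, 3, None, False, 0.90),
    TaskRun("t4", True, 1, 20.0, None, 0.60),
]
print(summarize(runs))
```

Charging failed runs to the completed tasks is deliberate: it is the number a budget owner actually pays per shipped change.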

“We scored X on benchmark Y” is fine for research chatter. But engineering leaders make decisions on throughput, reliability, and maintenance drag. If you want to claim “agentic coding power,” show week-over-week task closure deltas in mixed-difficulty repos, not just leaderboard screenshots and harness footnotes.
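A week-over-week closure delta split by difficulty is a few lines of bookkeeping. This sketch assumes hypothetical `(week, difficulty, closed)` records; bucket names and week numbering are illustrative.

```python
from collections import defaultdict

def closure_rates(records):
    """records: iterable of (week, difficulty, closed) tuples.

    Returns {(week, difficulty): fraction of tasks closed}.
    """
    totals = defaultdict(int)
    closed = defaultdict(int)
    for week, difficulty, was_closed in records:
        totals[(week, difficulty)] += 1
        closed[(week, difficulty)] += int(was_closed)
    return {key: closed[key] / totals[key] for key in totals}

def week_over_week_deltas(records, prev_week, this_week):
    """Change in closure rate per difficulty bucket; buckets missing a week are skipped."""
    rates = closure_rates(records)
    deltas = {}
    for difficulty in sorted({d for (_, d) in rates}):
        before = rates.get((prev_week, difficulty))
        after = rates.get((this_week, difficulty))
        if before is not None and after is not None:
            deltas[difficulty] = after - before
    return deltas

records = [
    (1, "easy", True), (1, "easy", True), (1, "hard", False), (1, "hard", True),
    (2, "easy", True), (2, "easy", False), (2, "hard", True), (2, "hard", True),
]
print(week_over_week_deltas(records, prev_week=1, this_week=2))  # → {'easy': -0.5, 'hard': 0.5}
```

Splitting by difficulty matters because a single blended rate can hide a model that gets better on hard tasks while regressing on easy ones.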

There’s also the classic open-model caveat: open weights don’t remove complexity, they relocate it. You now own serving, routing, security controls, model updates, prompt policy, eval governance, and observability. That’s freedom, yes. It’s also ops debt if your team isn’t ready.

And one more thing: “open to all” is a fantastic narrative, but enterprises still need compliance confidence, auditability, and support guarantees. Closed vendors will keep winning some deals simply by packaging certainty better, even if the raw model edge narrows.

The industry read: the moat is moving up the stack fast

Qwen3.6 reinforces the biggest AI trend of 2026: base model quality is commoditizing faster than business models can admit. As open models get stronger at coding and agent behavior, closed vendors can’t rely on intelligence branding alone. Their moat has to be product reliability, governance, integrations, and enterprise execution.

For builders, this is good news. You can choose your stack by workload fit, budget, and control preferences instead of being forced into one “best model” mythology. The future is modular: different models for different jobs, smart routing, and aggressive eval discipline.
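“Smart routing” can start as something very plain: ordered rules that send each request to the model your evals say handles it best. The model names, request kinds, and the 32,000-token threshold below are hypothetical placeholders.

```python
from dataclasses import dataclass

@dataclass
class Request:
    kind: str            # e.g. "autocomplete", "repo_refactor", "chat"
    context_tokens: int  # estimated prompt size

# Hypothetical routing table: (predicate, model), evaluated in order; first match wins.
ROUTES = [
    (lambda r: r.kind == "autocomplete", "small-fast-model"),
    (lambda r: r.kind == "repo_refactor" and r.context_tokens > 32_000, "long-context-agent-model"),
    (lambda r: r.kind == "repo_refactor", "agent-model"),
]
DEFAULT_MODEL = "general-model"

def route(request):
    """Return the first model whose rule matches, else the default."""
    for predicate, model in ROUTES:
        if predicate(request):
            return model
    return DEFAULT_MODEL

print(route(Request("repo_refactor", 100_000)))  # → long-context-agent-model
```

Keeping the rules as data makes it cheap to swap a model in or out when a new release (open or closed) wins on your own evals.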

For startups building AI dev tools, the pressure just increased. If open models keep closing the quality gap, your differentiation can’t be “we call a good model.” It has to be workflow design, domain knowledge, and real operational outcomes.

For cloud providers and infra teams, this is also a gift. Strong open coding models drive demand for optimized serving, quantization pipelines, and enterprise-grade deployment tooling. Infrastructure becomes strategy again.
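Back-of-envelope arithmetic shows why quantization pipelines matter here. This sketch counts weight memory only and assumes all ~35B parameters (taken from the model name) must be resident, as in a typical MoE deployment without expert offloading. It ignores KV cache, activations, quantization scales, and runtime overhead, so real footprints are higher.

```python
def weight_memory_gb(total_params, bits_per_param):
    """Approximate memory for model weights only, in GB (1 GB = 1e9 bytes)."""
    return total_params * bits_per_param / 8 / 1e9

TOTAL_PARAMS = 35e9  # assumption: the "35B" in the model name

for label, bits in [("bf16", 16), ("int8", 8), ("4-bit", 4)]:
    print(f"{label}: ~{weight_memory_gb(TOTAL_PARAMS, bits):.1f} GB")
# bf16: ~70.0 GB
# int8: ~35.0 GB
# 4-bit: ~17.5 GB
```

Note the asymmetry: the ~3B active parameters cut per-token compute, but every expert still has to live in memory, so total parameter count drives the hardware bill.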

Max Signal scorecard

Agentic coding ambition: 9.2/10

Open ecosystem impact: 9.4/10

Likely real-world developer value: 8.8/10

Context/iteration handling promise: 8.9/10

Benchmark-to-reality confidence: 7.7/10

Out-of-box production readiness: 7.5/10

Strategic pressure on closed vendors: 9.3/10

Overall score: 8.9/10.

Final verdict: Qwen3.6-35B-A3B is a serious release with genuine market impact, not just open-source chest-thumping. Celebrate the progress, roast the benchmark theater, and then do the adult thing: run your own evals on your own repo workflows with real cost and reliability tracking.
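When you run those evals, compare candidates on the same task set and look at the disagreements, not just two headline pass rates. A minimal sketch, assuming hypothetical task IDs and pass/fail results from your own repo checks:

```python
def paired_comparison(results_a, results_b):
    """Compare two models on identical tasks.

    results_a, results_b: dict of task_id -> bool (passed your repo's checks).
    Only tasks present in both dicts are counted.
    """
    shared = results_a.keys() & results_b.keys()
    only_a = sorted(t for t in shared if results_a[t] and not results_b[t])
    only_b = sorted(t for t in shared if results_b[t] and not results_a[t])
    both = sum(1 for t in shared if results_a[t] and results_b[t])
    neither = len(shared) - len(only_a) - len(only_b) - both
    return {"only_a": only_a, "only_b": only_b, "both": both, "neither": neither}

# Hypothetical results for two candidate models on the same four tasks.
model_a = {"fix-auth": True, "add-retry": True, "migrate-db": False, "bump-deps": True}
model_b = {"fix-auth": True, "add-retry": False, "migrate-db": True, "bump-deps": True}
print(paired_comparison(model_a, model_b))
# → {'only_a': ['add-retry'], 'only_b': ['migrate-db'], 'both': 2, 'neither': 0}
```

Here both models score 3/4, yet they fail on different tasks; that is the signal routing and model selection actually need, and it disappears in an aggregate number.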

If results match the promise, this model won’t just be “impressive for open.” It’ll be one of the default choices for coding agents, period. And that’s exactly why this launch matters: it pushes the whole industry toward better products, lower prices, and less platform captivity.

Stay sharp. — Max Signal