Qwen just announced a new model called Qwen3.6-35B-A3B and framed it as “agentic coding power, now open to all.” In plain English, they are saying: “We built a coding-focused AI that can do more than autocomplete, and we’re making it broadly accessible so developers can actually use it in real workflows.”

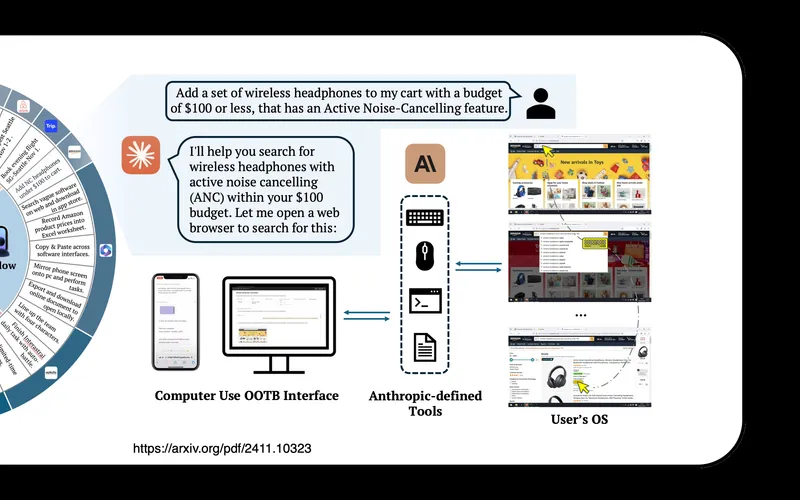

The key phrase is agentic coding. That means the model is supposed to handle multi-step work like planning, writing code, checking errors, revising, and continuing until a task is actually done. Older AI coding tools often look smart for one prompt, then fall apart when a project gets messy. The whole point of this release is to push beyond one-shot answers and into longer software tasks.

The other key phrase is open to all. Whether that means open weights, broad public access, or fewer restrictions than top closed models, the message is the same: they want this to be used widely, not treated like a tightly gated premium lab demo.

What happened

Qwen positioned this model as a coding specialist in the middle of a very competitive AI coding race. Right now, everyone is fighting to become the default engine behind AI dev tools: chat assistants, IDE copilots, code review bots, debugging agents, and autonomous coding pipelines.

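A model name like “35B-A3B” signals architecture and efficiency choices. In Qwen’s earlier releases, this pattern has meant a mixture-of-experts design: roughly 35 billion parameters in total, with only about 3 billion active for any given token. You don’t need to care about the exact internals to understand the practical point: vendors are trying to deliver stronger coding performance at lower runtime cost so developers can use these systems continuously, not just occasionally.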
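To put rough numbers on that cost point, here is a minimal Python sketch. It assumes the name follows the convention Qwen has used for earlier mixture-of-experts models, where the first figure is the total parameter count and the “A” figure is the count active per token. The helper `parse_size_tag` is hypothetical, and the figures are nominal sizes read off the name, not measured values.

```python
import re


def parse_size_tag(model_name):
    """Return (total_billions, active_billions, active_fraction).

    Assumption: the name contains a tag shaped like '35B-A3B', read as
    'total parameters' followed by 'active parameters per token'.
    """
    match = re.search(r"(\d+(?:\.\d+)?)B-A(\d+(?:\.\d+)?)B", model_name)
    if match is None:
        raise ValueError(f"no size tag found in {model_name!r}")
    total_b = float(match.group(1))
    active_b = float(match.group(2))
    return total_b, active_b, active_b / total_b


total_b, active_b, fraction = parse_size_tag("Qwen3.6-35B-A3B")
print(f"{active_b:g}B of {total_b:g}B parameters active per token ({fraction:.1%})")
```

Under that reading, fewer than one in ten parameters does work on any given token, which is the sort of trade that can make a large model cheaper to run than its headline size suggests.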

That cost-performance balance matters a lot. A model can be brilliant, but if it is too expensive or too slow for daily use, teams won’t deploy it deeply. Releases like this are about making coding AI useful enough, cheap enough, and available enough to move from experiments into routine engineering work.

Why this matters

This matters because software development is shifting from “AI helps me write snippets” to “AI helps me complete chunks of work.” That is a huge difference.

In the old pattern, a developer asks for a function, copies it, fixes it, and moves on. In the newer agentic pattern, the model can do sequences: read a task, inspect files, propose changes, run tests, fix failing tests, and summarize what changed. When that loop works reliably, teams ship faster.
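The loop described above can be sketched in a few lines of Python. This is an illustration of the general pattern, not Qwen's implementation: `run_agent_loop`, `CheckResult`, `AgentReport`, and the stand-in `fake_model` and `fake_tests` functions are all hypothetical names, and a real system would call an actual model and an actual test runner where the stubs are.

```python
from dataclasses import dataclass, field


@dataclass
class CheckResult:
    passed: bool
    log: str = ""


@dataclass
class AgentReport:
    succeeded: bool
    attempts: int
    files: dict
    history: list = field(default_factory=list)


def run_agent_loop(task, files, propose_changes, run_tests, max_attempts=3):
    """Propose changes, run the tests, feed failures back, and repeat."""
    feedback = ""
    history = []
    for attempt in range(1, max_attempts + 1):
        files = propose_changes(task, files, feedback)  # model edits the files
        result = run_tests(files)                       # check the edit
        history.append(f"attempt {attempt}: {'pass' if result.passed else 'fail'}")
        if result.passed:
            return AgentReport(True, attempt, files, history)
        feedback = result.log                           # failure log drives the next try
    return AgentReport(False, max_attempts, files, history)


# Stand-in for a model: writes a bug first, then corrects it once it sees feedback.
def fake_model(task, files, feedback):
    body = "return a + b" if feedback else "return a - b"
    return {**files, "add.py": f"def add(a, b):\n    {body}\n"}


# Stand-in for a test suite: loads the generated code and checks one case.
def fake_tests(files):
    namespace = {}
    exec(files["add.py"], namespace)
    ok = namespace["add"](2, 3) == 5
    return CheckResult(ok, "" if ok else "add(2, 3) returned the wrong value")


report = run_agent_loop("implement add(a, b)", {}, fake_model, fake_tests)
print(report.history)
```

The `max_attempts` cap is a deliberate choice in this sketch: a loop that retries forever can burn time and money, so it stops after a fixed number of tries and reports the failure for a human to pick up.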

It also matters because broader access increases competition. If capable coding models are not locked inside one or two premium ecosystems, startups and open-source teams can build better tools without massive AI budgets. That puts pressure on pricing and speeds up innovation across the board.

For businesses, this can reduce development bottlenecks. A small team can prototype faster, automate repetitive coding tasks, and spend more time on product decisions instead of boilerplate. For enterprises, it creates optionality: they can mix vendors, host where needed, and avoid single-provider lock-in.

What this means for regular people

Even if you never write code, this affects your daily life. Better coding models usually lead to faster product updates, quicker bug fixes, and more software features released by smaller teams. That means apps improve faster, but it can also mean more rapid UI changes and occasional instability as teams ship more aggressively.

You may also notice more “AI-native” products from small companies that previously couldn’t afford large engineering teams. If coding and debugging get cheaper, more people can build useful software. That is generally good for consumers because it increases choice.

At work, this trend means many non-engineering roles will collaborate with coding agents indirectly. Product managers, analysts, operations leads, and marketers may increasingly describe workflows in plain language and have AI-powered systems generate internal tools or automations. The line between “technical” and “non-technical” work keeps blurring.

The reality check

There are real limits. “Agentic coding” does not mean “always correct.” Models still make subtle mistakes, especially around security, edge cases, and architecture decisions. A system that can produce lots of code quickly can also produce lots of technical debt quickly if no one reviews it properly.

So the practical rule is simple: use AI to accelerate implementation, but keep humans accountable for correctness and risk. Strong teams will use these models with tests, code review gates, and clear ownership. Weak teams will over-automate and pay for it later in outages and rewrites.

Another reality: open access can be a double-edged sword. Wider availability helps legitimate builders, but it can also lower barriers for abuse. Expect ongoing debates about safeguards, licensing terms, and responsible deployment.

Bottom line

The Qwen3.6-35B-A3B announcement is part of a bigger shift in AI: coding models are becoming less like chatbots and more like autonomous work engines. What happened is straightforward: Qwen launched a coding-focused model and emphasized broad accessibility. Why it matters is competition, cost, and real workflow utility. What it means for regular people is faster software cycles, more tools from smaller teams, and a world where AI increasingly helps build the digital products you use every day.

If you want the shortest takeaway: this is another step toward AI that doesn’t just suggest code, but helps get real software shipped.

Now you know more than 99% of people. — Sara Plaintext