OK so here’s what’s actually going on with Claude Opus 4.7 vs 4.6.

Anthropic basically dropped a “.1” version number and snuck in a full brain transplant.

Everyone’s arguing about OpenAI’s GPT-5.4 and Google’s Gemini 3.1 Pro, and Anthropic just pulled the “actually we’re ahead on the hard stuff now” move.

1. Coding: Opus 4.7 is now the sweatiest try-hard in the room

On paper: Opus 4.7 now beats GPT-5.4 and Gemini 3.1 Pro on agentic coding benchmarks.

Translation to normal life: this isn’t “write a LeetCode function” land. This is “here’s a messy repo, a vague Jira ticket, three tools, and a dream — go ship the feature.”

“Agentic coding” = the model doesn’t just spit out a function, it:

- Calls tools (like a CLI, browser, or test runner)

- Reads existing code and config instead of hallucinating

- Iterates when something breaks instead of silently dying
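To make that loop concrete, here’s a toy sketch in Python. Everything in it is made up for illustration: `FakeRepo`, `scripted_model`, and the two tools are hypothetical stand-ins, and a real agent would put an actual model call where `scripted_model` sits. It only shows the shape of the loop: pick a tool, run it, feed the result back, repeat until done.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class FakeRepo:
    """Hypothetical repo whose tests fail until a patch is applied."""
    patched: bool = False

    def run_tests(self) -> str:
        # Tool 1: a pretend test runner.
        return "PASS" if self.patched else "FAIL: test_retry_path"

    def apply_patch(self) -> str:
        # Tool 2: a pretend code edit that fixes the failing test.
        self.patched = True
        return "patched worker.py"


def scripted_model(history: list[str]) -> str:
    """Stand-in for the LLM: chooses the next action from the last observation."""
    if not history:
        return "run_tests"  # look at the current state before touching anything
    last = history[-1]
    if last.startswith("FAIL"):
        return "apply_patch"  # something broke: try a fix instead of giving up
    if last.startswith("patched"):
        return "run_tests"  # verify the fix
    return "done"


def agent_loop(repo: FakeRepo, max_steps: int = 10) -> list[str]:
    """Call tools chosen by the 'model' until it says done or max_steps runs out."""
    tools: dict[str, Callable[[], str]] = {
        "run_tests": repo.run_tests,
        "apply_patch": repo.apply_patch,
    }
    history: list[str] = []
    for _ in range(max_steps):
        action = scripted_model(history)
        if action == "done":
            break
        # Run the tool and feed its output back into the next decision.
        history.append(tools[action]())
    return history


print(agent_loop(FakeRepo()))
```

That prints `['FAIL: test_retry_path', 'patched worker.py', 'PASS']`: fail, fix, re-test. The `max_steps` cap is the boring-but-important part, since it’s what stops a confused agent from looping forever.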

On those benchmarks, Anthropic is flexing: Opus 4.7 is now top of the pile against the current OpenAI / Google flagships.

If 4.6 felt like a sharp junior dev, 4.7 is more like that mid-level engineer who can finally own a feature end-to-end without you hovering in VS Code Live Share.

So if you’re doing:

- Big refactors (multi-file changes, framework upgrades)

- Agent-style coding (auto-PR bots, CI helpers, internal dev copilots)

- Complex tool-using workflows (SQL + API calls + tests)

4.7 is a legit upgrade. This is where the “beats GPT-5.4 + Gemini 3.1 Pro” actually shows up in real work.

2. Vision: 3x bigger images means screenshots finally matter

Old Claude: anything beyond small-ish images and it’s like “uhh I see… some pixels? maybe a box?”

New Claude Opus 4.7: supports images up to 2,576 pixels on the long edge. That’s 3x the old limit.

This is way more important than it sounds.

Because now you can actually throw it:

- Full page app screenshots (with readable text and layout)

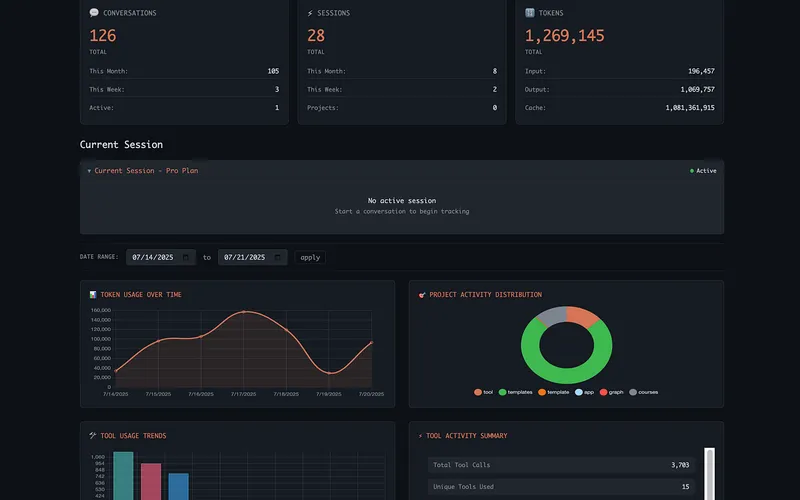

- Complicated dashboards (Grafana, Datadog, Looker, whatever)

- Technical diagrams (cloud architecture, system block diagrams, UML)

- Dense slides from that one coworker who hates white space

Instead of “guessing what that tiny blurry text says,” it can actually read it and reason about it.

This means real workflows like:

- “Here’s a screenshot of our 500 error in production, tell me the likely root cause.”

- “Here’s a network diagram, what’s wrong with this security model?”

- “Here’s the Figma screenshot, generate Tailwind code for this layout.”

With 4.6, a lot of that was vibes. With 4.7, the pixels are finally big enough that vision is actually usable for serious stuff, not just meme classification.
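If you’re batch-feeding it screenshots, it helps to know up front whether an image fits under that 2,576-pixel long edge. A minimal sketch, assuming you’d rather downscale on your side (keeping the aspect ratio) than rely on however the API handles oversized uploads; `fit_to_long_edge` is a hypothetical helper, not part of any SDK:

```python
MAX_LONG_EDGE = 2576  # Opus 4.7's long-edge limit, per the numbers above


def fit_to_long_edge(width: int, height: int, max_edge: int = MAX_LONG_EDGE) -> tuple[int, int]:
    """Return (width, height) scaled down so the longer side is at most max_edge.

    Aspect ratio is preserved, and images that already fit are never upscaled.
    """
    if width <= 0 or height <= 0:
        raise ValueError("image dimensions must be positive")
    long_edge = max(width, height)
    if long_edge <= max_edge:
        return width, height
    scale = max_edge / long_edge
    # Round to whole pixels, never collapsing a side to 0.
    return max(1, round(width * scale)), max(1, round(height * scale))


print(fit_to_long_edge(5152, 2000))  # a very wide dashboard screenshot
```

That prints `(2576, 1000)`, while a 1920×1080 capture comes back unchanged. If you already use Pillow, `Image.thumbnail((2576, 2576))` does the same fit-inside-a-box resize in place.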

3. The tokenizer got “honest” (and your bill might notice)

This one’s sneaky but huge.

Claude Opus 4.7 uses a new tokenizer that turns the same text into 1.0–1.35x as many tokens as 4.6 does.

So you paste the exact same prompt into 4.7 and 4.6, and 4.7 might say “that’s 30% more tokens, thanks.”

Why? The new tokenizer is supposedly more precise / better aligned with the real structure of language, but… the meter is still running: you’re billed per token, so more tokens means a bigger bill.

Combine that with this fun detail: at higher effort levels, Opus 4.7 tends to “think harder” and output more tokens than 4.6.

Double whammy:

- The same input can count as up to 35% more tokens

- Outputs can be longer if you crank the effort

Pricing per million tokens did not change (still $5 input / $25 output), but:

If you switch 4.6 → 4.7 and change nothing else, your monthly bill can still go up.
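Here’s that back-of-the-envelope math as a tiny Python helper. The traffic numbers (200M input / 20M output tokens a month) are made up for illustration; the $5 / $25 rates are the list prices above, and 1.35 is the worst-case end of the tokenizer range. Any output growth from higher effort is just a multiplier you’d have to measure yourself.

```python
INPUT_PRICE_PER_M = 5.00    # USD per million input tokens (list price)
OUTPUT_PRICE_PER_M = 25.00  # USD per million output tokens (list price)


def monthly_cost(input_tokens: float, output_tokens: float,
                 input_multiplier: float = 1.0,
                 output_multiplier: float = 1.0) -> float:
    """Monthly USD cost, optionally inflating token counts by the given multipliers."""
    billed_input = input_tokens * input_multiplier
    billed_output = output_tokens * output_multiplier
    return (billed_input * INPUT_PRICE_PER_M
            + billed_output * OUTPUT_PRICE_PER_M) / 1_000_000


# Hypothetical workload: 200M input + 20M output tokens per month, measured on 4.6.
on_4_6 = monthly_cost(200e6, 20e6)
worst_case_4_7 = monthly_cost(200e6, 20e6, input_multiplier=1.35)
print(f"4.6: ${on_4_6:,.0f}  4.7 worst case: ${worst_case_4_7:,.0f}")
```

Same prompts, same price sheet, and the bill goes from $1,500 to about $1,850, roughly 23% more. Pass an `output_multiplier` too if your effort settings make responses longer.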

So if you’re cost-sensitive:

- Monitor token usage for a week after switching

- Shorten boilerplate prompts (system messages, instructions)

- Use lower effort levels by default and reserve xhigh/max for hard tasks
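For the “monitor token usage” step, the cleanest comparison is paired: the same prompts, counted on both models. A minimal sketch, assuming you’ve already collected those counts (for example from the `input_tokens` number the API reports in each response’s usage block); `tokenizer_ratios` is a hypothetical helper and the sample numbers are invented, not measurements:

```python
from statistics import mean


def tokenizer_ratios(pairs: list[tuple[int, int]]) -> dict[str, float]:
    """Summarize new/old token ratios for the *same* prompts.

    Each pair is (tokens_on_4_6, tokens_on_4_7). Pairs with a zero old count are skipped.
    """
    ratios = [new / old for old, new in pairs if old > 0]
    if not ratios:
        raise ValueError("no usable prompt pairs")
    return {"min": min(ratios), "mean": mean(ratios), "max": max(ratios)}


# Invented sample: three prompts counted under both tokenizers.
sample = [(1000, 1000), (800, 1000), (2000, 2700)]
print(tokenizer_ratios(sample))
```

The mean is the number that matters for your bill; the min and max tell you whether some prompt types (code, non-English text, JSON blobs) get hit much harder than others.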

4. New “xhigh” effort level: the medium-well steak setting

Anthropic already had effort levels (low / medium / high / max) controlling how hard the model “thinks” vs how long it takes.

Opus 4.7 adds a new tier: xhigh, sitting between “high” and “max.”

Use this mental model:

- High: smart enough for most coding, writing, planning

- xhigh: “this is serious, but not ‘wait 2x longer and pay 2x more’ serious”

- Max: “bring every neuron you’ve got, I don’t care how long it takes”

Where xhigh makes sense:

- Hard algorithm / math tasks that aren’t mission-critical

- Non-trivial architecture and system design reviews

- Complex financial or legal reasoning where you want fewer dumb mistakes

It’s like telling the model: “Do a deep think, but don’t write a dissertation.”

If you were scared to use “max” because of latency and cost, xhigh is the new sweet spot for hard-but-not-insane problems.
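One way to bake “lower by default, escalate for the hard stuff” into code is a small routing table. This is a hypothetical sketch: the task labels and the `pick_effort` helper are made up, and it only returns the tier name. Check Anthropic’s API docs for how the effort setting is actually passed on a request.

```python
from enum import IntEnum


class Effort(IntEnum):
    """Effort tiers, ordered from fastest/cheapest to most thorough."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    XHIGH = 4
    MAX = 5


# Hypothetical task labels mapped to the tier each one usually deserves.
ROUTES = {
    "chat": Effort.LOW,
    "coding": Effort.HIGH,
    "architecture_review": Effort.XHIGH,
    "hard_math": Effort.XHIGH,
    "mission_critical": Effort.MAX,
}


def pick_effort(task: str, default: Effort = Effort.MEDIUM,
                cap: Effort = Effort.XHIGH) -> str:
    """Look up a task's tier, falling back to `default`, and never exceed `cap`.

    Capping at XHIGH keeps MAX opt-in: callers must raise the cap explicitly.
    """
    level = ROUTES.get(task, default)
    return min(level, cap).name.lower()


print(pick_effort("coding"), pick_effort("mission_critical"))
```

That prints `high xhigh`. With the default cap, even `mission_critical` stops at xhigh; pass `cap=Effort.MAX` for the rare job that truly earns it.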

5. /ultrareview in Claude Code: fake senior engineer, real value

Inside Claude Code, Opus 4.7 gets a new superpower: the /ultrareview command.

This is Anthropic’s attempt to simulate a senior human code reviewer.

Not just “style nit” comments, but:

- Subtle design flaws (“This abstraction will bite you when you add multi-tenant support.”)

- Logic gaps (“You handle retries here, but not in the background worker path.”)

- Hidden risks (“This looks thread-safe but actually isn’t.”)

If you’re solo building, it’s like getting a decent staff engineer to sanity-check your PRs at 3 a.m. for free.

Compared to 4.6, which could review code but wasn’t tuned like this, 4.7 + /ultrareview is much more “design critique” and less “inline spellcheck.”

6. Cybersecurity: guardrails with a lawyer and a bouncer

Here’s the spicy one.

Claude Opus 4.7 is Anthropic’s first model shipped with automated systems built specifically to detect and block prohibited cybersecurity requests.

So if you try:

- “Explain how to exploit this zero-day in detail”

- “Generate payloads to bypass this specific WAF”

It’s much more likely to hard-block you than 4.6.

But to not completely nuke security research, Anthropic is pairing this with a Cyber Verification Program for legit pentesters / vuln researchers, so vetted people can still do real work without getting stonewalled.

Net effect vs 4.6:

- Safer by default for enterprises and cloud providers

- More friction for “gray area” hacking prompts if you’re not verified

If you’re a normal dev just asking “how do I secure X” or “what’s wrong with this config,” you’ll be fine. The guardrails are aimed at offensive misuse, not basic security help.

7. Pricing: same sticker, different gas mileage

On the surface, pricing is unchanged:

- $5 per million input tokens

- $25 per million output tokens

Same as Opus 4.6.

But remember the two gotchas:

- New tokenizer → 1.0–1.35x as many input tokens for the same text

- Higher effort levels → more verbose outputs

So yes, the sticker price is steady, but Opus 4.7 can absolutely be a stealth price increase if you don’t watch volume.

Anthropic’s basically saying: “We made it better, and we’re not raising list prices. But you might use more of it.”

8. The “Mythos” elephant in the room

Anthropic openly admits: Opus 4.7 is not Mythos.

Mythos is their unreleased god-tier model they keep teasing.

So 4.7 is “best you can actually buy right now,” not “peak Anthropic IQ in the lab.”

Still: it’s currently their most capable generally available model and beats the other guys on some of the hardest public benchmarks (agentic coding, tool use, computer use, financial analysis).

So… should you upgrade today?

My blunt take:

- If you do serious coding, agent workflows, or technical diagram/screenshot stuff: yes, upgrade to Opus 4.7 now. The coding + vision bump alone is worth the token hit.

- If you’re mostly doing chat, copy, basic Q&A: you can chill. 4.6 is still plenty, and 4.7’s main wins won’t change your life there.

- If you’re super cost-sensitive at scale: test 4.7 on a subset of traffic first, measure token use for a week, and maybe set effort to “medium/high” by default with xhigh for special cases.

- If you’re in security / infra / enterprise: 4.7’s cybersecurity safeguards + safer agent behavior make it the better default choice going forward.
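For the “test on a subset of traffic” route, a common pattern is a deterministic hash split, so each user consistently lands on one model and your week of token numbers isn’t muddied by people bouncing between versions. A minimal sketch; `use_new_model` is a hypothetical helper, and mapping its answer to actual model IDs is left to your own config:

```python
import hashlib


def use_new_model(user_id: str, rollout_percent: float) -> bool:
    """Return True if this user falls in the canary slice (e.g. 10.0 means 10%).

    Hashing makes the split sticky: the same user_id always gets the same answer.
    """
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # bucket in 0..9999
    return bucket < rollout_percent * 100


share = sum(use_new_model(f"user-{i}", 10.0) for i in range(10_000)) / 10_000
print(f"canary share: {share:.1%}")
```

Bump `rollout_percent` as the numbers come in; users already in the canary stay there, because their bucket never changes and the threshold only goes up.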

Verdict in one line: for builders, engineers, and anyone using Claude as an actual teammate instead of a toy, Opus 4.7 is the new default — just go in with eyes open on tokens.


Now you know more than 99% of people. — Sara Plaintext