Claude 4.7’s Tokenizer Cost Story, in Plain English

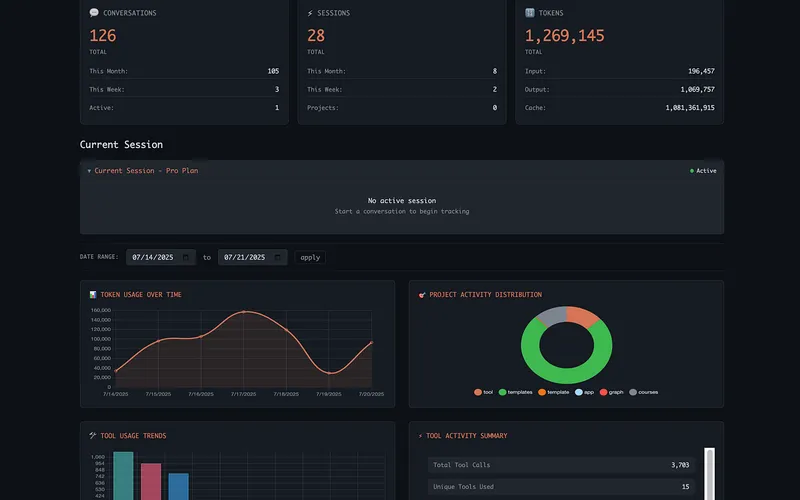

A technical write-up called “I Measured Claude 4.7’s New Tokenizer. Here’s What It Costs You.” is getting traction (518 likes/points and 351 retweets/comments) because it answers a question regular users actually care about: did Claude get more expensive to use in practice, even if the posted prices stayed the same?

Short answer: for a lot of English-heavy and code-heavy work, yes.

The author compared how Claude Opus 4.6 and 4.7 count tokens on exactly the same text. Tokens are the units AI providers use for billing and for rate limits. If the same message gets split into more tokens, you burn quota faster and can pay more for similar work.

What happened

Anthropic said Claude 4.7’s tokenizer would use roughly 1.0x to 1.35x as many tokens as 4.6. The independent test found that many real-world developer inputs land near the top of that range, and some go beyond it.

In seven “real Claude Code” samples (things like a CLAUDE.md file, prompt text, git logs, terminal output, stack traces, and code diffs), the weighted token ratio was about 1.325x. That means the same content became about 32.5% bigger in token terms.
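As a rough sketch of how a weighted ratio like this can be computed: the version below assumes "weighted" means weighted by sample size in tokens, so large samples count for more than small ones. The summary here does not spell out the author's exact method, and the token counts are hypothetical placeholders, not the article's data.

```python
def weighted_token_ratio(samples):
    """Token-weighted ratio across samples.

    samples: list of (old_tokens, new_tokens) pairs, one per text sample.
    Returns total new tokens divided by total old tokens.
    """
    total_old = sum(old for old, _ in samples)
    total_new = sum(new for _, new in samples)
    if total_old == 0:
        raise ValueError("need at least one token under the old tokenizer")
    return total_new / total_old


# Hypothetical counts for three samples (NOT the article's measurements).
samples = [(1000, 1400), (4000, 5000), (500, 600)]

weighted = weighted_token_ratio(samples)
# Plain average of per-sample ratios, shown for contrast.
unweighted = sum(new / old for old, new in samples) / len(samples)

print(f"weighted ratio:   {weighted:.3f}x")
print(f"unweighted ratio: {unweighted:.3f}x")
```

The two numbers differ because the weighted version lets the largest sample dominate, which is usually closer to what a bill reflects.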

Some examples were higher. A real CLAUDE.md sample came in around 1.445x. Technical-doc style text hit roughly 1.47x in synthetic testing. In other words, if your work involves a lot of technical writing and code-heavy context, your usage can climb more than the headline average suggests.

The article also found that Chinese and Japanese text barely changed (around 1.01x in its tests), which implies the impact is not uniform across languages and content types.

Why this matters

Most people look at the model’s posted per-million-token price and assume their cost should be stable if pricing doesn’t change. But tokenizer changes can quietly move the goalposts.

Think of it like this: the gas price per gallon stayed the same, but your car now burns more gallons to drive the same route. You didn’t change the trip, but your bill still goes up.

That matters in two ways.

First, API spend. If your prompts and context are now larger in token count, long sessions cost more. The write-up modeled an 80-turn Claude Code session and estimated a jump from around $6.65 to roughly $7.86–$8.76, depending on output size. That’s roughly 18% to 32% more for a similar workflow.
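The percentage can be checked directly from the dollar figures quoted above. This is plain arithmetic on those three numbers; it does not reproduce the write-up's session model.

```python
def percent_increase(before, after):
    """Percentage change from `before` to `after`."""
    return (after - before) / before * 100


baseline = 6.65            # estimated session cost on 4.6, in dollars
low, high = 7.86, 8.76     # estimated session cost range on 4.7, in dollars

low_pct = percent_increase(baseline, low)
high_pct = percent_increase(baseline, high)

print(f"low end:  +{low_pct:.1f}%")
print(f"high end: +{high_pct:.1f}%")
```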

Second, rate limits. Even if you are on a subscription plan and not paying per call, your quota windows can run out sooner because token budgets are still token budgets. Same work, faster drain.
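To put a number on "faster drain": if a quota window holds a fixed number of tokens and the same content now costs more tokens, the amount of content that fits shrinks by the inverse of the ratio. The sketch below assumes the limit is counted purely in tokens and that every token in a session inflates by the same ratio, which is a simplification.

```python
def capacity_remaining(token_ratio):
    """Fraction of the old workload that fits in an unchanged token budget."""
    if token_ratio <= 0:
        raise ValueError("token_ratio must be positive")
    return 1 / token_ratio


ratio = 1.325  # the weighted ratio reported for the Claude Code samples
fraction = capacity_remaining(ratio)

print(f"fits in the same window: {fraction:.1%} of the previous workload")
print(f"reduction:               {1 - fraction:.1%}")
```

Note that a 32.5% increase in tokens does not mean 32.5% less work per window. Under these assumptions it works out to roughly a quarter less.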

What Anthropic may be trading for that extra cost

Anthropic’s claim is that 4.7 follows instructions more literally, especially when prompts are precise. The idea is that a tokenizer that breaks text into smaller pieces can sometimes help the model track exact wording and constraints better.

The author tried to test that with a small benchmark slice (IFEval-style constraint-following prompts). Result: a modest strict-following improvement, roughly +5 percentage points in that sample, while looser scoring stayed flat.

So the article’s conclusion is not “this is a scam” and not “this is revolutionary.” It’s more practical: you may be paying more tokens for a real but limited improvement in strict instruction behavior.

What this means for regular people

If you are a casual Claude user writing short prompts, you might barely notice this. Your usage is too light for tokenizer differences to become dramatic.

If you are a power user with long chats, big context, lots of copied docs, and coding sessions, this is highly relevant. You may hit limits earlier and wonder why you get less done in the same time window.

If you run a business that budgets AI usage, you should not rely only on official range statements. You should test your own content mix. Legal text, docs, logs, specs, and code all tokenize differently.
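A minimal harness for testing your own content mix might look like the sketch below. The two counting functions are deliberately crude stand-ins (word count and 4-character chunks) so the example runs on its own; they are not how any Claude tokenizer works. In practice you would replace them with whatever token-counting facility your provider exposes for each model version. The sample texts and all function names are hypothetical.

```python
def ratios_by_category(corpus, count_old, count_new):
    """Return {category: new_tokens / old_tokens} for a corpus.

    corpus: dict mapping a category name to a list of text samples.
    count_old, count_new: callables that take a string and return a token count.
    """
    result = {}
    for category, texts in corpus.items():
        old = sum(count_old(t) for t in texts)
        new = sum(count_new(t) for t in texts)
        result[category] = new / old if old else float("nan")
    return result


# Stand-in counters for illustration only (NOT real tokenizers).
def count_words(text):
    return len(text.split())


def count_four_char_chunks(text):
    return -(-len(text) // 4)  # ceiling division


# Hypothetical samples; use your own logs, docs, specs, and code here.
corpus = {
    "logs": ["ERROR 2024 connection refused at db:5432"],
    "prose": ["The quick brown fox jumps over the lazy dog"],
}

ratios = ratios_by_category(corpus, count_words, count_four_char_chunks)
for category, ratio in ratios.items():
    print(f"{category}: {ratio:.2f}x")
```

Keeping the counters pluggable means the same harness can be rerun whenever a model or tokenizer changes.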

If you manage a team, this story is a reminder to measure cost in real session behavior, not just sticker pricing. The unit economics of AI are now affected by tokenizer and model design choices most users never see.

The practical takeaway

Don’t panic, but do recalibrate.

If you moved from 4.6 to 4.7 and your usage feels more expensive or your limit windows feel shorter, you’re probably not imagining it. For many English/code workflows, token counts appear materially higher.

At the same time, if 4.7 gives you cleaner execution and fewer mistakes, that can still be worth it. Paying 20% to 30% more can be a good trade if it saves retries, manual fixes, and context rewrites.

The smart move is simple: run side-by-side tests on your own real prompts, logs, and docs for a week. Track cost per finished task, not cost per token in isolation.
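Here is a small sketch of what "cost per finished task" looks like as a metric. The weekly figures are invented for illustration and do not come from the article or from any real measurement.

```python
def cost_per_finished_task(total_cost, finished_tasks):
    """Total spend divided by the number of tasks actually completed."""
    if finished_tasks <= 0:
        raise ValueError("finished_tasks must be positive")
    return total_cost / finished_tasks


# Hypothetical week on each model (made-up numbers).
week_on_old = {"cost": 100.0, "finished": 40}
week_on_new = {"cost": 125.0, "finished": 55}

old_cpt = cost_per_finished_task(week_on_old["cost"], week_on_old["finished"])
new_cpt = cost_per_finished_task(week_on_new["cost"], week_on_new["finished"])

print(f"old model: ${old_cpt:.2f} per finished task")
print(f"new model: ${new_cpt:.2f} per finished task")
```

In this invented scenario the total bill is 25% higher, yet each finished task is cheaper because fewer attempts are wasted. Your own numbers may point the other way, which is the reason to measure.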

That’s really the heart of this story. The posted price didn’t change, but the effective cost of getting work done may have. For anyone budgeting AI usage in 2026, that distinction is the one that matters.

Now you know more than 99% of people. — Sara Plaintext