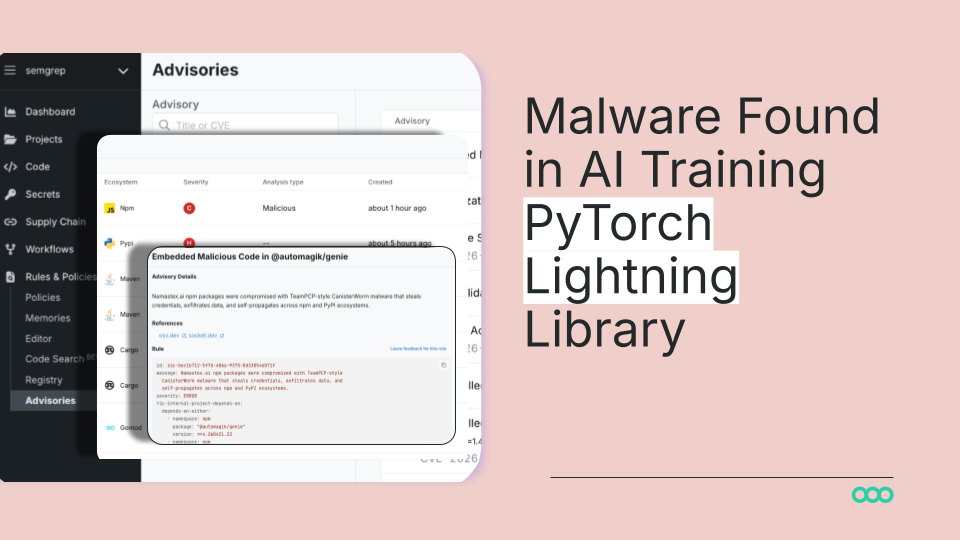

Malicious Code in PyTorch Lightning: What Happened and Why It Matters

What Happened

Security researchers discovered malicious code embedded in PyTorch Lightning, a critical AI training library used by thousands of machine learning engineers worldwide. The compromise appears to have occurred through either a compromised dependency or a hijacked maintainer account, allowing attackers to inject malware into the codebase. This malware, which carries a Shai-Hulud theme, could execute arbitrary code on any system that installed the affected version of the library.

PyTorch Lightning serves as a standard abstraction layer built on top of PyTorch, one of the most popular deep learning frameworks. It simplifies the process of training machine learning models by handling boilerplate code and providing a clean interface for researchers and engineers. Because of its widespread adoption, compromising this single library creates a cascade effect across the entire AI development ecosystem.

The malware was discovered before widespread exploitation, but the timeline and scope of potential exposure remain open questions. Any organization that pulled the compromised version into its development environment or production systems should treat that infrastructure as potentially at risk.

Why This Matters: The Supply Chain Attack Problem

This incident represents a fundamental vulnerability in how modern software development works. Supply chain attacks on AI libraries are becoming the new frontier for cybercriminals and nation-state actors because they provide asymmetric leverage. Rather than targeting individual companies one at a time, attackers compromise a single widely used tool and gain access to thousands of victim systems at once.

For AI and machine learning specifically, the stakes are particularly high. ML engineers often work with massive datasets, proprietary models, and sensitive training pipelines. A compromised training library doesn't just risk your code; it risks your intellectual property, your training data, and your computational infrastructure. An attacker with access to your training environment can steal model weights, exfiltrate training datasets, or use your hardware for cryptomining or for launching further attacks.

PyTorch Lightning's position makes a compromise especially dangerous. It's not an obscure package used by a handful of teams. It's embedded in the workflows of major tech companies, startups, research institutions, and enterprises across every industry. A single point of compromise affects the entire supply chain, from development through deployment.

This also highlights a broader trend: the attack surface for AI infrastructure is expanding faster than security practices can keep up. Most organizations audit their direct dependencies but rarely audit transitive dependencies or the entire dependency tree. Supply chain attacks exploit this blind spot ruthlessly.

The Mechanics: How This Happened

The most likely attack vector was either a compromised maintainer account or a vulnerability in a dependency that PyTorch Lightning itself relies on. Open source projects, while generally secure through transparency and community review, become vulnerable when maintainers use weak passwords or lack multi-factor authentication, and when popular packages accumulate dependencies managed by different people with varying security practices.

Once an attacker gained access, they could inject malicious code that executes during installation or when the library is imported. This code could phone home to attacker infrastructure, create backdoors, steal environment variables (which often contain API keys and credentials), or establish persistence on the infected system.

The insidious part: most developers don't review the full source code of every package they install. They trust that the maintainers have vetted the code and that the open source community would catch malicious additions. Both assumptions can fail.

What You Should Do Right Now

Immediate Actions: If your organization uses PyTorch Lightning, check which versions you have installed across your development, testing, and production environments. Review the official PyTorch Lightning security advisories to identify which versions were compromised, then update to the patched version as soon as possible.
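As a starting point for that version check, here is a minimal sketch that reports which version is installed in the current Python environment. It assumes the library is installed under the PyPI distribution names `pytorch-lightning` or `lightning`; the `AFFECTED` set is a deliberately empty placeholder that you would fill in from the official advisory, since this article does not list affected versions. It reads package metadata only and never imports the library itself.

```python
from importlib import metadata

# Assumption: the distribution names under which the library is published on PyPI.
CANDIDATE_NAMES = ("pytorch-lightning", "lightning")


def installed_versions(names=CANDIDATE_NAMES):
    """Return {distribution name: version} for each candidate installed here."""
    found = {}
    for name in names:
        try:
            # Reads installed metadata only; does not import the package.
            found[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            continue
    return found


def flag_affected(found, affected_versions):
    """Return the entries of `found` whose version appears in `affected_versions`."""
    return {name: ver for name, ver in found.items() if ver in affected_versions}


if __name__ == "__main__":
    # Placeholder: copy the affected version strings from the official advisory.
    AFFECTED = set()
    found = installed_versions()
    print("installed:", found)
    print("affected:", flag_affected(found, AFFECTED))
```

Run it once per environment (each virtualenv, container image, and CI runner), because each one has its own set of installed packages.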

Dependency Auditing: Implement or strengthen your dependency auditing process. Use SBOM (Software Bill of Materials) generators to map your entire dependency tree, not just direct dependencies. Tools like Snyk, Dependabot, and Black Duck can help automate vulnerability scanning and alert you when a package you depend on is reported as vulnerable or compromised.

Code Review Practices: When updating critical libraries, particularly those in your ML pipeline, treat them like security updates. Review changelogs carefully. If possible, have security teams review the actual code changes in significant updates to your training infrastructure.
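One way to narrow down what needs human review, sketched here under the assumption that you have unpacked the old and new releases into two separate directories without installing either of them: hash every file and list what was added, removed, or modified. The function names and directory layout are illustrative.

```python
import hashlib
from pathlib import Path


def file_hashes(root):
    """Map each file's path (relative to `root`) to its SHA-256 digest."""
    root = Path(root)
    return {
        p.relative_to(root).as_posix(): hashlib.sha256(p.read_bytes()).hexdigest()
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def changed_files(old_root, new_root):
    """Summarize which files differ between two unpacked releases."""
    old, new = file_hashes(old_root), file_hashes(new_root)
    return {
        "added": sorted(set(new) - set(old)),
        "removed": sorted(set(old) - set(new)),
        "modified": sorted(k for k in set(old) & set(new) if old[k] != new[k]),
    }
```

Files that appear under "added" or "modified" but are not mentioned in the changelog are the ones worth reading first.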

Environment Isolation: Isolate your ML training environments from your production systems. Use containerization, separate credentials, and network segmentation so that a compromise in your training pipeline doesn't automatically give attackers access to your live models or user-facing infrastructure.

Credential Rotation: If you believe your systems may have been affected, rotate all credentials, API keys, and secrets that could have been accessed from a compromised training environment. This includes cloud credentials, database passwords, and authentication tokens.
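To build a rotation checklist, it can help to inventory which secrets were reachable from the affected environment. The sketch below lists environment variable names that look like credentials, based on a simple keyword heuristic that is an assumption of this example and will miss secrets with unusual names. It prints names only, never values.

```python
import os
import re

# Assumption: a naming heuristic; adjust the keywords to your own conventions.
SECRET_HINT = re.compile(
    r"(KEY|TOKEN|SECRET|PASSWORD|PASSWD|CREDENTIAL)", re.IGNORECASE
)


def secret_like_names(environ=None):
    """Return sorted names of environment variables that look like secrets."""
    environ = os.environ if environ is None else environ
    return sorted(name for name in environ if SECRET_HINT.search(name))


if __name__ == "__main__":
    for name in secret_like_names():
        print(name)  # names only; values are intentionally not printed
```

Treat the result as a lower bound: credentials stored in config files, cloud metadata services, or mounted secret volumes would not show up here and need their own review.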

Monitoring and Detection: Implement monitoring to detect unusual network traffic, credential access, or system behavior in your ML infrastructure. Supply chain compromises often include telemetry or command-and-control communications that can be detected with proper logging.

The Bigger Picture

This incident is a wake-up call that AI infrastructure security is not just a technical problem; it is a business risk. As AI becomes more central to enterprise operations, attackers will continue targeting the libraries and frameworks that power ML development. Organizations that treat their ML supply chain with the same rigor as their application security will be significantly better protected.

The responsibility is shared: maintainers need stronger account security and automated scanning, platforms need better verification mechanisms, and organizations need to audit their dependencies as aggressively as they audit their code.

Now you know more than 99% of people. — Sara Plaintext