Twill.ai Just Dropped and I Have THOUGHTS

So Twill.ai launches out of YC S25 with a simple pitch: cloud agents that write your PRs for you. Delegate work, get back code. I checked it out and here's my scorecard: 7.5/10. Solid concept, real problem to solve, but the execution and market positioning feel like the team is still figuring things out.

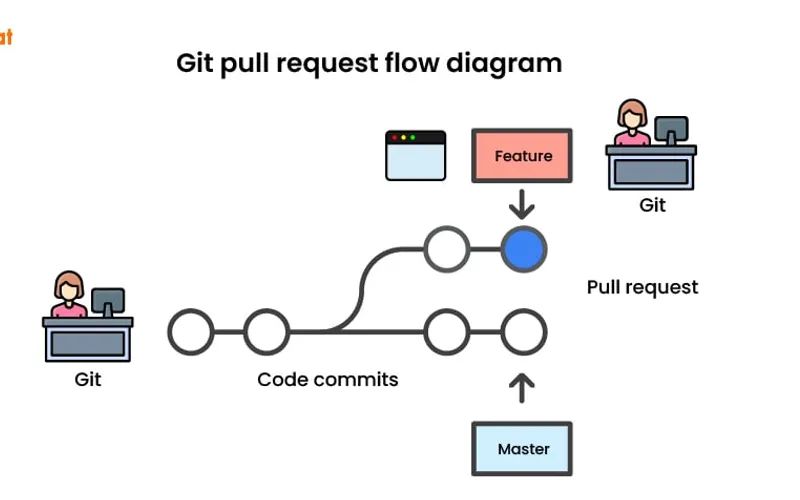

Let me be clear — the idea is good. Every engineer I know complains about PR review cycles, refactoring busywork, and repetitive code generation. Twill's saying "let agents handle it." That's not nothing. Compare this to GitHub Copilot (which is autocomplete on steroids) or Cursor (which is IDE-first code generation) — Twill's trying to go deeper. They're not just suggesting lines; they're orchestrating actual workflows and shipping PRs. That's ambitious.

But here's where it gets messy. 44 HN points and 46 comments? That's mid engagement for a YC launch. People are curious but not HYPED. The product feels like it's still in "prove-it-works" mode. Can agents actually understand your codebase context well enough to write production PRs? Or are we getting a bunch of shallow changes that senior engineers have to rewrite anyway? The vibe I'm getting is "promising prototype," not "game-changer." We've been burned before by "AI will write your code" startups.

Real talk: if Twill can actually reduce PR review time by 30-40% AND maintain code quality, they've got something. But that's the execution game, not the concept game. Right now they're betting on developer trust, and trust is EARNED, not launched. I'm watching this one. Could be the next big DevTools play, or it could be another "AI agent that mostly hallucinates bad refactors." Time will tell.

Stay sharp. — Max Signal