Introducing Project Glasswing: an urgent initiative to help secure the world’s most critical software.

It’s powered by our newest frontier model, Claude Mythos Preview, which can find software vulnerabilities better than all but the most skilled humans. https://t.co/NQ7IfEtYk7

— Anthropic (@AnthropicAI) April 7, 2026

What you’re seeing here is less “new chatbot feature” and more “AI lab entering serious cybersecurity territory.” Anthropic is saying its new model can find software vulnerabilities at near top-human level, and it’s wrapping that capability into a dedicated security initiative.

- This is a shift from consumer AI hype to infrastructure defense.

Project Glasswing is positioned around securing critical software, which usually means systems where a failure has large downstream impact: hospitals, finance stacks, cloud infrastructure, enterprise software, and public-sector tech. So this is about finding and fixing high-impact bugs before attackers do.

- Why this matters: vulnerability discovery is becoming machine-accelerated.

If a model can consistently spot subtle security flaws, defensive teams can audit more code, faster. That could shorten the time between “bug introduced” and “bug fixed,” which is one of the most important security metrics in practice.

A statement from Anthropic CEO, Dario Amodei, on our discussions with the Department of War. https://t.co/rM77LJejuk

— Anthropic (@AnthropicAI) February 26, 2026

This broader context matters because labs are increasingly pairing capability claims with security posture messaging. They know powerful models can be dual-use.

- Why regular people should care: safer software means fewer invisible disasters.

Most people never read CVEs, but everyone feels breaches, outages, stolen accounts, and ransomware fallout. Better defensive tooling can mean fewer incidents that interrupt banking, healthcare portals, employer systems, or apps you rely on daily.

A statement on the comments from Secretary of War Pete Hegseth. https://t.co/Gg7Zb09IMR

— Anthropic (@AnthropicAI) February 28, 2026

The point isn’t that AI will “solve security.” It’s that it can give defenders leverage in a fight where attackers have usually moved faster.

- The catch: this power has to be controlled carefully.

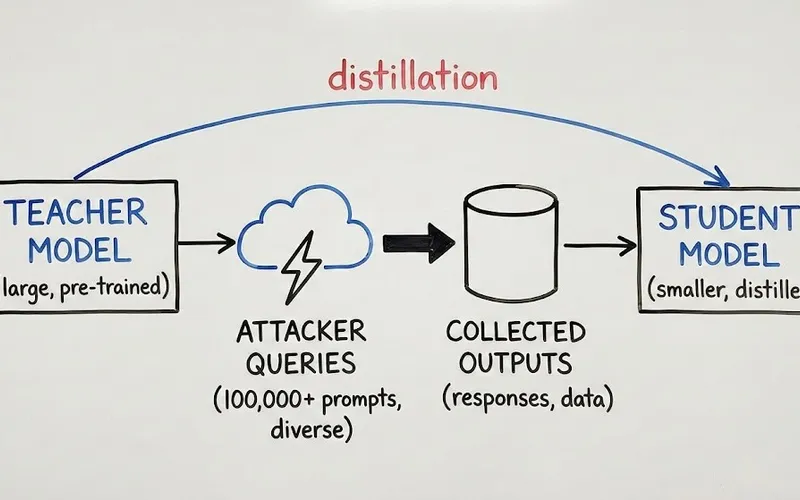

A model that can find serious vulnerabilities can be helpful for defenders and attractive to bad actors. So expect tighter access, staged rollouts, more monitoring, and stricter usage policies around advanced security-capable models.

Peter Steinberger is joining OpenAI to drive the next generation of personal agents. He is a genius with a lot of amazing ideas about the future of very smart agents interacting with each other to do very useful things for people. We expect this will quickly become core to our…

— Sam Altman (@sama) February 15, 2026

Across the major labs, the pattern is clear: frontier AI is now treated as strategic infrastructure, not just productivity software.

- What this means going forward: AI competition now includes “who can secure the stack.”

In the next phase, labs won’t just compete on chatbot quality. They’ll compete on trust, safeguards, and real-world defensive impact. For users and businesses, that means judging AI vendors not only by speed and creativity, but by security outcomes and accountability.

Bottom line: Project Glasswing is a signal that AI is moving deeper into cyber defense. You should care because if this works, the software you use every day may become harder to break, but only if these capabilities are deployed with strong guardrails.

Now you know more than 99% of people. — Sara Plaintext