A statement from Anthropic CEO, Dario Amodei, on our discussions with the Department of War. https://t.co/rM77LJejuk

— Anthropic (@AnthropicAI) February 26, 2026

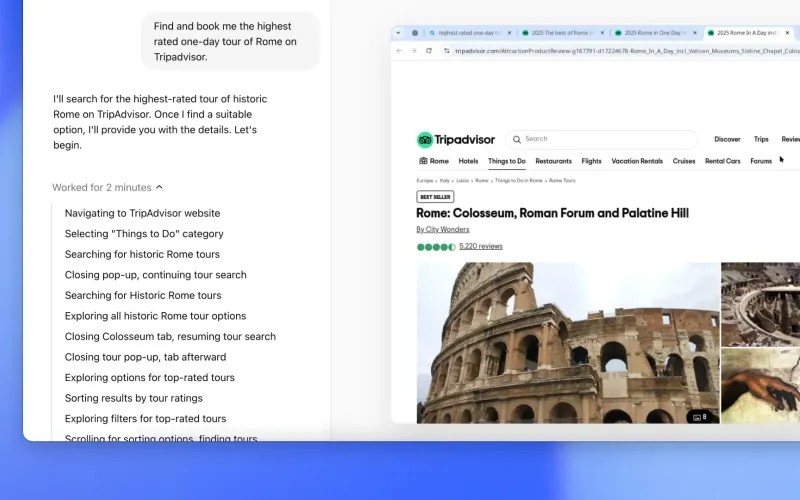

I’m starting with this embed because it frames the real shift: we’re in the “agent runtime” era now, not the “chatbot with better vibes” era. Grok 4.1 Fast is being pitched exactly that way, with Agent Tools API access to X data, web browsing, and code execution.

If you’re already on a previous Grok version, this is a practical upgrade, not a philosophical one. You’re mostly changing model IDs and enabling tool permissions safely, then validating cost and behavior under real workloads.

What changed in one line

You are moving from a strong base model workflow to a tool-native agent workflow. The headline difference is not just answer quality, it’s the model’s ability to plan and call tools across multi-step tasks.

    // Example model ID switch (verify exact IDs in your xAI console/docs)
    Before: grok-4-fast
    After: grok-4.1-fast-reasoning
    Alt: grok-4.1-fast-non-reasoning

Use reasoning for deep multi-step tasks, and non-reasoning for speed-sensitive UX. Don’t set one global default and forget it.
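One way to avoid a single global default is to pick the model per request. This is a minimal sketch, not an official pattern: the model IDs are the ones quoted above, while `pick_model`, its parameters, and the 2000 ms threshold are illustrative assumptions you would tune for your own product.

```python
# Model IDs as quoted in this article; verify them in your xAI console/docs.
REASONING_MODEL = "grok-4.1-fast-reasoning"
NON_REASONING_MODEL = "grok-4.1-fast-non-reasoning"

# Assumption: below this latency budget the reasoning variant is too slow
# for the user experience. The number is illustrative, not a vendor figure.
MIN_REASONING_BUDGET_MS = 2000


def pick_model(needs_multi_step: bool, latency_budget_ms: int) -> str:
    """Choose a model per request instead of one global default."""
    if needs_multi_step and latency_budget_ms >= MIN_REASONING_BUDGET_MS:
        return REASONING_MODEL
    return NON_REASONING_MODEL
```

A research task with a generous budget would get the reasoning model, while an autocomplete-style request would fall through to the non-reasoning one.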

5-minute upgrade checklist

- Confirm model access first. Before touching production config, make sure your account can actually call Grok 4.1 Fast and Agent Tools API in your region/project.

- Swap model ID in staging only. Do a canary rollout first so you can compare old vs new behavior side-by-side.

      { "model": "grok-4.1-fast-reasoning", "fallback_model": "grok-4-fast" }

- Enable tools explicitly. Don’t assume tool calls are auto-enabled just because the model changed.

      { "agent_tools": { "x_search": true, "web_search": true, "code_execution": true, "files_search": true } }

- Set hard spend and token caps. Agent loops can burn tokens faster than plain chat requests.

      { "limits": { "max_input_tokens": 64000, "max_output_tokens": 4000, "max_tool_calls_per_turn": 6, "daily_spend_usd": 500 } }

- Run three real evals, not toy prompts. Test your real support workflow, research workflow, and one failure-path workflow. Track completion quality, latency, tool error rate, and cost per resolved task.
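The four metrics in the last checklist item can be rolled up from per-task eval records. The sketch below assumes you log one record per eval task; `EvalRun`, its fields, and `summarize` are hypothetical names, not part of any xAI SDK.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class EvalRun:
    resolved: bool      # did the task finish with an acceptable result?
    latency_ms: float   # wall-clock time for the whole task
    tool_calls: int     # tool calls attempted during the task
    tool_errors: int    # tool calls that failed or were blocked
    cost_usd: float     # total spend attributed to the task


def summarize(runs: list[EvalRun]) -> dict[str, float]:
    """Aggregate completion rate, latency, tool error rate, and cost per resolved task."""
    if not runs:
        raise ValueError("need at least one eval run")
    resolved = sum(r.resolved for r in runs)
    total_calls = sum(r.tool_calls for r in runs)
    total_errors = sum(r.tool_errors for r in runs)
    total_cost = sum(r.cost_usd for r in runs)
    return {
        "completion_rate": resolved / len(runs),
        "median_latency_ms": median(r.latency_ms for r in runs),
        "tool_error_rate": total_errors / total_calls if total_calls else 0.0,
        # Infinite cost signals that nothing was resolved at all.
        "cost_per_resolved_task": total_cost / resolved if resolved else float("inf"),
    }
```

Run `summarize` once on the old model's results and once on the new model's, then compare the two dictionaries side by side during the canary.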

settings.json / config edits that matter

Most teams over-focus on model name and forget behavior controls. These are the edits that usually prevent day-one pain.

    {
      "model": "grok-4.1-fast-reasoning",
      "fallback_model": "grok-4-fast",
      "temperature": 0.2,
      "max_output_tokens": 4000,
      "agent_tools": {
        "x_search": true,
        "web_search": true,
        "code_execution": true,
        "files_search": true
      },
      "tool_policy": {
        "allow_external_browse": true,
        "allow_code_execution": true,
        "blocked_domains": ["internal-admin.example.com"],
        "require_citation_for_external_claims": true
      },
      "observability": {
        "track_tool_calls": true,
        "track_latency_ms": true,
        "track_cost_usd": true
      }
    }

Key point: if you give an agent more capability without adding policy controls, you created a risk upgrade, not a model upgrade.
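A cheap way to catch capability without controls is a pre-deploy check on the config itself. This sketch assumes the illustrative key names used in this article (`agent_tools`, `tool_policy`, `limits`, `observability`); the pairing of `web_search` with `allow_external_browse` is my assumption, and `find_policy_gaps` is a hypothetical helper, not a vendor tool.

```python
def find_policy_gaps(config: dict) -> list[str]:
    """Return human-readable problems where tools are enabled without matching controls."""
    tools = config.get("agent_tools", {})
    policy = config.get("tool_policy", {})
    limits = config.get("limits", {})
    observability = config.get("observability", {})
    any_tool_enabled = any(tools.values())

    problems = []
    if tools.get("code_execution") and not policy.get("allow_code_execution"):
        problems.append("code_execution is enabled but tool_policy does not allow it")
    # Assumption: web_search is the tool governed by allow_external_browse.
    if tools.get("web_search") and not policy.get("allow_external_browse"):
        problems.append("web_search is enabled but tool_policy does not allow external browse")
    if any_tool_enabled and "max_tool_calls_per_turn" not in limits:
        problems.append("tools are enabled without a max_tool_calls_per_turn cap")
    if any_tool_enabled and not observability.get("track_tool_calls"):
        problems.append("tools are enabled without tool-call tracking")
    return problems
```

Run against the settings shown above, this would flag the missing `limits` block, since that file enables tools but does not carry the caps from the checklist.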

Introducing Project Glasswing: an urgent initiative to help secure the world’s most critical software.

It’s powered by our newest frontier model, Claude Mythos Preview, which can find software vulnerabilities better than all but the most skilled humans. https://t.co/NQ7IfEtYk7

— Anthropic (@AnthropicAI) April 7, 2026

This second embed is useful context even though this article is about Grok: every frontier lab is signaling that higher coding/agent capability changes security posture. So your migration plan should include safety and governance from the start, not after incidents.

Breaking changes and gotchas you’ll hit first

Model alias mismatch. IDs differ by platform and release channel. “Works in docs” does not always mean “enabled in your project.”

Tool permission failures. Requests may succeed while tool calls silently fail due to missing tool toggles or policy blocks.

Longer tail latency. Multi-tool reasoning can be slower than prior single-pass responses. Budget this into SLAs.

Unexpected output verbosity. Better reasoning can mean longer completions unless you clamp output tokens and format constraints.

Cost drift in agent loops. Your old “cost per prompt” KPI won’t catch tool-heavy expansion. Measure cost per resolved task.
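The silent tool failure gotcha is the easiest one to miss, so it helps to check tool-call outcomes explicitly rather than trusting a successful response. The sketch below assumes each tool call can be mapped to a record with `name` and `status` fields; that shape is hypothetical, so adapt it to whatever your client actually returns.

```python
def failed_tool_calls(tool_calls: list[dict]) -> list[str]:
    """Return the names of tool calls whose status is anything other than "ok"."""
    return [
        call.get("name", "<unknown>")
        for call in tool_calls
        if call.get("status") != "ok"
    ]


# Hypothetical records for one request that returned successfully overall.
calls = [
    {"name": "web_search", "status": "ok"},
    {"name": "code_execution", "status": "blocked_by_policy"},
]

failures = failed_tool_calls(calls)
if failures:
    # In a real service this would go to your logs or alerting.
    print(f"request succeeded but tools failed: {failures}")
```

Feeding the failure count into your tool error rate metric makes missing toggles and policy blocks visible on the first day of the canary.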

Cost impact in plain English

Grok 4.1 Fast is typically positioned as cost-efficient at the token level, but the real bill in agent mode is workflow-level: more tool calls, more intermediate reasoning, and bigger outputs when tasks are complex.

If your app is quick Q&A, cost may stay flat or improve. If your app does multi-hop research, support diagnosis, or code execution chains, expect a noticeable spend bump until you tune caps.

Practical budgeting rule: plan for 1.5x to 3x temporary spend during the first week of migration, then optimize once telemetry shows where calls are expanding.
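The budgeting rule is simple arithmetic, sketched here so you can plug in your own baseline. The 1.5x and 3x multipliers come from the rule above; `migration_budget` and the example baseline are illustrative assumptions.

```python
def migration_budget(
    baseline_weekly_usd: float, low: float = 1.5, high: float = 3.0
) -> tuple[float, float]:
    """Return the (low, high) spend range to plan for during the first migration week."""
    return (baseline_weekly_usd * low, baseline_weekly_usd * high)


# Example: a team currently spending 2,000 USD per week.
low_usd, high_usd = migration_budget(2000.0)
print(f"plan for {low_usd:.0f} to {high_usd:.0f} USD in week one")
```

If your daily spend cap multiplied by seven sits below the low end of that range, expect the cap to trip during validation and decide in advance whether that is what you want.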

A statement on the comments from Secretary of War Pete Hegseth. https://t.co/Gg7Zb09IMR

— Anthropic (@AnthropicAI) February 28, 2026

Post-embed takeaway: the frontier conversation is now “capability plus control.” The teams that win won’t be the ones who upgrade fastest, but the ones who upgrade cleanly with guardrails and measurement.

When to NOT upgrade yet

You don’t need tool-native workflows. If your current model handles your use case fine, don’t add complexity for no reason.

You lack observability. No cost tracing, no tool-call logs, no latency dashboards means you can’t manage this safely.

You’re in a freeze window. If you’re in a high-risk release period, defer until you can test properly.

You have strict compliance boundaries. External browse and social-data retrieval may require policy/legal updates first.

Your users prioritize consistency over depth. If deterministic behavior is critical, keep the previous version as primary and run 4.1 Fast as opt-in.

Bottom line

Upgrading to Grok 4.1 Fast is worth it if your product depends on tool-calling, live retrieval, and agentic task completion. It is not an automatic win for every workload.

Do the boring things well: change model ID in staging, enable tools intentionally, add fallback, cap spend, and compare on real tasks. Five minutes to wire, a few days to validate, and you’ll know whether this is a true upgrade for your stack or just launch-week FOMO.

Now you know more than 99% of people. — Sara Plaintext