A statement from Anthropic CEO, Dario Amodei, on our discussions with the Department of War. https://t.co/rM77LJejuk

— Anthropic (@AnthropicAI) February 26, 2026

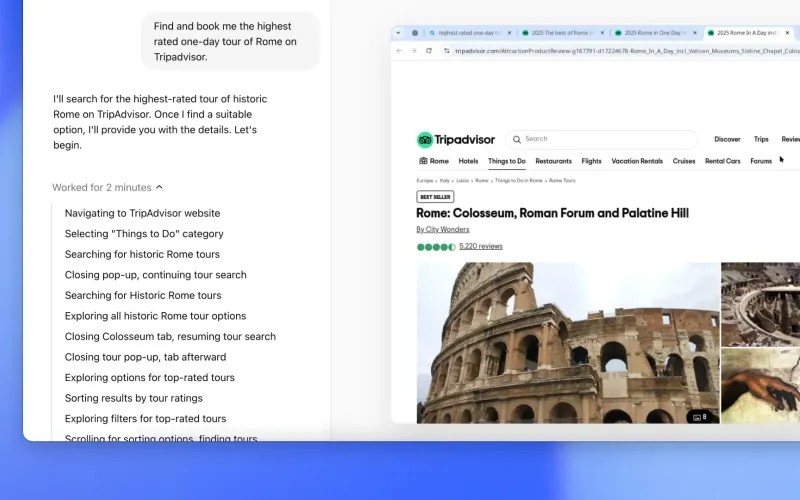

I’m starting with this embed because it captures the new reality: frontier model competition is now about agent performance with tools, not just chat quality. That’s exactly where Grok 4.1 Fast is positioned, with Agent Tools API access to X data, web browsing, and code execution.

Here’s the practical setup guide for builders who want Grok 4.1 Fast wired into everyday dev workflows. I’m using concrete config edits you can copy, plus the caveats that save you from wasting a day on “why doesn’t this model show up?”

Before you touch any tool

Do these three things once and you avoid most of the migration pain. First, confirm your xAI account has Grok 4.1 Fast enabled. Second, decide whether you want reasoning or non-reasoning as the default. Third, set a fallback model so your team isn’t blocked if the model is unavailable or tool calls fail.

    {
      "primary_model": "grok-4.1-fast-reasoning",
      "fallback_model": "grok-4-fast",
      "max_output_tokens": 4000,
      "temperature": 0.2
    }

Use reasoning for deep multi-step tasks, and non-reasoning for fast UX paths.
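The fallback idea above can be sketched in a few lines of Python. This is a minimal illustration, not an official client: `complete_with_fallback` and the `call_model` callback are hypothetical names, and the model IDs are simply the ones from the config above.

```python
from typing import Callable, Sequence


def complete_with_fallback(
    prompt: str,
    call_model: Callable[[str, str], str],
    models: Sequence[str] = ("grok-4.1-fast-reasoning", "grok-4-fast"),
) -> tuple[str, str]:
    """Try each model in order and return (model_used, reply) from the first success.

    `call_model(model, prompt)` is a hypothetical callback that performs the
    actual API request and raises on any failure.
    """
    errors: list[str] = []
    for model in models:
        try:
            return model, call_model(model, prompt)
        except Exception as exc:  # broad on purpose: any failure triggers the fallback
            errors.append(f"{model}: {exc}")
    raise RuntimeError("all models failed: " + "; ".join(errors))
```

Returning the model that actually answered makes it easy to log how often the fallback fires, which is worth watching during the first weeks.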

Claude Code

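Claude Code can be pointed at other models only through a gateway or provider layer that exposes an endpoint it can talk to. The change is the model ID plus the base URL and API key environment variable, set to xAI (or your gateway). Treat the variable and key names below as illustrative and confirm them against the docs for your Claude Code version.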

    // .env
    OPENAI_API_KEY=your_xai_or_gateway_key
    OPENAI_BASE_URL=https://api.x.ai/v1

    // settings.json (or equivalent Claude Code config)
    {
      "model": "grok-4.1-fast-reasoning",
      "fallbackModel": "grok-4-fast",
      "maxOutputTokens": 4000,
      "temperature": 0.2
    }

If your current setup hardcodes an Anthropic endpoint, this is the one change that matters: point the client to the xAI-compatible endpoint and update the model ID. Keep your old model as fallback for one sprint.

Cursor

In Cursor, the fastest path is adding a custom OpenAI-compatible provider, then changing the default model. If you already have custom providers enabled, this is a two-line swap.

    // cursor settings.json
    {
      "cursor.openAI.baseURL": "https://api.x.ai/v1",
      "cursor.openAI.apiKey": "${XAI_API_KEY}",
      "cursor.aiModel": "grok-4.1-fast-reasoning",
      "cursor.fallbackModel": "grok-4-fast",
      "cursor.maxOutputTokens": 4000
    }

Gotcha: if Grok does not appear in the model picker, don’t debug Cursor first. Verify account entitlement and endpoint compatibility first.
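One way to check entitlement before touching the editor is to ask the endpoint which models your key can see. The sketch below assumes the endpoint exposes an OpenAI-style `GET /models` listing shaped like `{"data": [{"id": ...}]}`; that shape, and the helper names `fetch_models`, `model_ids`, and `is_entitled`, are assumptions to verify against the xAI docs.

```python
import json
import urllib.request


def fetch_models(base_url: str, api_key: str) -> dict:
    """GET {base_url}/models with a bearer token and return the parsed JSON body."""
    req = urllib.request.Request(
        f"{base_url.rstrip('/')}/models",
        headers={"Authorization": f"Bearer {api_key}"},
    )
    with urllib.request.urlopen(req, timeout=30) as resp:
        return json.load(resp)


def model_ids(models_payload: dict) -> set[str]:
    """Collect model IDs from an OpenAI-style listing (assumed response shape)."""
    return {entry["id"] for entry in models_payload.get("data", []) if "id" in entry}


def is_entitled(models_payload: dict, wanted: str) -> bool:
    """True when the wanted model ID appears in the listing for this key."""
    return wanted in model_ids(models_payload)


# Usage (performs a network call, so it is left commented out):
# import os
# payload = fetch_models("https://api.x.ai/v1", os.environ["XAI_API_KEY"])
# print(is_entitled(payload, "grok-4.1-fast-reasoning"))
```

If the model is missing from the listing, the problem is on the account or endpoint side and no editor setting will fix it.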

Zed

Zed’s assistant config is JSON-driven, so migration is straightforward: switch provider endpoint and model line. Keep tool-heavy prompts in a separate profile if your team wants lower-cost defaults for routine edits.

    // ~/.config/zed/settings.json (example)
    {
      "assistant": {
        "provider": "openai_compatible",
        "base_url": "https://api.x.ai/v1",
        "api_key_env": "XAI_API_KEY",
        "model": "grok-4.1-fast-reasoning",
        "fallback_model": "grok-4-fast",
        "temperature": 0.2,
        "max_output_tokens": 4000
      }
    }

If your org uses a proxy gateway, keep this exact shape and only swap base_url and key env variable.

Introducing Project Glasswing: an urgent initiative to help secure the world’s most critical software.

It’s powered by our newest frontier model, Claude Mythos Preview, which can find software vulnerabilities better than all but the most skilled humans. https://t.co/NQ7IfEtYk7

— Anthropic (@AnthropicAI) April 7, 2026

This embed is a useful reminder: stronger coding/agent models also raise security stakes. So don’t just “turn it on.” Add policy controls for browsing and execution from day one.
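A policy control can be as simple as an allowlist applied to the tool list before a request leaves your gateway. The sketch below is one possible shape, not an xAI feature: `apply_tool_policy` is a hypothetical helper, and the tool type names are the ones used in this guide's API example.

```python
def apply_tool_policy(
    requested: list[dict], allowed: set[str]
) -> tuple[list[dict], list[str]]:
    """Split requested tools into (kept, denied_types) based on an allowlist.

    Each tool is a dict with a "type" key, matching the request shape used in
    this guide. Tools without an allowed type are dropped and reported.
    """
    kept = [tool for tool in requested if tool.get("type") in allowed]
    denied = [str(tool.get("type")) for tool in requested if tool.get("type") not in allowed]
    return kept, denied


# Example policy: browsing and X search allowed, code execution off by default.
DEFAULT_ALLOWED = {"web_search", "x_search"}
```

Returning the denied types rather than silently dropping them lets you log or reject requests that asked for more than the policy grants.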

xAI API (direct)

This is the cleanest integration and where you should validate behavior before editor rollout. You can call Grok 4.1 Fast directly and enable tool usage in one request envelope.

    curl https://api.x.ai/v1/chat/completions \
      -H "Authorization: Bearer $XAI_API_KEY" \
      -H "Content-Type: application/json" \
      -d '{
        "model": "grok-4.1-fast-reasoning",
        "messages": [
          {"role": "user", "content": "Audit this repo plan and identify likely security risks."}
        ],
        "temperature": 0.2,
        "max_tokens": 4000,
        "tools": [
          {"type": "web_search"},
          {"type": "x_search"},
          {"type": "code_execution"}
        ]
      }'

Exact tool names and model IDs can vary by API version, so match the latest xAI docs. The key config change is the model ID plus explicitly enabled tools.
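For teams that prefer to validate from a script, the same request can be built in Python with only the standard library. This mirrors the curl call above field for field; `build_request` and `send` are hypothetical helper names, and the tool names and model ID carry the same caveat about matching current xAI docs.

```python
import json
import urllib.request


def build_request(
    prompt: str,
    model: str = "grok-4.1-fast-reasoning",
    tools: tuple[str, ...] = ("web_search", "x_search", "code_execution"),
) -> dict:
    """Build the JSON body used in this guide's curl example."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0.2,
        "max_tokens": 4000,
        "tools": [{"type": name} for name in tools],
    }


def send(payload: dict, api_key: str, base_url: str = "https://api.x.ai/v1") -> dict:
    """POST the payload to {base_url}/chat/completions and return the parsed reply."""
    req = urllib.request.Request(
        f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=60) as resp:
        return json.load(resp)
```

Keeping the payload builder separate from the network call means you can unit-test the request shape without spending tokens.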

Bedrock

Important: Grok 4.1 Fast is not a native Bedrock model family at the time of this guide. So the exact setup is a bridge pattern, not a native modelId swap.

    // What does NOT work natively:
    {
      "modelId": "xai.grok-4.1-fast"
    }

    // What to do instead:
    {
      "llm_router": {
        "provider": "xai",
        "base_url": "https://api.x.ai/v1",
        "model": "grok-4.1-fast-reasoning"
      },
      "bedrock_apps_call_router": true
    }

If your app is Bedrock-native, keep Bedrock for orchestration/infra and route LLM calls for Grok through your model router. Do not burn time hunting a Bedrock model ID that doesn’t exist in your account catalog.

Vertex AI

Same story as Bedrock: no standard native Grok publisher model path in Vertex for most teams right now. Use a gateway or service wrapper that forwards to xAI.

    // What teams expect (usually unavailable):
    "model": "publishers/xai/models/grok-4.1-fast"

    // Practical config:
    {
      "modelGateway": {
        "target": "xai",
        "baseUrl": "https://api.x.ai/v1",
        "apiKeySecret": "projects/PROJECT_ID/secrets/XAI_API_KEY",
        "model": "grok-4.1-fast-reasoning",
        "fallbackModel": "grok-4-fast"
      }
    }

If your platform mandates Vertex endpoints only, deploy a thin internal adapter service and point Vertex callers to that adapter.
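The routing decision inside such an adapter (or the Bedrock-side router) can be very small. The sketch below shows only that decision, under the assumption that Grok model names share a `grok-` prefix; `Upstream`, `ROUTES`, and `resolve_upstream` are hypothetical names, and a real adapter would also handle auth, retries, and request translation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Upstream:
    provider: str
    base_url: str


# Hypothetical routing table: model-name prefix -> external upstream.
ROUTES = {
    "grok-": Upstream(provider="xai", base_url="https://api.x.ai/v1"),
}


def resolve_upstream(model: str, native: Optional[Upstream] = None) -> Optional[Upstream]:
    """Return the external upstream for a model, or `native` when no route matches.

    Callers treat the `native` result as "stay on the platform's own model path".
    """
    for prefix, upstream in ROUTES.items():
        if model.startswith(prefix):
            return upstream
    return native
```

Keeping the table explicit means adding or removing an external provider is a one-line change that is easy to review.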

A statement on the comments from Secretary of War Pete Hegseth. https://t.co/Gg7Zb09IMR

— Anthropic (@AnthropicAI) February 28, 2026

Post-embed takeaway: every lab is converging on “agentic capability + operational risk.” Your integration quality matters more than launch-week hype.

Final rollout pattern that works

Start with direct API validation, then editor integrations, then team-wide default. Keep fallback on for at least two weeks. Track cost per resolved task, not just token spend, because tool-calling can multiply workflow cost even when per-token pricing looks cheap.
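Cost per resolved task is simple to compute once each run records its outcome and its spend. A minimal sketch, assuming you log token cost and tool cost per run in USD; the `TaskRun` fields and the function name are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class TaskRun:
    resolved: bool          # did this run actually finish the task?
    token_cost_usd: float   # spend on input and output tokens
    tool_cost_usd: float = 0.0  # spend attributed to tool calls, if billed separately


def cost_per_resolved_task(runs: Iterable[TaskRun]) -> Optional[float]:
    """Total spend across all runs divided by the number of resolved tasks.

    Failed runs still count toward spend, which is the point of the metric.
    Returns None when nothing was resolved, to avoid dividing by zero.
    """
    runs = list(runs)
    total = sum(run.token_cost_usd + run.tool_cost_usd for run in runs)
    resolved = sum(1 for run in runs if run.resolved)
    if resolved == 0:
        return None
    return total / resolved
```

Comparing this number between the primary and fallback models is more informative than comparing their per-token prices.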

If you do one thing right, make it this config discipline: explicit model ID, explicit endpoint, explicit tool permissions, explicit fallback. That’s how you get Grok 4.1 Fast’s upside without turning your dev stack into a brittle demo.

Now you know more than 99% of people. — Sara Plaintext