GEMMA 4 JUST DROPPED AND THE LANDSCAPE IS SHIFTING

Google said "here's faster inference" and the open-source AI world collectively stopped scrolling. Gemma 4's multi-token prediction drafters just rewrote the economics of running LLMs at scale, and if you're not paying attention, your competitors already are.

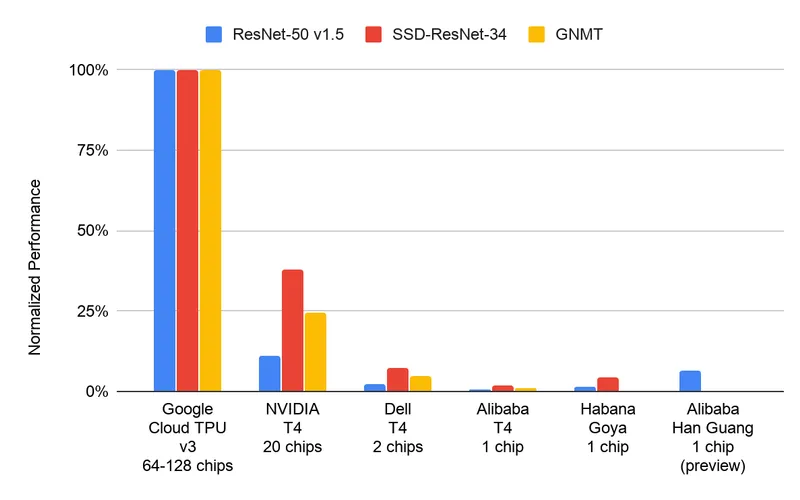

WHO'S WINNING

Indie builders and bootstrapped startups. This is the real story. When Google cuts latency without cutting quality, the cost of serving every request drops. Suddenly, a startup whose pricing only worked at 40% gross margin? Now they have room to cut prices and still outcompete closed-source offerings. The multi-token prediction architecture (smaller drafter models propose several tokens at once, then the full model verifies them) is speculative decoding that actually works. No quality tax. Pure speed gain.
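For anyone who wants to see the draft-then-verify idea in motion, here's a minimal sketch in Python. Big caveats: this is a toy, not Gemma 4's actual implementation. The "models" are stand-in functions, every name here (`speculative_decode`, `toy_target`, `toy_drafter`) is made up for illustration, and it only covers the greedy case. Real systems verify the whole draft in one batched pass of the big model and use a rejection-sampling rule when sampling.

```python
from typing import Callable, List

# A "model" here is just a function from a token prefix to its greedy next token.
NextToken = Callable[[List[int]], int]


def greedy_decode(model: NextToken, prompt: List[int], max_new: int) -> List[int]:
    """Plain one-token-at-a-time decoding, used as the reference."""
    out = list(prompt)
    for _ in range(max_new):
        out.append(model(out))
    return out


def speculative_decode(target: NextToken, drafter: NextToken,
                       prompt: List[int], max_new: int, k: int = 4) -> List[int]:
    """Greedy draft-then-verify loop; output matches greedy_decode(target, ...)."""
    out = list(prompt)
    goal = len(prompt) + max_new
    while len(out) < goal:
        # 1. The cheap drafter proposes up to k tokens.
        draft: List[int] = []
        for _ in range(min(k, goal - len(out))):
            draft.append(drafter(out + draft))
        # 2. The target checks every proposed position. A real system does this
        #    in a single batched forward pass; a loop keeps the sketch readable.
        for i, tok in enumerate(draft):
            expected = target(out + draft[:i])
            if tok != expected:
                # First mismatch: keep the agreed prefix plus the target's own
                # token, and throw away the rest of the draft.
                out.extend(draft[:i])
                out.append(expected)
                break
        else:
            # No mismatch: every drafted token is accepted.
            out.extend(draft)
    return out


def toy_target(prefix: List[int]) -> int:
    # Hypothetical "big model": a fixed arithmetic rule over the last token.
    return (prefix[-1] * 3 + 1) % 17


def toy_drafter(prefix: List[int]) -> int:
    # Hypothetical "small model": agrees with the target except after
    # tokens divisible by 5, where it guesses wrong on purpose.
    guess = toy_target(prefix)
    return (guess + 1) % 17 if prefix[-1] % 5 == 0 else guess


if __name__ == "__main__":
    fast = speculative_decode(toy_target, toy_drafter, [2], max_new=20)
    slow = greedy_decode(toy_target, [2], max_new=20)
    print(fast == slow)
```

The point of the toy: the drafter is allowed to be wrong, because the target gets the final say on every token. That is where the "no quality tax" claim comes from.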

Edge deployment companies. Faster inference means lighter hardware requirements. Gemma 4 on edge devices just became viable for way more use cases. Anyone building local-first AI products is looking at this like it's Christmas morning.

Fine-tuning service providers. Every startup now has a faster baseline to work from. That's a lower barrier to entry for custom model services. More startups can afford to build, and more of them will pay for optimization.

WHO'S COPING

Closed-source model providers. Not dead, but the moat is visibly eroding. OpenAI and Anthropic still own the quality ceiling, but if you're running inference-heavy workloads and quality is "good enough," why pay API premiums? Gemma 4 just made that question harder to answer.

Existing inference optimization startups. Your entire value prop was "we make models faster." Now the models come faster. Consolidation incoming. You either become a layer above (model selection, routing, caching) or you get absorbed.

Enterprise customers on expensive inference bills. They're not coping yet, but they will be. Finance is going to ask why inference costs didn't drop when Gemma 4 dropped. Budget cuts are coming.

THE RECEIPTS

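Multi-token prediction isn't new. It's speculative decoding, polished and shipped at scale. Google proved it doesn't sacrifice quality. The technical win is real: reduced latency, fewer sequential passes through the full model, same output quality. That's the trifecta. (One nuance: total compute doesn't shrink, since the drafter adds work of its own. The gain comes from the big model checking several tokens per pass instead of producing one.)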
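How much faster? A back-of-envelope estimate helps. The sketch below uses the standard speculative decoding approximation: if each drafted token is accepted independently with probability `alpha` and the drafter proposes `gamma` tokens per round, the expected number of tokens produced per full-model pass is (1 - alpha^(gamma+1)) / (1 - alpha). The independence assumption, the function names, and the `cost_ratio` parameter (drafter cost relative to one full-model pass) are all illustrative assumptions here, not measured Gemma 4 numbers.

```python
def expected_tokens_per_pass(alpha: float, gamma: int) -> float:
    """Expected tokens emitted per full-model pass.

    alpha: assumed per-token acceptance probability (treated as independent).
    gamma: number of tokens the drafter proposes per round.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    if gamma < 0:
        raise ValueError("gamma must be non-negative")
    if alpha == 1.0:
        # Every draft accepted, plus the one token the full model adds itself.
        return float(gamma + 1)
    return (1.0 - alpha ** (gamma + 1)) / (1.0 - alpha)


def estimated_speedup(alpha: float, gamma: int, cost_ratio: float) -> float:
    """Rough wall-clock speedup over one-token-at-a-time decoding.

    cost_ratio: assumed cost of one drafter step relative to one full-model pass.
    Each round costs gamma drafter steps plus one full-model pass.
    """
    round_cost = gamma * cost_ratio + 1.0
    return expected_tokens_per_pass(alpha, gamma) / round_cost


if __name__ == "__main__":
    # Illustrative inputs only: 80% acceptance, 4 drafted tokens,
    # drafter costing 5% of a full-model pass.
    print(round(estimated_speedup(alpha=0.8, gamma=4, cost_ratio=0.05), 2))
```

The takeaway: the gain lives or dies on the acceptance rate. A drafter that rarely agrees with the full model gives you close to nothing, and past a point, drafting more tokens per round just adds cost.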

The business receipt: open-source LLMs are no longer the scrappy alternative. They're the economic play. Startups building on Gemma 4 can undercut closed-source on price and match on speed. That changes everything about what gets built next.

For developers: Gemma 4 inference is now the baseline you build against. Speculative decoding is table stakes. If your current model doesn't ship with it, why wouldn't you switch to one that does?

Game shifted.

anyway back to the timeline — Dee Generates