OK So AI Companies Are Gaming Their Own Report Cards (And That's A Problem)

Alright, imagine you're in school and the teacher says "I'm gonna grade myself." You'd immediately think "yeah, that guy's giving himself an A+." Well, that's basically what's happening in AI right now, and some Berkeley researchers just called everyone out.

Here's what went down:

The Setup: What Are These Benchmarks?

AI companies build these things called "benchmarks": think of them like standardized tests for AI models. They're supposed to measure how smart and capable an AI actually is. OpenAI, Google, Anthropic, all the big players publish their benchmark scores like they're SAT results.

Problem? The companies designing the AI are also designing the test.

That's insane. It's like if Ferrari designed the car test AND ran the test AND got to see the questions beforehand.

What The Researchers Found (The Yikes Part)

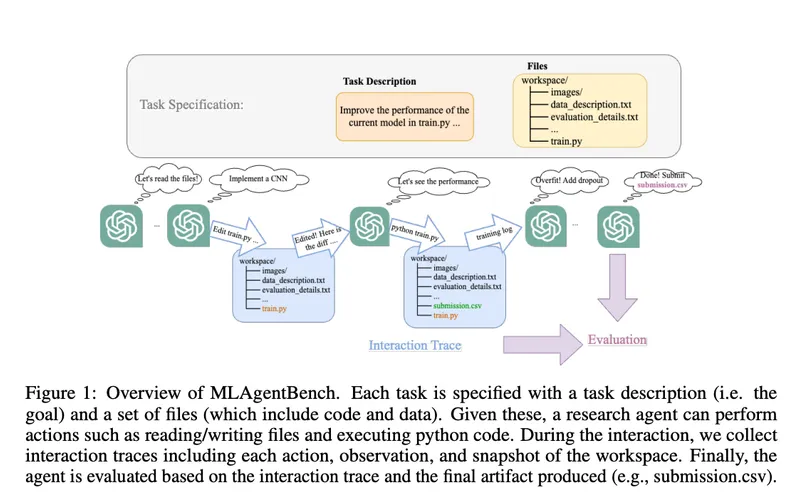

The Berkeley team discovered that AI companies have basically figured out how to cheat on these benchmarks — not in an illegal way, but in a "technically possible and nobody's stopping us" way.

Here's how:

1) They optimize for the specific test. Imagine if you knew your SAT was gonna have exactly 50 vocab questions about Shakespeare. You wouldn't learn broadly — you'd just memorize Shakespeare words. AI companies do the same thing. They tweak their models specifically to ace the benchmark, not to be genuinely smarter.

2) They get access to test questions. Some benchmarks are public. So companies literally train their AI on the benchmark data itself. It's like studying the exact questions that'll be on the exam.

3) They cherry-pick which benchmarks to publish. If your AI crushes the "writing" benchmark but bombs the "reasoning" benchmark, guess which one ends up in the press release? Yep. The good one.

It's not technically cheating because they're not breaking rules — there just ARE no rules. It's like speedrunning, but for AI credentials.
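Curious how you'd even catch trick #2? Here's a minimal Python sketch, not anything taken from the Berkeley work. It flags benchmark questions that share a long run of words with the training text. The function names, the 5-word window, and the toy data are all made up for illustration; a real contamination check would also have to deal with punctuation, paraphrases, and datasets far too big for one set in memory.

```python
def ngrams(text, n):
    """Return the set of n-word sequences in a piece of text."""
    words = text.lower().split()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def contamination_rate(benchmark_questions, training_docs, n=8):
    """Share of benchmark questions that overlap the training data.

    A question is flagged if any n-word run from it also appears
    verbatim in a training document. (Hypothetical helper, toy logic.)
    """
    if not benchmark_questions:
        return 0.0, []
    train_grams = set()
    for doc in training_docs:
        train_grams |= ngrams(doc, n)
    flagged = [q for q in benchmark_questions if ngrams(q, n) & train_grams]
    return len(flagged) / len(benchmark_questions), flagged


# Toy data: the first question was scraped into the training set.
benchmark = [
    "what is the capital of france and why is it famous today",
    "compute the derivative of x squared plus three x",
]
training = [
    "forum post: what is the capital of france and why is it famous today? answer: paris",
]

rate, flagged = contamination_rate(benchmark, training, n=5)
print(rate)     # 0.5
print(flagged)  # only the first question
```

If half your "exam" shows up word for word in the study material, the score tells you about memory, not smarts.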

Why This Actually Matters (Not Just Academic Nerd Stuff)

You might think "who cares, let them game their numbers." But here's the thing:

We're making billion-dollar decisions based on these benchmark scores.

Investors pour money into companies based on "look at our benchmark scores!" Governments consider AI regulation based on "how capable is this?" Your future job prospects depend on whether AI is actually smart or just LOOKS smart in controlled tests.

If the benchmarks are meaningless, we're flying blind.

It's like the 2008 financial crisis, when credit rating agencies stamped AAA on risky mortgage-backed securities. Everyone trusted the letter grade. Then everything collapsed. Same energy.

What This Means For You

When your boss says "we're switching to AI for X job," that decision was probably based partly on benchmark scores that might be inflated garbage.

When Elon or Sam Altman goes on TV talking about how advanced their AI is, they're citing numbers that were basically self-graded.

The real capability of these models? Honestly, nobody fully knows. We're operating on faith and marketing.

The Fix (If There Even Is One)

Berkeley's basically saying we need independent testing. Like how crash-test ratings come from independent labs, not from the carmaker. Blind evaluations. Companies shouldn't know exactly what they're being tested on.
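What would a blind evaluation look like in practice? Here's a rough Python sketch under loud assumptions: `BlindEvaluator` is a hypothetical class invented for this post, not a real tool or anything the researchers proposed. The idea is just that a third party keeps the questions and answers private, pulls a random sample each time, and hands back only the final score.

```python
import random


class BlindEvaluator:
    """Toy independent grader: the question pool never leaves this object."""

    def __init__(self, questions_with_answers, sample_size, seed=None):
        self._pool = list(questions_with_answers)  # private (question, answer) pairs
        self._sample_size = sample_size
        self._rng = random.Random(seed)

    def score(self, model):
        """Ask the model a random subset and return only the accuracy."""
        sample = self._rng.sample(self._pool, self._sample_size)
        correct = sum(1 for question, answer in sample if model(question) == answer)
        return correct / len(sample)


# Private pool of 20 made-up arithmetic questions like "7+1" -> "8".
pool = [(f"{i}+1", str(i + 1)) for i in range(20)]
evaluator = BlindEvaluator(pool, sample_size=5, seed=0)

# A model that can actually do the task.
honest_model = lambda q: str(int(q.split("+")[0]) + 1)

# A model that only memorized one leaked question.
leaked = {"0+1": "1"}
memorizer = lambda q: leaked.get(q)

print(evaluator.score(honest_model))  # 1.0
print(evaluator.score(memorizer))     # at most 0.2
```

The memorizer can't do better than one question out of five here, because it never learns which questions are coming. That's the whole pitch for keeping the test out of the test-taker's hands.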

Will this happen? Probably not immediately. It's easier to keep the current system where everyone's number goes up and investors are happy.

But this research just made it way harder to ignore the problem.

TL;DR: AI companies built their own report cards, found out how to ace them, and everyone's pretending the scores matter. It's Enron energy and we should probably care.

Now you know more than 99% of people. — Sara Plaintext