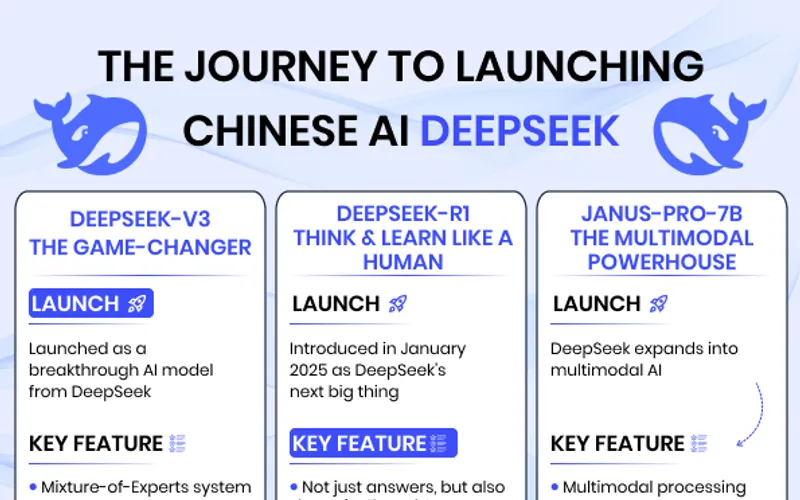

DeepSeek v4 is not just another model launch; it is a tooling and economics event. The reason builders care is simple: you can now get competitive capability with much lower inference cost in many workloads, and the integration friction is relatively low because DeepSeek supports OpenAI-compatible and Anthropic-compatible formats. If your startup margin depends on token costs, this is a “change your routing this week” moment.

This setup guide shows how to wire DeepSeek v4 across the major dev surfaces: Claude Code, Cursor, Zed, direct API, Bedrock-style routing, and Vertex-style orchestration. The snippets are intentionally practical so you can copy, adapt, and ship.

Before all tools: model IDs and base URLs

DeepSeek v4 introduces two main model targets, reachable through two base URLs:

{
  "models": {
    "throughput_default": "deepseek-v4-flash",
    "high_capability": "deepseek-v4-pro"
  },
  "base_urls": {
    "openai_compatible": "https://api.deepseek.com",
    "anthropic_compatible": "https://api.deepseek.com/anthropic"
  }
}

Use deepseek-v4-flash for broad traffic and deepseek-v4-pro for high-value complex tasks. Keep a rollback route to your current production model until metrics prove the switch.
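As a rough sketch of the flash/pro split with a rollback switch, the selection logic might look like the following. The model IDs and base URLs come from the listing above; the tier names, the `pick_model` helper, and the `use_rollback` flag are hypothetical names chosen for illustration.

```python
from typing import Optional

# Model IDs and base URLs as listed in this guide.
MODELS = {
    "default": "deepseek-v4-flash",  # broad traffic
    "complex": "deepseek-v4-pro",    # high-value complex tasks
}
BASE_URLS = {
    "openai_compatible": "https://api.deepseek.com",
    "anthropic_compatible": "https://api.deepseek.com/anthropic",
}


def pick_model(tier: str,
               rollback_model: Optional[str] = None,
               use_rollback: bool = False) -> str:
    """Return the model ID for a tier (hypothetical helper).

    When use_rollback is True, the caller's current production model is
    returned instead, so the switch can be reverted without a deploy.
    """
    if use_rollback:
        if rollback_model is None:
            raise ValueError("rollback requested but no rollback model configured")
        return rollback_model
    # Unknown tiers fall back to the cheaper default rather than failing.
    return MODELS.get(tier, MODELS["default"])
```

Defaulting unknown tiers to flash is a design choice that keeps cost low by default; a stricter setup might raise an error instead.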

Claude Code

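In Claude Code, add DeepSeek as a provider profile and split the default profile from a deep-work profile. This migrates quickly without forcing every task onto pro-level cost. Claude Code speaks the Anthropic API format, so if your setup does not accept the OpenAI-compatible settings, point it at the Anthropic-compatible base URL (https://api.deepseek.com/anthropic) instead, for example through the ANTHROPIC_BASE_URL environment variable.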

{
  "providers": {
    "deepseek": {
      "type": "openai-compatible",
      "baseUrl": "https://api.deepseek.com",
      "apiKeyEnv": "DEEPSEEK_API_KEY"
    }
  },
  "profiles": {
    "default": {
      "provider": "deepseek",
      "model": "deepseek-v4-flash"
    },
    "complex-agent": {
      "provider": "deepseek",
      "model": "deepseek-v4-pro"
    },
    "rollback": {
      "provider": "openai",
      "model": "gpt-5.4"
    }
  }
}

Exact change: set your default Claude Code profile model to deepseek-v4-flash and add a separate complex-agent profile for deepseek-v4-pro.

Cursor

Cursor should be configured with model overrides by task type. If you set everything to v4-pro, you erase the cost-efficiency advantage.

{
  "cursor.ai.provider": "openai-compatible",
  "cursor.ai.baseUrl": "https://api.deepseek.com",
  "cursor.ai.apiKeyEnv": "DEEPSEEK_API_KEY",
  "cursor.ai.defaultModel": "deepseek-v4-flash",
  "cursor.ai.modelOverrides": {
    "multi_file_refactor": "deepseek-v4-pro",
    "complex_debugging": "deepseek-v4-pro",
    "quick_edits": "deepseek-v4-flash"
  },
  "cursor.ai.maxOutputTokens": 8192
}

Exact change: switch base URL to DeepSeek and map high-complexity tasks to deepseek-v4-pro, leaving low-risk tasks on flash.

Zed

Zed migration is easiest with profile-based separation. Keep “everyday” and “deep-work” profiles so teams can choose capability vs cost intentionally.

{
  "assistant": {
    "provider": "openai-compatible",
    "base_url": "https://api.deepseek.com",
    "api_key_env": "DEEPSEEK_API_KEY",
    "default_model": "deepseek-v4-flash",
    "profiles": {
      "everyday": {
        "model": "deepseek-v4-flash"
      },
      "deep-work": {
        "model": "deepseek-v4-pro"
      }
    }
  }
}

Exact change: set assistant.default_model to flash and assistant.profiles.deep-work.model to pro.

Direct API integration

If your app already uses OpenAI-style chat completions, DeepSeek can slot in with mostly configuration updates. Add reasoning controls deliberately so latency and spend remain predictable.

{
  "url": "https://api.deepseek.com/chat/completions",
  "headers": {
    "Authorization": "Bearer ${DEEPSEEK_API_KEY}",
    "Content-Type": "application/json"
  },
  "body": {
    "model": "deepseek-v4-pro",
    "messages": [
      {"role": "system", "content": "You are a precise engineering assistant."},
      {"role": "user", "content": "Find root cause and fix this production bug."}
    ],
    "thinking": {"type": "enabled"},
    "reasoning_effort": "high",
    "stream": false
  }
}

Recommended env variables:

DEEPSEEK_API_KEY=***
MODEL_DEFAULT=deepseek-v4-flash
MODEL_COMPLEX=deepseek-v4-pro
MODEL_ROLLBACK=gpt-5.4

Exact change: replace base URL and model routing while preserving your existing request orchestration.
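For teams without an OpenAI-style SDK already in place, the request above can be assembled with the Python standard library alone. This is a minimal sketch: the URL, headers, and body fields (including `thinking` and `reasoning_effort`) mirror the request shown in this section, while the `build_chat_request` and `send` helpers and the `reasoning` flag are hypothetical names.

```python
import json
import os
import urllib.request


def build_chat_request(prompt: str,
                       model: str = "deepseek-v4-flash",
                       api_key: str = "",
                       base_url: str = "https://api.deepseek.com",
                       reasoning: bool = False) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completions request."""
    api_key = api_key or os.environ.get("DEEPSEEK_API_KEY", "")
    body = {
        "model": model,
        "messages": [
            {"role": "system", "content": "You are a precise engineering assistant."},
            {"role": "user", "content": prompt},
        ],
        "stream": False,
    }
    if reasoning:
        # Reasoning controls as shown in this guide; enable them only where
        # the extra latency and spend are justified.
        body["thinking"] = {"type": "enabled"}
        body["reasoning_effort"] = "high"
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request, timeout_s: float = 120.0) -> dict:
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(request, timeout=timeout_s) as resp:
        return json.load(resp)
```

Keeping request construction separate from sending makes the payload easy to unit-test and lets the same builder serve the rollback provider by swapping `base_url` and `model`.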

Bedrock-style routing layer

Many teams use an internal multi-provider gateway even if they call it “Bedrock routing.” Add DeepSeek as a first-class provider and route by policy.

{
  "router": {
    "providers": {
      "deepseek": {
        "type": "openai-compatible",
        "base_url": "https://api.deepseek.com",
        "api_key_env": "DEEPSEEK_API_KEY"
      },
      "openai": {
        "type": "openai",
        "api_key_env": "OPENAI_API_KEY"
      }
    },
    "routes": {
      "default": {"provider": "deepseek", "model": "deepseek-v4-flash"},
      "complex": {"provider": "deepseek", "model": "deepseek-v4-pro"},
      "regulated": {"provider": "openai", "model": "gpt-5.5"}
    }
  }
}

Exact change: add the DeepSeek provider and route default and complex workloads there, while keeping a compliance-safe alternative lane for regulated traffic.
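Routing by policy can be sketched as a small lookup in which the compliance check runs first. The route table copies the config above; the `Task` fields and the `resolve_route` function are hypothetical, and how a task gets flagged as regulated or complex is left to your own classification step.

```python
from dataclasses import dataclass

# Route table copied from the router config in this section.
ROUTES = {
    "default": {"provider": "deepseek", "model": "deepseek-v4-flash"},
    "complex": {"provider": "deepseek", "model": "deepseek-v4-pro"},
    "regulated": {"provider": "openai", "model": "gpt-5.5"},
}


@dataclass(frozen=True)
class Task:
    """Hypothetical task descriptor produced by an upstream classifier."""
    is_regulated: bool = False
    is_complex: bool = False


def resolve_route(task: Task) -> dict:
    """Pick a route; compliance outranks capability, which outranks cost."""
    if task.is_regulated:
        return ROUTES["regulated"]
    if task.is_complex:
        return ROUTES["complex"]
    return ROUTES["default"]
```

Checking `is_regulated` before `is_complex` means a task that is both regulated and complex never leaves the compliance-safe lane.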

Vertex-style orchestration

On Vertex-centric stacks, a common approach is to keep orchestration in Vertex while delegating model calls through an internal gateway. This avoids rewiring every app.

{
  "vertex_orchestrator": {
    "backend": "external_llm_gateway",
    "gateway_url": "https://llm-gateway.internal/v1/chat/completions",
    "headers": {
      "X-Provider": "deepseek"
    },
    "model_map": {
      "default": "deepseek-v4-flash",
      "complex": "deepseek-v4-pro",
      "rollback": "gpt-5.4"
    }
  }
}

Exact change: update model map entries in your gateway-backed Vertex orchestration config instead of rewriting business logic.

Shared cost and risk controls you should add immediately

DeepSeek’s advantage is cost-efficient performance. Protect that advantage with hard controls from day one.

{
  "controls": {
    "daily_token_cap": 5000000,
    "per_task_token_cap": 200000,
    "timeout_ms": 120000,
    "retry_limit": 2,
    "escalation_rule": "flash_first_then_pro_if_confidence<0.7"
  },
  "compliance": {
    "require_data_classification": true,
    "require_region_policy_check": true
  },
  "metrics": [
    "completion_rate",
    "retry_count",
    "tokens_per_completed_task",
    "cost_per_completed_task"
  ]
}

Geopolitical risk is now part of architecture. If you ignore policy and procurement constraints, you can win technical tests and still lose deployment approval.
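The token caps and the flash-first escalation rule from the controls above could be enforced roughly as follows. The cap values and the 0.7 threshold come from that config; everything else is an assumption, in particular the confidence score, which the API is not assumed to return and which would have to come from your own evaluator (a grader model, a schema check, a test run).

```python
from dataclasses import dataclass
from typing import Callable, Tuple

FLASH = "deepseek-v4-flash"
PRO = "deepseek-v4-pro"

# Hypothetical signature: (model, prompt) -> (answer, confidence in 0..1, tokens used).
ModelCall = Callable[[str, str], Tuple[str, float, int]]


class BudgetExceeded(RuntimeError):
    """Raised when a token cap from the controls config is exceeded."""


@dataclass
class Budget:
    daily_token_cap: int = 5_000_000
    per_task_token_cap: int = 200_000
    used_today: int = 0

    def charge(self, tokens: int, task_total: int) -> None:
        """Record spend after a call; raise if either cap is exceeded."""
        if task_total > self.per_task_token_cap:
            raise BudgetExceeded("per-task token cap exceeded")
        if self.used_today + tokens > self.daily_token_cap:
            raise BudgetExceeded("daily token cap exceeded")
        self.used_today += tokens


def run_with_escalation(prompt: str, call_model: ModelCall, budget: Budget,
                        threshold: float = 0.7) -> Tuple[str, str]:
    """Try flash first; escalate to pro only if confidence < threshold."""
    answer, confidence, tokens = call_model(FLASH, prompt)
    task_total = tokens
    budget.charge(tokens, task_total)
    if confidence >= threshold:
        return answer, FLASH
    answer, _, pro_tokens = call_model(PRO, prompt)
    task_total += pro_tokens
    budget.charge(pro_tokens, task_total)
    return answer, PRO
```

Note that this sketch checks caps after each call, so a single oversized response can overshoot before the error is raised; a stricter variant would also pass a maximum output token limit on the request itself.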

Final rollout checklist

{
  "preflight": [
    "DeepSeek API key and model IDs verified",
    "Route-based canary enabled (10% traffic)",
    "Rollback path tested under load",
    "Parser/schema compatibility validated",
    "Compliance review completed"
  ]
}

If all five are true, scale. If not, keep rollout narrow. DeepSeek v4 can be a major margin and capability lever, but only when deployed with routing discipline and policy awareness.
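One way to implement the 10% route-based canary from the checklist is deterministic hash bucketing, sketched below. The 10% figure and the model names come from this guide; the `in_canary` and `choose_model` helpers and the choice of a request or user key are assumptions.

```python
import hashlib


def in_canary(request_key: str, percent: int = 10) -> bool:
    """Return True if this key falls in the canary bucket.

    Hashing a stable key (for example a user or tenant ID) keeps each
    caller on the same side of the split across requests.
    """
    digest = hashlib.sha256(request_key.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # 0..99
    return bucket < percent


def choose_model(request_key: str,
                 canary_model: str = "deepseek-v4-flash",
                 stable_model: str = "gpt-5.4",
                 percent: int = 10) -> str:
    """Send canary traffic to the new model, everything else to the current one."""
    return canary_model if in_canary(request_key, percent) else stable_model
```

Setting `percent` to 0 acts as an instant rollback, and raising it in steps widens the rollout without changing which callers were already in the canary.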

Now you know more than 99% of people. — Sara Plaintext