Scaling Codex to enterprises worldwide

OpenAI just dropped the enterprise version of Codex, and let's be real: this is the moment when AI stops being a cool demo at tech conferences and starts being actual infrastructure. Scaling from playground to "enterprises worldwide" is like watching your indie band suddenly get a world tour. The same technology that was impressing people on Twitter is now potentially rewriting code across Fortune 500 companies. That's either exciting or terrifying, depending on whether you're a developer shipping with it or a developer worried it's coming for your job.

The big move here is reliability and customization—enterprises don't want flashy, they want stable. OpenAI's talking about faster response times, better accuracy, and the ability to fine-tune Codex on proprietary codebases. Translation: your company can train an AI that actually understands your weird legacy systems and coding style guide. It's like having a junior developer who never sleeps and never complains about your naming conventions.

What's genuinely interesting is the shift from "AI writes code" to "AI writes YOUR code." The enterprise version isn't just faster—it's smarter about context. That's the real product here. Still, the elephant in the room remains: we're handing code generation to machines at scale, and the industry's still figuring out liability, security, and whether Codex accidentally memorized your proprietary algorithms. Exciting times, messy times.

OpenAI helps Hyatt advance AI among colleagues

OpenAI teaming with Hyatt is exactly the kind of AI rollout that actually matters: not a demo, not a keynote flex, just thousands of employees using ChatGPT Enterprise to move faster on real work. This is less “wow, futuristic” and more “your competitor’s operations team just got 15-25% quicker while yours is still stuck in inbox purgatory.” Boring on the surface, deadly in the market.

The smart part is deployment at the colleague level, not just leadership theater. If Hyatt is using AI across service operations, internal comms, and knowledge workflows, that compounds fast: fewer bottlenecks, faster decisions, and less time spent hunting for answers in 47 different systems. The risk, as always, is fake adoption—licenses bought, usage shallow, outcomes fuzzy.

My rating: 8.1/10 business move, 6.9/10 excitement factor. It won’t break the internet, but it might quietly break slower competitors who treat AI like a PR accessory instead of an operating system upgrade.

Codex for (almost) everything

OpenAI's Codex is basically ChatGPT's cooler younger sibling who actually ships products. While the world was still arguing about whether AI could write code, Codex was already doing it: translating plain English into Python, JavaScript, and basically anything that runs. It's the kind of tool that makes junior developers both excited and slightly nervous about their job security.

The real flex? Codex doesn't just regurgitate Stack Overflow posts. It understands context, handles edge cases, and can pivot between languages like a linguistic acrobat. Whether you're trying to automate a spreadsheet, debug a function, or just avoid actually learning regex, Codex is there with the receipts. The "almost everything" in the title is doing heavy lifting—it's not magic, but it's close enough to make developers genuinely rethink their workflow.

The implications are wild. This isn't sci-fi anymore; it's the infrastructure for the next generation of software development. It democratizes coding in ways that'll probably reshape the entire industry, for better or worse. If you're not already poking around with it, you're already behind. Rating: 8.5/10—impressive execution, slightly overhyped messaging, but fundamentally game-changing.

Introducing GPT-Rosalind for life sciences research

GPT-Rosalind is OpenAI making a very deliberate bet: stop chasing generic “AI for everything” headlines and go straight at life sciences, where one good model-assisted insight can be worth more than a thousand chatbot demos. This is the right battlefield—higher stakes, higher margins, and way less tolerance for fake magic.

The upside is massive if it can actually accelerate literature synthesis, hypothesis generation, and experiment planning without hallucinating its way into bad science. In this sector, “pretty good” is useless; researchers need traceability, reproducibility, and outputs that survive contact with peer review. If Rosalind can cut even 20-30% off early-stage research cycles with high reliability, labs will adopt fast.

My score: 8.5/10 strategic move, 7.1/10 confidence until we see hard validation data in the wild. Great name, great positioning, brutal execution bar. Biology is where AI hype goes to either become medicine or become a very expensive PowerPoint.

Accelerating the cyber defense ecosystem that protects us all

OpenAI just dropped their latest white paper on cyber defense, and honestly, it reads like a Silicon Valley thriller where the heroes actually want to save the day instead of hoarding the treasure. The core thesis? AI is turbocharging cybersecurity, but only if we build it right—collectively. Translation: stop gatekeeping your threat intel and start playing nice in the sandbox.

What's refreshing here is that OpenAI isn't just flexing their muscle and claiming they've solved cybercrime. Instead, they're making the case that this ecosystem thing—where researchers, defenders, and companies actually talk to each other—is the real MVP. It's almost quaint in a world where everyone usually pretends their security posture is classified. The irony? The more we share, the safer we all get. Revolutionary stuff, apparently.

The practical angle is solid too: they're talking about using AI for threat detection, vulnerability assessment, and incident response—all the things that make security teams lose sleep. Whether you buy their optimism about AI being humanity's new shield wall or you're the paranoid type who thinks we're arming the robots, the paper at least acknowledges both sides. It's thoughtful tech advocacy, which is increasingly rare. Rating: 7.5/10—smart thinking with just enough idealism to keep you awake at night.

3 new ways Ads Advisor is making Google Ads safer and faster

Google just dropped a wellness podcast for your ad account, basically. Their new Ads Advisor features sound like they're finally letting AI do what it does best: spot the obvious stuff humans miss while stressed at 2am. Better fraud detection, faster campaign setup, and smarter recommendations? That's not revolutionary—that's table stakes in 2024. But hey, if it saves even one marketer from accidentally bidding $500 on typos, we'll take the W.

The speed angle is what actually matters here. Google's selling us faster workflows and automated safety guardrails, which translates to less time fighting the platform and more time actually strategizing. That's the real win. The fraud prevention upgrade is solid too—nobody wants to hemorrhage budget on bot clicks while sipping their third espresso of the day.

Real talk: this is Google making their own platform less of a headache to navigate. It's smart, incremental, and exactly what advertisers have been begging for since, oh, the beginning of time. Not earth-shattering, but genuinely useful. Rating: 7/10—solid feature drops that actually address real pain points, even if they're not exactly mind-blowing.

7 ways to travel smarter this summer, with help from Google

Google's summer travel tips are doing exactly what they should: making life easier without pretending AI just invented the wheel. Sure, the article wraps practical advice—flight tracking, local restaurant recommendations, real-time transit info—in shiny AI language, but let's be honest, these features have been quietly saving us from travel disasters for years. The magic here isn't that Google discovered travel planning; it's that they've bundled these tools into a frictionless experience that actually works when you need it most.

What's refreshingly non-cringey about this piece is that it doesn't oversell the tech. Google Search already knows what you're looking for before you finish typing, and now they're just leaning into that superpower to help you find flight deals, check weather in three destinations simultaneously, and discover that hidden taco truck your phone somehow trusts more than your gut. It's the kind of AI integration that proves the best tech is the kind you barely notice using.

The real takeaway? This isn't about AI being revolutionary—it's about Google making summer trips marginally less chaotic, which is honestly all we ask. No hallucinations, no existential debates, just helpful information when you need it. That's worth a solid 7/10. Nothing groundbreaking, but genuinely useful for anyone who's ever spent 45 minutes researching whether the beach is worth the drive.

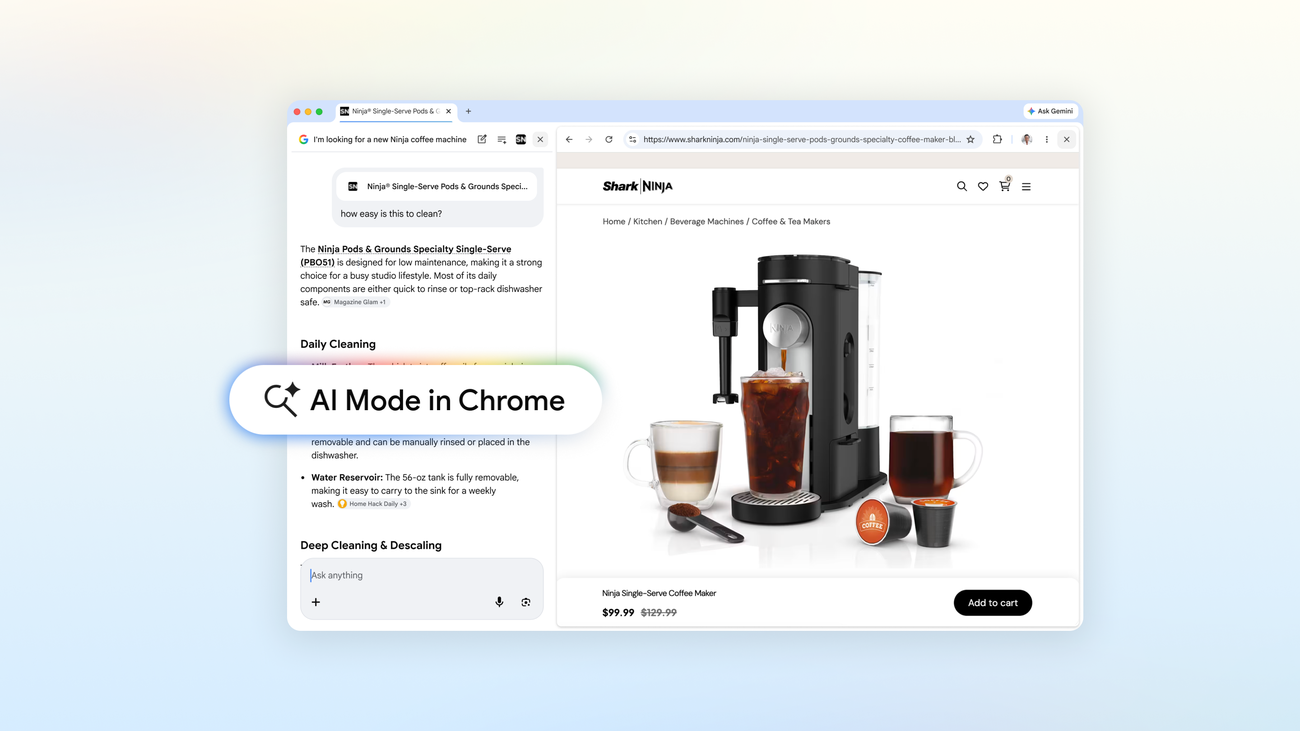

A new way to explore the web with AI Mode in Chrome

Google's rolling out "AI Mode" in Chrome, because apparently we needed another way to have our browser talk back to us. It's basically a shortcut to fire up AI without leaving your tab, which sounds convenient until you realize you're just trading one search box for another slightly shinier search box. The real question: is this innovation or just feature bloat with a machine learning skin?

Here's the thing—if this actually makes web exploration faster and smarter, it could be genuinely useful. But Google's been on a mission to inject AI into absolutely everything lately, and some of their experiments have landed with all the grace of a poorly trained chatbot. This one feels less "revolutionary" and more "we had the infrastructure, so why not?" That said, integrated AI tools are becoming table stakes, so Chrome staying competitive makes sense.

The real test is whether AI Mode actually *understands* what you're looking for or just becomes another way to get hallucinated answers dressed up in Chrome's four-color branding. If it's the former, neat. If it's the latter, it's just search with extra steps and more confidence about being wrong.

Rating: 6.5/10 — Practical feature, predictable execution, watch out for the hallucinations.

New ways to create personalized images in the Gemini app

Google's Gemini just got a personalization glow-up, and honestly, it's the kind of feature update that makes you realize how much we've been waiting for AI to actually *know* us. The ability to create custom images tailored to your vibe means you're no longer stuck with generic AI art that looks like it was made by committee. Your banana can finally be the banana you always dreamed of—whether that's a philosophical banana, a disco banana, or a banana wearing a tiny top hat. The specificity is chef's kiss.

What's clever here is that Google isn't just slapping "personalization" on a feature and calling it a day. They're building in memory and context, which means Gemini learns what makes your creative requests tick. No more explaining your entire aesthetic philosophy every single time you want to generate something. That's the kind of friction-reducing move that separates "nice to have" from "why didn't we have this sooner?" The tech is quietly doing the heavy lifting so you don't have to.

The potential downside? We're edging closer to a world where everyone's AI outputs are increasingly tailored and siloed, which could create some interesting echo chamber effects. But for now, the upsides are winning. If you spend any time generating images in Gemini, this update isn't just an improvement—it's a pretty significant quality-of-life boost.

Rating: 7.5/10 — Solid execution of a feature that should've existed sooner, with real practical value for creative users. Not groundbreaking, but genuinely useful.

Gemini 3.1 Flash TTS: the next generation of expressive AI speech

Google's Gemini 3.1 Flash TTS is basically what happens when you ask an AI to stop sounding like a GPS from 2008. The new model promises "expressive" speech, which is Silicon Valley speak for "we finally figured out how to make robots sound less robotic." Whether it actually nails emotional nuance or just adds some aggressive pausing is the million-dollar question nobody's asking yet.

What's genuinely interesting here is the speed angle. Flash TTS does the work faster than previous generations, which means less latency between your request and hearing a robot tell you about your calendar conflicts. For real-time applications—customer service bots, interactive games, whatever—that's actually useful. The catch? Expressive AI speech is only impressive if you're not waiting three seconds for it to process.

The real test will be whether this sounds like an actual human having an actual thought, or if it's just really good at *faking* it. Spoiler alert: if you're betting on the latter, you're probably safe. Still, it's a step forward in making AI interactions feel less like talking to a very polite vending machine. Rating: 7/10 for the tech direction, minus points for the inevitable overselling of "expressiveness."

Stay sharp. — Max Signal