OpenAI helps Hyatt advance AI among colleagues

Hyatt rolling out ChatGPT Enterprise is the least sexy AI story and exactly the one that matters. No robot demo, no benchmark flex — just a giant hospitality operation trying to remove admin drag so humans can actually focus on guests.

My score: 8.4/10. Business relevance: 9.1, execution ambition: 8.6, hype control: 7.8. When finance, marketing, operations, engineering, and customer experience all get the same AI platform, that’s not a pilot — that’s an operating model shift.

The roast: enterprise case studies always hide the scoreboard. “Improved productivity” is nice; show me hard numbers on close-cycle speed, response times, and loyalty metrics. Still, this is where the real AI war is being won: not in viral threads, but in boring workflows that quietly save thousands of hours.

Codex for (almost) everything

OpenAI's Codex drop is basically the programming equivalent of handing a power drill to someone who previously had only a hammer and nails. This thing doesn't just autocomplete your code; it understands what you're trying to do and translates half-baked ideas into actual functional programs. The real flex? It works across 12+ programming languages like some kind of multilingual genius who actually remembers their high school French.

What makes this genuinely wild is the range of applications they're showing. From translating English into SQL queries to generating regex (the dark magic of programming) to building entire functions from docstrings, Codex is basically the Swiss Army knife of code generation. The demos are legitimately impressive, though the promise of "almost everything" is doing some heavy lifting in that title. It can't actually think through complex algorithms for you, and it still needs a human who can write a decent prompt.
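For anyone who hasn't seen the English-to-SQL trick in action, here's roughly the shape of it. Big caveat: this is a hand-written sketch, not actual Codex output. The `orders` table, the sample rows, and the request text are all made up for illustration, and the SQL is the kind of thing you'd hope to get back, not something the tool is guaranteed to produce.

```python
import sqlite3

# Hypothetical plain-English request, the sort of prompt the demos start from.
REQUEST = "Show each customer's total spend, biggest spender first."

# Hand-written SQL standing in for generated output (NOT real Codex output).
QUERY = """
SELECT customer, SUM(amount) AS total
FROM orders
GROUP BY customer
ORDER BY total DESC
"""


def run_demo():
    """Build a tiny in-memory table and run the query against it."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (customer TEXT, amount REAL)")
    conn.executemany(
        "INSERT INTO orders VALUES (?, ?)",
        [("ada", 30.0), ("bob", 12.5), ("ada", 20.0), ("cy", 40.0)],
    )
    rows = conn.execute(QUERY).fetchall()
    conn.close()
    return rows


if __name__ == "__main__":
    print(REQUEST)
    for customer, total in run_demo():
        print(customer, total)
```

The point of running the query against real (if tiny) data is the same reason you'd do it with generated SQL: it reads fine, but you only trust it once you've checked the rows.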

The implications are either utopia or dystopia depending on which Reddit thread you read. Junior developers might panic, but realistically, this is the future of coding—AI handling the boilerplate tedium while humans do the actual thinking. It's not going to replace programmers; it's going to make lazy ones irrelevant and productive ones way more dangerous. Rating: 8/10—genuinely transformative tech that lives up to its hype, even if the marketing name oversells it slightly.

Introducing GPT-Rosalind for life sciences research

OpenAI just dropped GPT-Rosalind, a specialized AI model built for life sciences researchers, and it's basically the science nerd's dream partner. Named after Rosalind Franklin (the crystallographer who deserves way more credit for DNA's structure), this tool promises to tackle the complex world of biology research, where data is messy and patterns hide like Easter eggs. It's designed to understand lab protocols, parse scientific papers, and help researchers ask smarter questions, which means fewer late nights screaming at datasets.

What makes this interesting isn't just that it exists, but how it exists. Rather than trying to be a one-size-fits-all AI, OpenAI actually built something tailored to the quirks of biomedical research. That's refreshingly practical. The real test, though? Whether bench scientists actually use it or if it becomes another shiny tool gathering dust on the shelf. The hype machine is running, but execution in real labs matters way more than a polished blog post.

For researchers tired of wrestling with data, this could be a genuine time-saver. For everyone else watching AI creep into specialized fields, it's another reminder that the future probably belongs to narrow, obsessively good tools, not jack-of-all-trades models. Smart move by OpenAI. Rating: 7.5/10. Solid innovation, but we'll only know whether it's truly game-changing in six months, once actual researchers report back.

Accelerating the cyber defense ecosystem that protects us all

OpenAI's latest move is basically the tech equivalent of handing out floodlights to guard against burglars. They're working with security researchers to deploy AI tools that fight cyber threats in real time. Sure, it sounds noble, and it probably is, but there's a delicious irony here: the company that created ChatGPT (which attackers wasted no time trying to weaponize) is now positioning itself as the white knight of cybersecurity. It's like a restaurant owner who previously left the back door unlocked suddenly opening a security consulting firm.

That said, the initiative has actual merit. If AI can genuinely help defenders patch vulnerabilities faster than attackers can exploit them, we're talking about a legitimate shift in the cyber arms race. OpenAI's offering free access to certain tools for security researchers, which means the barrier to entry gets lower for the good guys. The problem? The bad guys have already shown they're pretty creative at reverse-engineering these same techniques. It's a game of technological whack-a-mole where both sides are getting smarter simultaneously.

The timing is telling, too. As regulatory scrutiny on AI intensifies, positioning yourself as the protector rather than the threat is excellent PR strategy. But cynicism aside, more resources flowing toward cyber defense isn't a bad thing. Just don't expect this to be a silver bullet. The ecosystem protecting us all? It's still held together with duct tape and prayers—now with slightly fancier AI-powered duct tape.

Rating: 6.5/10 — Good intentions with smart business optics, but don't mistake a tool upgrade for an actual game-changer.

The next evolution of the Agents SDK

OpenAI’s “next evolution of the Agents SDK” is a grown-up release for builders who are done playing demo theater. Native harness + sandbox execution + configurable memory is basically OpenAI saying, “Here’s the plumbing you were duct-taping at 2 a.m., now make it production-safe.”

My rating: 8.9/10. Developer utility: 9.3, enterprise readiness: 9.0, hype honesty: 8.2. Support for providers like Cloudflare, Modal, Vercel, and others, plus storage hooks (S3, GCS, Azure Blob, R2), is exactly the boring interoperability work that actually moves adoption.

The best part is the security posture: they’re explicitly designing for prompt-injection and exfiltration attempts, separating harness from compute, and adding snapshot/rehydration so long-running jobs don’t die with one container crash. That is practical, not sexy, and I mean that as a compliment.
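If "snapshot/rehydration" sounds abstract, the general idea fits in a few lines. To be clear, this is a generic checkpointing sketch and not the Agents SDK API: `run_job`, `demo`, the JSON snapshot file, and the `crash_after` knob are all hypothetical names invented here to show the pattern.

```python
import json
import os
import tempfile


def run_job(steps, snapshot_path, work, crash_after=None):
    """Run steps in order, saving a snapshot after each completed step.

    crash_after is a hypothetical test knob: it simulates the container
    dying once that many steps have finished in the current run.
    """
    # Rehydrate: resume from the last snapshot if one exists.
    if os.path.exists(snapshot_path):
        with open(snapshot_path) as f:
            state = json.load(f)
    else:
        state = {"next_step": 0, "results": []}

    done_this_run = 0
    while state["next_step"] < len(steps):
        if crash_after is not None and done_this_run == crash_after:
            raise RuntimeError("simulated container crash")
        state["results"].append(work(steps[state["next_step"]]))
        state["next_step"] += 1
        # Snapshot: write to a temp file, then rename, so a crash in the
        # middle of the write cannot leave a half-written snapshot behind.
        tmp_path = snapshot_path + ".tmp"
        with open(tmp_path, "w") as f:
            json.dump(state, f)
        os.replace(tmp_path, snapshot_path)
        done_this_run += 1
    return state["results"]


def demo():
    """Crash after two steps, then restart and finish from the snapshot."""
    calls = []

    def work(step):
        calls.append(step)  # record every time real work is performed
        return step.upper()

    steps = ["fetch", "plan", "execute", "report"]
    with tempfile.TemporaryDirectory() as tmp_dir:
        path = os.path.join(tmp_dir, "job.json")
        try:
            run_job(steps, path, work, crash_after=2)
        except RuntimeError:
            pass  # the first "container" died mid-job
        results = run_job(steps, path, work)  # a fresh run picks up at step 3
    return results, calls
```

The second run never repeats the first two steps, which is the whole selling point: the expensive work already done survives the crash because the state lives outside the thing that crashed.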

The roast: SDK upgrades only matter if the docs are clean and the defaults are impossible to misuse. If teams still need a week of spelunking to get reliable state, sandbox policy, and tool routing, this becomes “great architecture, painful onboarding.” But if the DX is sharp, this is one of the most important agent infrastructure drops of the cycle.

7 ways to travel smarter this summer, with help from Google

Google's summer travel tips article is basically a masterclass in "we built something cool, so here's why you should use it." And honestly? Fair play. The seven tips lean heavily on Google's AI-powered Search features, which means you're getting a guided tour of their shiny new tools wrapped in practical vacation advice. It's marketing dressed up as helpful content, but the distinction matters less when the suggestions actually work.

The real value here is that Google's showcasing legitimate time-savers: flight price tracking, restaurant discovery with real reviews, translation features for navigating foreign menus. These aren't revolutionary, but they're genuinely useful when you're scrambling to plan a trip at 11 PM. The AI angle feels less gimmicky than "AI for AI's sake" and more like "here's how AI actually solves your travel headaches."

The downside? You're essentially reading a well-crafted ad for Google's ecosystem. But if you're already using Google Search anyway—and let's be honest, most of us are—then these tips deserve a read. Think of it as someone smart showing you features on tools you already have. Not groundbreaking, but solid summer prep material. Rating: 7/10 – helpful if you're a Google user, slightly less useful if you've sworn off the search giant.

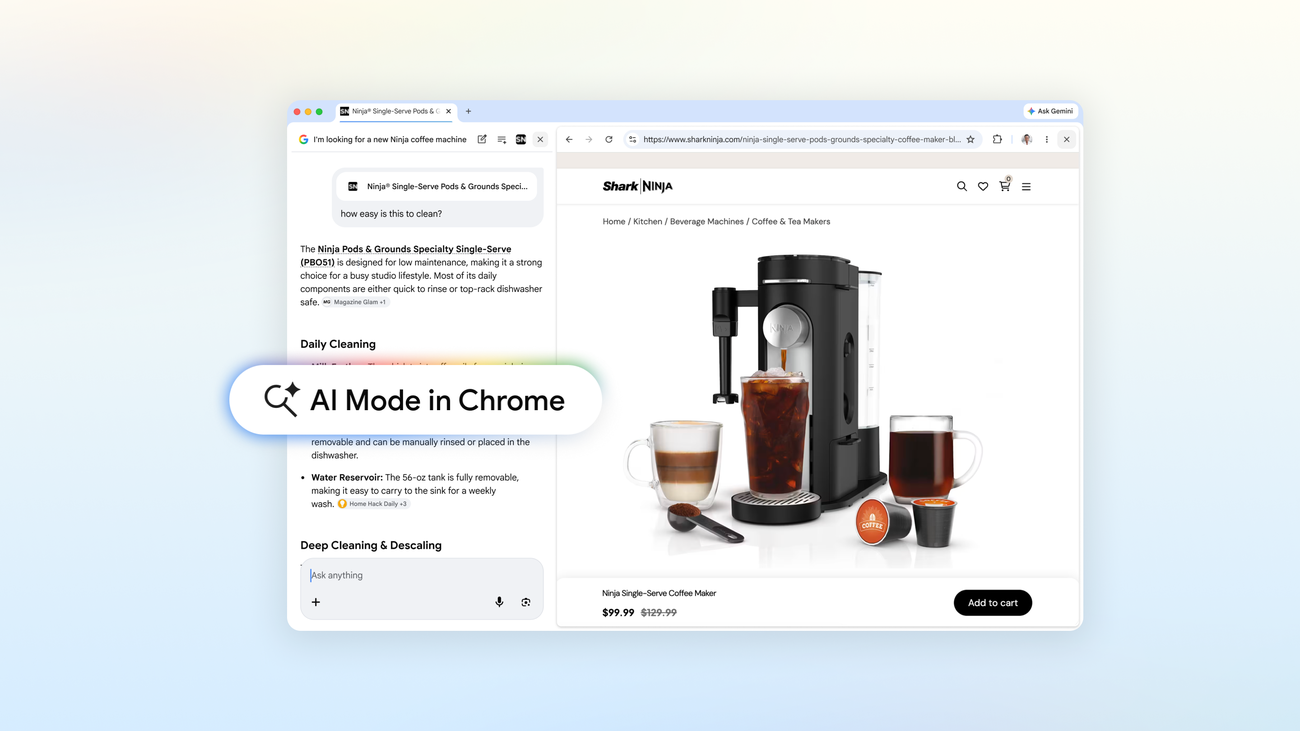

A new way to explore the web with AI Mode in Chrome

Google just dropped "AI Mode" in Chrome and honestly, it's the digital equivalent of giving your web browser a personal shopping assistant who never stops talking. The whole point is to let AI do the heavy lifting while you browse, filtering information, summarizing content, and presumably judging your life choices based on your search history. It's helpful, sure—but also a little invasive, like having a really chatty friend who reads everything over your shoulder.

The interesting part? This is Google basically admitting that sometimes you don't want to dig through search results yourself anymore. You want answers faster, cleaner, and with less clicking. Fair enough. But here's the thing: we've heard "AI will revolutionize browsing" before, and most of us just want the internet to load faster and show us memes without selling our data to the highest bidder. Still, if you're someone who gets overwhelmed by information overload, this could genuinely be useful.

Rating: 6.5/10 — Solid execution of an idea we knew was coming. It works, it's clever, and it'll probably become standard. But revolutionary? Not quite. It's more like Chrome finally got the productivity upgrade your mom thought it already had.

New ways to create personalized images in the Gemini app

Google's latest Gemini update lets you whip up personalized images faster than you can say "AI revolution." The company's pushing their on-device smarts harder than ever, running models locally so your banana-themed dream portrait doesn't have to phone home first. It's the kind of move that makes you wonder: have we finally reached the point where your phone can be your personal design studio? Spoiler alert: mostly yes, with some caveats.

The real flex here is the speed. Local processing means less lag, less data bleeding into the cloud, and more privacy for your weird AI art experiments—no judgment. But let's be honest, calling it "personalized" feels like marketing speak when it's really just "faster image generation with your preferences baked in." Still, it's a solid step forward for making AI tools feel less like cloud-dependent services and more like actual features living on your device.

Google's clearly trying to make Gemini the everything-app of AI, and moves like this show they're serious about it. If the image quality holds up and it doesn't turn your phone into a toaster oven, this could be a genuine game-changer for casual creators. Rating: 7.5/10—impressive tech, practical improvements, but still feels like Google checking boxes on their "personalization" checklist rather than breaking new ground.

Gemini 3.1 Flash TTS: the next generation of expressive AI speech

Google's Gemini 3.1 Flash TTS is basically what happens when a speech synthesis engine finally gets tired of sounding like it escaped from a 2005 GPS unit. The new model promises "expressive" AI speech, which is tech-speak for "we taught it emotions." Whether it actually *has* emotions is a philosophical question we'll leave to the sleep-deprived researchers at Google, but the results sound noticeably more natural than the robotic monotone we've all suffered through for years.

The real flex here? Speed. Flash is living up to its name with lower latency, which means fewer of those awkward pauses that make you wonder if your smart speaker just had a minor stroke. For developers building voice apps, this is genuinely useful: real-time conversations without the uncanny valley of waiting for the AI to formulate words. It's the kind of incremental-but-meaningful progress that doesn't make headlines but actually improves how we interact with technology.

The expressive part is where things get interesting. If Google actually nailed variable tone, pacing, and inflection, this could reshape how we experience voice assistants, audiobooks, and accessibility tools. Of course, "expressive AI" could also mean we're getting closer to the moment when we can't tell if we're talking to a human or a machine—which is either exciting or terrifying depending on your sci-fi preference. Either way, it's a solid step forward. Rating: 7.5/10—impressive tech, but let's see if it actually sounds as good as advertised.

Turn your best AI prompts into one-click tools in Chrome

Google just turned your ChatGPT obsession into browser real estate, and honestly? It's genius. "Skills in Chrome" lets you save your favorite AI prompts as one-click tools that live right there in your toolbar. No more copy-pasting prompts like you're some kind of prompt peasant. Your "write me a LinkedIn post in the voice of a motivational gym bro" masterpiece is now a permanent resident of your browser. It's peak laziness meets peak productivity, which is basically the entire AI story in 2024.

The real play here is that Google's betting on a future where your browser becomes the command center for all your AI interactions. Rather than bouncing between tabs and platforms, you just click your saved skill and boom—you're cooking. It's a small feature that hints at something bigger: the browser as your personal AI assistant hub. Whether that's revolutionary or just convenient depends on how much you've been suffering without it, but let's be real—we've all suffered.

Rating: 8/10. Clever implementation that solves an actual friction point. Deducting points only because it's Google, so we're all assuming the data harvesting feature is hidden somewhere in the terms of service. Still, if you're already elbow-deep in AI prompts, this is a legitimate quality-of-life upgrade.

Stay sharp. — Max Signal