Anthropic just released Claude Opus 4.7. In plain English, the pitch is that this version is better at hard, real-world work: coding, long multi-step tasks, and image-heavy technical tasks where older models often stumble.

If you’re tired of AI launches that sound impressive but don’t change your day-to-day workflow, this update is at least pointing at the right problem. Opus 4.7 is less about writing one nice answer and more about staying accurate and useful over long runs: planning, using tools, catching mistakes, and finishing complicated tasks without constant babysitting.

That’s the core story. Anthropic moved this model to general availability, kept pricing flat versus Opus 4.6, and framed it as a meaningful reliability and capability upgrade rather than just a benchmark flex.

What actually changed

First, Anthropic says Opus 4.7 is stronger on advanced software engineering, especially difficult tasks that previously required close human supervision. In practical terms, that means things like tracing bugs across multiple files, reasoning through long implementation chains, and recovering from tool or environment errors without giving up.

Second, it supposedly follows instructions more literally and consistently. That sounds small, but it matters a lot in production environments. Earlier models might skip constraints or improvise when prompts were ambiguous. A model that adheres more tightly to instructions can reduce costly mistakes, though it also means old prompts may need retuning.

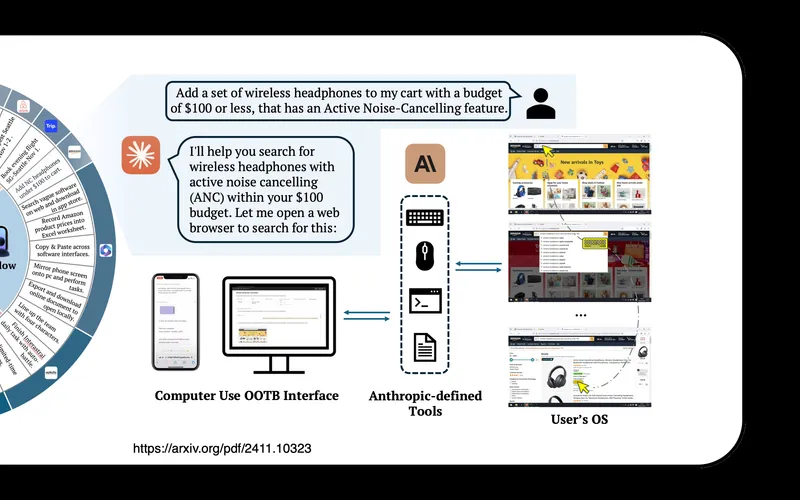

Third, vision got better. Anthropic says Opus 4.7 can handle higher-resolution images than prior Claude models. For regular users, this matters less for casual photo chat and more for dense screenshots, dashboards, diagrams, and technical docs where tiny visual details matter.

Fourth, Anthropic and partners claim better behavior under long-context pressure. In plain terms: fewer derailments in long conversations, fewer unexplained jumps in logic, and a better grip on what has already been decided.

Why this matters beyond AI enthusiasts

Most people won’t open an API dashboard, but they will use products built with these models behind the scenes. If Opus 4.7 actually improves reliability in coding and agent workflows, the ripple effects are practical:

- Faster software updates
- More bugs caught before release
- Better internal automation for support and ops teams
- More capable AI features in the apps people already use

This is important because the gap between “cool demo AI” and “production AI” is reliability. Teams don’t care if a model is brilliant 60% of the time and chaotic the other 40%. They care whether it behaves predictably enough to trust in workflows tied to money, legal risk, uptime, or customer experience.

Opus 4.7 is being positioned as a move toward that trust threshold.

The cybersecurity angle is part of the story too

Anthropic also tied this release to safety controls. They said Opus 4.7 includes safeguards designed to detect and block prohibited or high-risk cybersecurity misuse, while still creating a pathway for legitimate security professionals through a verification program.

This matters because frontier AI models are now dual-use by default. The same model that helps defenders find vulnerabilities can also help attackers if access is unmanaged. So this launch reflects a broader industry shift: labs are trying to ship stronger capabilities while tightening controls at the same time.

For regular people, that may translate into stricter access policies, more verification friction, and clearer usage boundaries on powerful models. Not always fun, but likely necessary as capability rises.

How much of this is hype?

Some of it is definitely launch framing. Every company highlights best-case testimonials from early partners. You should assume selection bias in those quotes.

But there are two reasons not to dismiss it outright. One, the reported improvements are concentrated in exactly the areas that matter for practical adoption: instruction following, tool reliability, long-horizon execution, and error recovery. Two, several different product teams are describing similar gains, which is more credible than one flashy benchmark chart, even allowing for the selection bias in who gets quoted.

So the reasonable stance is neither blind excitement nor cynicism. The signals are promising, but wait for broad real-world validation.

What regular users and teams should do next

If you’re an individual user, the immediate takeaway is to be more explicit in prompts. If Opus 4.7 is indeed more literal, clear constraints and success criteria will produce better results than vague requests.
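As a rough illustration of what "more explicit" can look like, here is a minimal Python sketch that assembles a prompt from a task, a list of constraints, and a list of success criteria. The function name `build_prompt`, the section labels, and the example task are all made up for illustration; this is not an Anthropic-recommended format, just one way to avoid leaving constraints implied.

```python
def build_prompt(task, constraints, success_criteria):
    """Assemble a plain-text prompt with explicit constraints and a definition of done.

    The layout (Task / Constraints / Done when) is a hypothetical convention,
    not an official template.
    """
    lines = [f"Task: {task}", "", "Constraints:"]
    lines += [f"- {c}" for c in constraints]
    lines += ["", "Done when:"]
    lines += [f"- {s}" for s in success_criteria]
    return "\n".join(lines)


# Illustrative values only.
prompt = build_prompt(
    task="Fix the failing date-parsing test in the billing module",
    constraints=[
        "Do not change public function signatures",
        "Do not add new dependencies",
    ],
    success_criteria=[
        "All existing tests pass",
        "The fix is covered by one new test",
    ],
)
print(prompt)
```

The point is not the helper itself but the habit: a literal-minded model can only respect the limits you actually write down.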

If you run a team, focus on one high-value workflow where reliability matters: bug triage, drafting technical support responses, document-heavy analysis, or code review assistance. Measure before-and-after outcomes: completion rate, correction rate, time-to-finish, and error severity.
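To make the before-and-after comparison concrete, here is a minimal Python sketch that summarizes the four metrics named above for two batches of logged tasks. Everything in it is an assumption for illustration: the `TaskRun` fields, the 0 to 3 severity scale, and the sample numbers, which are placeholders and not real results from any model.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskRun:
    completed: bool   # finished without a human taking over
    corrected: bool   # a reviewer had to fix the output
    minutes: float    # time to finish
    severity: int     # worst error, 0 (none) to 3 (critical); hypothetical scale


def summarize(runs):
    """Compute the four workflow metrics for one batch of runs."""
    if not runs:
        raise ValueError("need at least one run")
    n = len(runs)
    return {
        "completion_rate": sum(r.completed for r in runs) / n,
        "correction_rate": sum(r.corrected for r in runs) / n,
        "mean_minutes": mean(r.minutes for r in runs),
        "mean_severity": mean(r.severity for r in runs),
    }


def compare(before, after):
    """Return after-minus-before deltas for each metric."""
    b, a = summarize(before), summarize(after)
    return {key: a[key] - b[key] for key in b}


# Placeholder data, invented purely to show the shape of the comparison.
before = [
    TaskRun(True, True, 30.0, 2),
    TaskRun(False, True, 50.0, 3),
    TaskRun(True, False, 40.0, 0),
    TaskRun(True, True, 40.0, 1),
]
after = [
    TaskRun(True, False, 20.0, 0),
    TaskRun(True, True, 30.0, 1),
    TaskRun(True, False, 25.0, 0),
    TaskRun(True, False, 25.0, 1),
]

for metric, delta in compare(before, after).items():
    print(f"{metric}: {delta:+.2f}")
```

A positive delta is good for completion rate and bad for the other three, so read the signs accordingly. With only a handful of tasks per batch the differences are noise; log enough runs on the same kind of work before drawing conclusions.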

If those metrics improve, scale gradually. Don’t jump straight to full autonomy on critical systems. Keep human checkpoints where failures are expensive.

Also, don’t treat this as “set and forget.” As models get better at following instructions, weak prompting and weak process design become the bottleneck. Better model + sloppy workflow still gives sloppy outcomes.

Bottom line

Claude Opus 4.7 looks like a significant upgrade in the direction that actually matters: dependable performance on long, complex work. What happened is straightforward. Anthropic released a stronger model with better coding, better visual understanding, tighter instruction following, and built-in cyber safeguards, at the same price as Opus 4.6.

It matters because AI usefulness is increasingly about reliability, not novelty. For regular people, the takeaway is simple: if these gains hold up, the software and services you rely on should get built and improved faster, with fewer avoidable errors, while the industry simultaneously moves toward tighter controls for high-risk capabilities.

That’s the real shift. We’re moving from “AI that impresses in chat” to “AI that can be trusted in workflow.”

Now you know more than 99% of people. — Sara Plaintext